AI in Telehealth Incident Response: Risks and Benefits

Post Summary

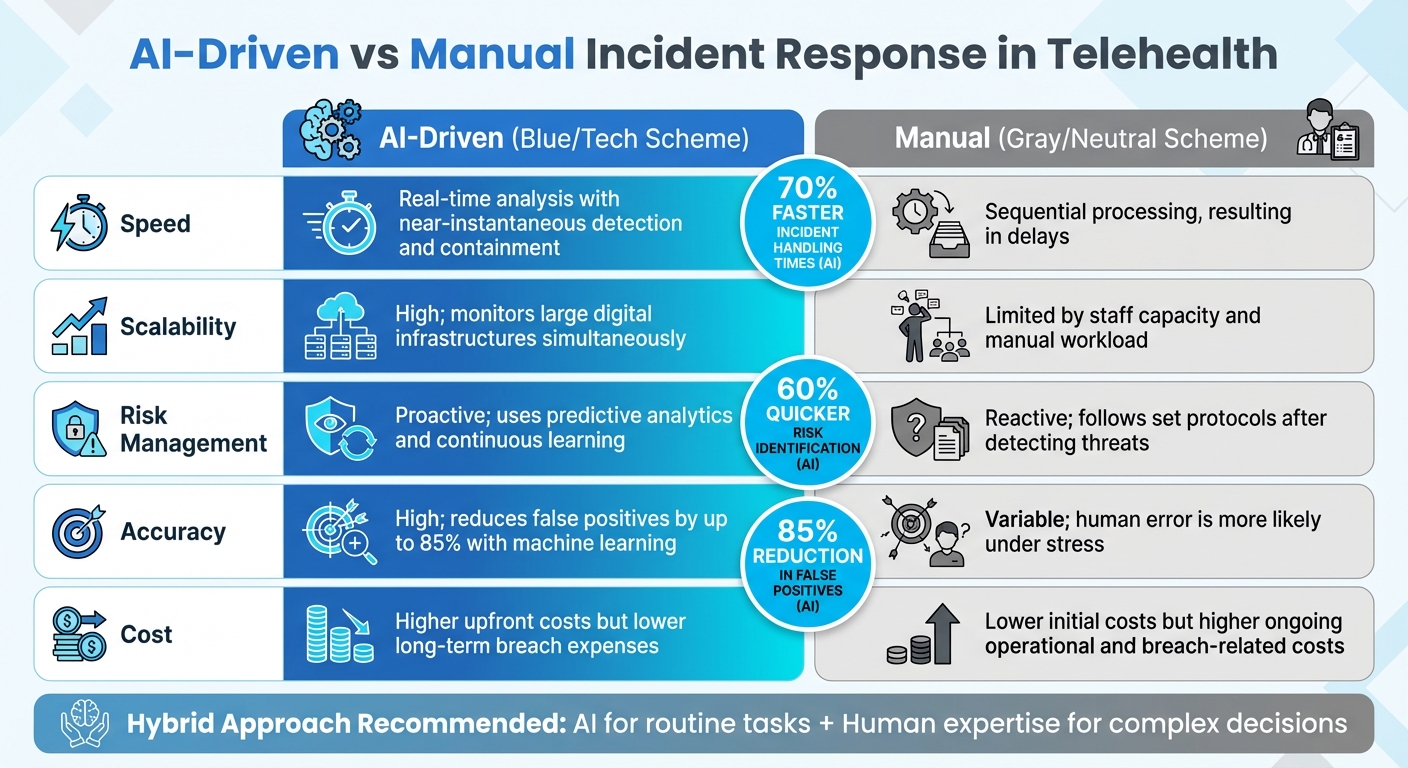

AI-driven telehealth incident response has been reported to cut incident handling times by up to 70%, speed up risk identification by 60%, and reduce false positives by 85% compared with manual methods. In remote patient monitoring, AI integrates data from devices such as pulse oximeters and glucose meters to trigger instant alerts. Predictive models can also flag sepsis hours in advance from ICU data and anticipate acute kidney injury and the need for mechanical ventilation up to 24 hours ahead. Manual review cannot match this kind of continuous predictive monitoring. AI handles multiple incidents simultaneously across devices, cloud platforms, and distributed environments without a proportional increase in staff. As a result, nursing stations can monitor more patients while security teams concentrate on high-priority threats.

The AI integration that delivers these benefits also widens the attack surface. Digitizing PHI across remote telehealth networks exposes organizations to data breaches, model poisoning, adversarial attacks, and ransomware. Attackers can exploit AI through corrupted training data or prompt injection, disrupting patient care. Hallucinations, model decay, and biases from incomplete training data produce false positives, false negatives, and missed threats during critical incidents. The Change Healthcare ransomware attack showed how disruption of AI-integrated systems can last six months, cause direct patient harm, and contribute to hospital closures. The opacity of AI decision-making also complicates accountability. When an AI system fails to detect a breach or responds inappropriately, tracing responsibility is difficult without robust governance frameworks.

Manual incident response offers three strengths. Human judgment supports nuanced decisions. Clear accountability ties each action to a specific person. Contextual analysis handles complex, novel threats in ways algorithms cannot replicate. However, manual response cannot match the speed of AI-driven detection. Telehealth platforms generate thousands of log entries each day, and analysts who sift through them reactively let incidents linger undetected. Manual processes also hit hard scalability limits, because each new device or data stream adds workload that requires additional staff rather than additional computing resources. Alert fatigue, cognitive bias, and inconsistent decision-making under sustained high alert volumes cause analysts to miss genuine threats. Incomplete documentation creates HIPAA compliance gaps. Manual processes also struggle to keep up with emerging attack methods such as data poisoning and model tampering.

The hybrid model assigns AI routine threat detection, real-time monitoring, and automated containment of known threat patterns, drawing on its speed and scalability. Human analysts retain complex investigations, strategic decisions, verification of AI-generated alerts, bias assessment, and ethical and regulatory compliance determinations. This division captures AI's efficiency on high-volume routine work. It also reserves human judgment for ambiguous, novel, and high-stakes incidents, where AI's limitations create patient safety and accountability risks. Tower Health's experience with AI-assisted risk assessment shows the efficiency available. Censinet RiskOps™ let three FTEs return to their primary roles, and only two FTEs are now needed to handle significantly more risk assessments.

Healthcare organizations must adopt governance frameworks that include regular bias testing throughout the AI lifecycle, continuous monitoring for model performance degradation, and fairness metrics measuring equity in AI detection outcomes. Bias assessments at every stage of development and deployment identify training data gaps before they cause systematic false negatives for specific attack patterns or patient populations. Routine audits detect emerging bias and drift before they produce clinical consequences. Governance committees with cross-functional representation across IT security, clinical operations, compliance, and ethics provide the oversight structure that ensures AI decision-making meets healthcare regulatory standards. Incident response plans must address AI-specific failure modes — hallucinations, model poisoning, adversarial manipulation — with documented escalation procedures routing AI failures to human review.

Generic incident response tools fail in healthcare because they cannot address the complexity of patient data protection, clinical application security, HIPAA compliance, and medical device risk simultaneously. As Matt Christensen, Sr. Director GRC at Intermountain Health, explains: healthcare is the most complex industry and tools not built specifically for healthcare will fail when applied to it. Censinet RiskOps™ is designed specifically for healthcare — streamlining third-party and enterprise risk assessments, supporting PHI management, enabling cybersecurity benchmarking, and providing collaborative risk management across healthcare organizations. Its AI-driven features combine automation with human-guided decision-making, ensuring the oversight that HIPAA compliance and patient safety require. Tower Health's experience demonstrates that healthcare-specific AI implementation enables more risk assessments with fewer staff while maintaining the human judgment that complex healthcare incidents demand.

AI is changing how telehealth handles cybersecurity threats, offering faster detection, scalability, and automation. It can reduce incident handling times by up to 70% and cut false positives by 85%. However, it introduces risks such as data breaches, algorithmic errors, and accountability challenges. Manual methods, by contrast, provide human judgment but struggle with speed, scalability, and consistency. A hybrid approach - AI for routine tasks and humans for complex decisions - balances efficiency with oversight and strengthens patient data protection.



1. AI-Driven Telehealth Incident Response

AI is revolutionizing how telehealth systems respond to cybersecurity threats by constantly monitoring network activity, system logs, and user behavior. For example, a leading healthcare provider leverages AI to analyze patient data, swiftly identify breaches, and ensure rapid, compliant responses [4]. Let’s delve into how AI impacts threat detection, scalability, security risks, and accountability in telehealth environments.

Threat Detection Speed

Organizations using AI for incident response have reported impressive results, including up to 70% faster incident handling times and 60% quicker risk identification compared to traditional methods [4]. In remote patient monitoring (RPM), AI integrates data from devices like pulse oximeters and glucose meters, triggering instant alerts. It can also predict critical events like sepsis hours in advance using ICU data, or anticipate acute kidney injury (AKI) and the need for mechanical ventilation up to 24 hours ahead [7]. This real-time detection enables swift root cause analysis and automated responses, reducing damage and the need for manual intervention.

Scalability

AI is particularly effective at managing the massive data loads generated by growing telehealth systems, without requiring a proportional increase in staff. It can process multiple incidents simultaneously across devices, cloud platforms, and other digital environments while cutting false positives by 85% [4]. This scalability allows nursing stations to monitor more patients at once, improving resource allocation and enabling security teams to concentrate on high-priority threats [7]. However, these benefits come with new challenges, particularly around privacy and security.

Privacy and Security Risks

While AI offers numerous advantages, it also introduces vulnerabilities. The digitization of protected health information (PHI) across remote networks creates new opportunities for data breaches, model poisoning, adversarial attacks, and ransomware [4][5][7][9]. Hackers can exploit AI systems through corrupted training data or prompt injections, potentially disrupting patient care. High-profile ransomware incidents, like the Change Healthcare breach, highlight how AI-integrated systems can become prime targets, leading to extended service outages [7][9]. Balancing robust security with HIPAA compliance remains a critical challenge for healthcare providers.

Reliability and Accountability

AI’s reliability and accountability present additional hurdles. Issues like hallucinations, model decay, and biases from incomplete training data can result in false positives, false negatives, or missed threats during crucial moments [3][5][7][8]. To stay effective, AI models require ongoing updates and rigorous testing. The opaque nature of AI decision-making complicates accountability - when an AI system fails to detect a breach or responds inappropriately, tracing responsibility becomes difficult. Tools like Censinet RiskOps™ assist with third-party vendor risk assessments and PHI management, but human oversight remains vital to ensure AI actions meet ethical and regulatory standards [3][5][6][7].

2. Manual Telehealth Incident Response

Manual incident response relies entirely on human analysts to review network traffic, system logs, and user behavior without the aid of automation. Security teams investigate alerts manually, document their findings, and follow established playbooks to coordinate responses. While this approach allows for nuanced decision-making, it often leads to delays and struggles to scale effectively [3][5].

Threat Detection Speed

Manual methods simply can't keep up with the speed of AI-driven systems in detecting threats. Human analysts must sift through data reactively, which means security incidents can linger undetected for longer periods. For telehealth platforms generating thousands of log entries every day, this process becomes overwhelming and error-prone. In high-stakes scenarios, these delays can compromise patient safety and extend the exposure of Protected Health Information (PHI).

Scalability

As telehealth organizations grow, manual incident response faces significant scalability hurdles. Each new device or data stream adds to the workload, increasing the demand for more staff [3][4]. Unlike AI tools that can handle multiple incidents simultaneously, human-led processes are limited by the number of analysts available. For healthcare providers managing remote patient monitoring across hundreds - or even thousands - of patients, manual analysis becomes unsustainable. Organizations are then forced to either hire more security personnel, driving up costs, or accept slower response times and reduced monitoring coverage.
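A back-of-the-envelope calculation makes the linear link between alert volume and headcount concrete. The sketch assumes a hypothetical per-alert triage time and six productive analyst hours per day. These figures are illustrative, not benchmarks.

```python
import math

# Hypothetical assumption: 6 focused triage hours in an 8-hour analyst shift.
PRODUCTIVE_MINUTES_PER_ANALYST = 6 * 60


def analysts_needed(alerts_per_day: int, minutes_per_alert: float,
                    productive_minutes: float = PRODUCTIVE_MINUTES_PER_ANALYST) -> int:
    """Analysts required to triage every alert by hand within one day."""
    total_minutes = alerts_per_day * minutes_per_alert
    # Round up: a fraction of an analyst's workload still requires another person.
    return math.ceil(total_minutes / productive_minutes)
```

Assume 2,000 alerts per day at five minutes each: `analysts_needed(2000, 5)` returns 28. Doubling the volume to 4,000 alerts returns 56, so headcount grows in lockstep with the device fleet.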

Privacy and Security Risks

Handling patient data manually introduces vulnerabilities, even if it reduces certain technological breach risks. Every human interaction - whether it’s reviewing logs, documenting incidents, or sharing findings - creates opportunities for PHI breaches. Errors in data handling, such as weak access controls or accidental exposure, can lead to serious consequences. In past cyberattacks, manual processes have been linked to extended disruptions and compromised patient care [7]. Additionally, the documentation and communication required in manual workflows can leave sensitive information exposed if audit trails are compromised.
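Redacting obvious identifiers from incident notes before they leave the response team reduces the exposure created by sharing findings. The patterns below are a small, hypothetical set. They cover far fewer than the 18 identifier types listed in HIPAA's Safe Harbor de-identification method, so this is not a de-identification tool.

```python
import re

# Hypothetical, deliberately incomplete patterns for a few U.S. identifier formats.
# Order matters: more specific patterns run first.
_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:#]?\s*\d+\b", re.IGNORECASE),
}


def redact(note: str) -> str:
    """Replace matched identifiers with [TYPE] placeholders before sharing a note."""
    for label, pattern in _PATTERNS.items():
        note = pattern.sub(f"[{label}]", note)
    return note
```

For example, `redact("Patient MRN: 48213 called from 555-867-5309")` yields `"Patient [MRN] called from [PHONE]"`.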

Reliability and Accountability

While manual processes offer accountability by tying actions to specific individuals, they come with their own set of challenges, such as alert fatigue, cognitive biases, and inconsistent decision-making [4][5]. Security teams that are overwhelmed by constant alerts may miss genuine threats buried among false positives. The quality of manual analysis often depends on the expertise and focus of individual analysts, leading to inconsistent responses to similar incidents. Unlike the predictability of automated systems, human-led efforts can vary widely. This inconsistency also risks incomplete documentation, potentially leading to HIPAA compliance issues [7]. Furthermore, human analysts may struggle to keep up with new attack methods targeting healthcare AI systems, such as data poisoning or model tampering [5]. These challenges highlight the ongoing struggle to balance the efficiency of automation with the judgment of human experts.

Advantages and Disadvantages

AI vs Manual Telehealth Incident Response: Speed, Scalability, and Cost Comparison

When deciding between AI-driven and manual incident response methods in telehealth, healthcare organizations must weigh the benefits of speed against the need for human judgment. AI excels at reducing the time needed for incident handling and identifying risks compared to traditional manual approaches [1].

Manual response methods, on the other hand, often fall short on speed and scalability. Tower Health's experience shows how automation can expand capacity while reducing the need for full-time staff. As Terry Grogan, CISO at Tower Health, noted:

"Censinet RiskOps allowed 3 FTEs to go back to their real jobs! Now we do a lot more risk assessments with only 2 FTEs required"

| Feature | AI-Driven | Manual |
|---|---|---|
| Detection speed | Real-time analysis with near-instantaneous detection and containment | Sequential processing, resulting in delays |
| Scalability | High; monitors large digital infrastructures simultaneously | Limited by staff capacity and manual workload |
| Approach | Proactive; uses predictive analytics and continuous learning | Reactive; follows set protocols after detecting threats |
| Accuracy | High; reduces false positives by up to 85% with machine learning | Variable; human error is more likely under stress |
| Cost | Higher upfront costs but lower long-term breach expenses | Lower initial costs but higher ongoing operational and breach-related costs |

Despite these strengths, AI is not without its challenges. Its effectiveness hinges on the quality of the data it processes - poor data can lead to flawed conclusions [1]. Additionally, relying on a small number of vendors for healthcare technology introduces a significant risk. A single cyberattack could potentially disrupt care for millions of patients [2].

To address these limitations, many organizations now use a hybrid approach: AI handles routine threat detection, while human experts manage complex investigations. This combination lets security teams keep up with the volume of telehealth operations without sacrificing the depth of analysis that advanced threats require.
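The hybrid division of labor can be written as an explicit routing rule. In this minimal sketch, three choices are hypothetical policy assumptions: the `Alert` fields, the 0.95 confidence floor, and sending every PHI-related alert to a human. It does not describe any particular product.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_CONTAIN = "auto_contain"  # AI may act without waiting for an analyst
    HUMAN_REVIEW = "human_review"  # an analyst verifies before any action


@dataclass(frozen=True)
class Alert:
    alert_id: str
    known_pattern: bool  # matches a threat pattern the model was built for
    confidence: float    # model confidence, 0.0 to 1.0
    phi_involved: bool   # alert touches protected health information


# Hypothetical policy value; each organization would set its own.
CONFIDENCE_FLOOR = 0.95


def route(alert: Alert) -> Route:
    """Automate only high-confidence, known-pattern alerts that do not involve PHI."""
    if alert.phi_involved:
        # Possible regulatory consequences: keep a human in the loop.
        return Route.HUMAN_REVIEW
    if alert.known_pattern and alert.confidence >= CONFIDENCE_FLOOR:
        return Route.AUTO_CONTAIN
    # Novel or uncertain alerts are escalated rather than handled automatically.
    return Route.HUMAN_REVIEW
```

An explicit rule like this is also auditable. A reviewer can see exactly which alerts the AI may contain on its own.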

Conclusion

AI in telehealth incident response offers measurable advantages. Organizations have reported up to a 70% reduction in incident handling times, a 60% improvement in risk identification speed, and an 85% drop in false positives [4]. These gains strengthen patient data protection. However, AI also introduces challenges such as bias, residual detection errors, and vulnerabilities stemming from model errors or data manipulation. The Change Healthcare ransomware attack highlights the stakes: disruptions to AI-integrated systems lasting six months or more, leading to patient harm and even hospital closures [7].

To navigate these challenges, healthcare organizations should adopt a hybrid approach that blends AI automation with human expertise. While AI is excellent for routine threat detection and real-time monitoring, human professionals are essential for handling complex investigations and making critical strategic decisions. This combination helps mitigate AI's limitations while retaining its speed and scalability.

The success of this hybrid model depends on using solutions tailored specifically for healthcare. Generic tools often fail to address the complexities of patient data, clinical applications, and device security. As Matt Christensen, Sr. Director GRC at Intermountain Health, explains:

"Healthcare is the most complex industry... You can't just take a tool and apply it to healthcare if it wasn't built specifically for healthcare"

Censinet RiskOps™ addresses these challenges by offering a platform designed for healthcare-specific needs. It streamlines third-party and enterprise risk assessments, supports cybersecurity benchmarking, and enables collaborative risk management across healthcare organizations. Its AI-driven features combine automation with human-guided decision-making, ensuring critical oversight. This balance allows healthcare organizations to scale their risk management efforts without compromising patient safety, maintaining the essential equilibrium between automation and human judgment in telehealth security.

FAQs

What telehealth incidents should AI handle vs. humans?

AI is best suited for tasks that demand speed and automation, such as detecting anomalies, flagging security breaches, and executing containment protocols. These capabilities are crucial for combating threats like ransomware and phishing. By handling these tasks efficiently, AI helps reduce downtime and safeguards sensitive data.

However, humans play a vital role in managing complex decisions, verifying AI-generated alerts, addressing potential biases, and ensuring compliance with ethical and legal standards. When combined, AI and human oversight create a balanced approach, allowing for effective and responsible telehealth incident response.

How can AI incident response stay HIPAA-compliant with PHI?

AI incident response plays a key role in maintaining HIPAA compliance by prioritizing patient data security. This involves enforcing strict data access controls, ensuring that only authorized personnel can view sensitive information. Additionally, encryption protects data both in transit and at rest, reducing the risk of breaches.

To track and monitor data usage, audit trails are essential. They provide a detailed log of who accessed the data, when, and for what purpose, helping to identify any potential misuse. Regular risk assessments are also critical, as they help uncover vulnerabilities that could lead to unauthorized sharing of Protected Health Information (PHI) or risks of re-identification.

Lastly, human oversight ensures that AI-generated decisions adhere to HIPAA requirements, offering an extra layer of accountability. Together, these measures work to safeguard patient data and ensure compliance with HIPAA standards.

How do you validate and govern AI to prevent bias and drift?

Healthcare organizations aiming to minimize bias and drift in AI systems should adopt robust governance frameworks. These frameworks should prioritize regular bias testing, continuous monitoring, and clear oversight to ensure the systems remain reliable and fair.

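Key actions include:

- Bias assessments throughout the AI lifecycle: Regularly evaluate for potential biases at every stage of development and deployment.

- Fairness metrics: Implement measurable standards to gauge and maintain equity in outcomes.

- Routine audits: Conduct periodic reviews to identify and address any emerging issues.

Additionally, forming governance committees and developing incident response plans ensures the organization can adapt to evolving data and conditions while maintaining safety, compliance, and fairness.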

Key Points:

Why does telehealth incident response present unique challenges that neither purely AI nor purely manual approaches can fully address?

- Telehealth's distributed attack surface exceeding what manual processes can monitor — Telehealth platforms integrate patient-facing applications, remote monitoring devices, clinician portals, cloud infrastructure, and third-party API connections into a distributed environment that generates thousands of log entries daily. Manual security teams monitoring this environment through sequential log review cannot detect threats in real time — incidents remain undetected until the next review cycle, extending exposure duration and patient safety risk.

- Patient safety stakes making both false positives and false negatives dangerous - In telehealth incident response, both AI failure modes carry patient safety consequences. False positives, where AI incorrectly flags benign activity as a threat, overwhelm security teams, create alert fatigue, and divert attention from genuine threats. False negatives, where AI misses actual threats, let breaches and ransomware progress until clinical operations are disrupted. Human judgment can filter out false positives. However, manual methods cannot achieve the detection speed needed to shrink the exposure window that false negatives create.

- Change Healthcare demonstrating the systemic risk of AI-integrated telehealth disruption — The Change Healthcare ransomware attack demonstrated that AI-integrated healthcare system disruptions carry consequences beyond data exposure — six months of service disruption, direct patient harm from care delays, and contributions to hospital financial distress severe enough to threaten closures. This outcome establishes that telehealth incident response is not primarily a data security challenge; it is a patient care continuity challenge where the consequences of failure are measured in patient outcomes.

- Model poisoning and adversarial attacks targeting AI as an emerging attack vector - As healthcare organizations deploy AI-driven incident response, attackers are targeting the AI systems themselves. Model poisoning corrupts training data to create systematic blind spots. Adversarial attacks craft inputs that cause AI to produce incorrect outputs. Both exploit the opacity of AI decision-making, and without explicit AI governance frameworks, neither traditional security controls nor manual review can reliably detect them.

- HIPAA accountability requirements creating individual responsibility obligations AI cannot satisfy - The HIPAA Security Rule requires organizations to demonstrate that specific administrative, technical, and physical safeguards are implemented and functioning. That demonstration requires human accountability for the decisions and actions AI systems take autonomously. When an AI system incorrectly classifies a PHI access event as non-reportable, the organization, not the AI, bears the regulatory consequence. Human oversight of AI incident response decisions is therefore a HIPAA compliance necessity, not merely a best practice.

- Vendor concentration risk amplifying AI-integrated telehealth vulnerabilities - The concentration of healthcare technology among a small number of AI vendors creates systemic risk. A single cyberattack on a widely used AI incident response platform could disrupt care for millions of patients across many healthcare organizations at once. Healthcare organizations should therefore assess their AI vendor dependencies as part of third-party risk management. That assessment should cover each vendor's cybersecurity posture and business continuity capabilities alongside the platform's detection performance.
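Both error types matter, and a single alerting threshold trades one for the other. The sketch below counts false positives and false negatives at several thresholds over a small, made-up set of model scores and ground-truth labels. The data is hypothetical and only illustrates the trade-off.

```python
def error_counts(scores: list[float], labels: list[bool], threshold: float) -> tuple[int, int]:
    """Return (false_positives, false_negatives) when scores >= threshold are flagged."""
    fp = sum(1 for s, is_threat in zip(scores, labels) if s >= threshold and not is_threat)
    fn = sum(1 for s, is_threat in zip(scores, labels) if s < threshold and is_threat)
    return fp, fn


# Hypothetical model scores and ground truth (True = genuine threat).
SCORES = [0.95, 0.80, 0.60, 0.40, 0.30, 0.10]
LABELS = [True, False, True, False, True, False]

if __name__ == "__main__":
    for t in (0.2, 0.5, 0.9):
        fp, fn = error_counts(SCORES, LABELS, t)
        print(f"threshold={t}: false positives={fp}, false negatives={fn}")
```

On this toy data, raising the threshold from 0.2 to 0.9 shifts the errors from two false positives and no false negatives to no false positives and two false negatives.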

What specific capabilities make AI superior to manual methods for telehealth threat detection and what quantified outcomes demonstrate this advantage?

- 70% faster incident handling times eliminating the delay window where breach scope expands — The 70% reduction in incident handling times that AI-driven systems deliver is not merely an efficiency metric — it is a patient safety metric. In healthcare, the time between initial breach and containment determines how much PHI is exfiltrated, how many systems are compromised, and whether ransomware achieves the encryption breadth that triggers clinical disruption. Faster handling directly limits breach scope and reduces both regulatory penalty exposure and clinical impact.

- 60% faster risk identification enabling proactive rather than reactive response — The 60% improvement in risk identification speed converts incident response from a reactive activity — responding to confirmed breaches — to a proactive activity — identifying and containing threats before they achieve their objectives. In telehealth environments where patient data is continuously transmitted from remote devices, early threat identification prevents the data accumulation that makes breaches more severe.

- 85% false positive reduction enabling human attention concentration on genuine threats — Human security analysts facing high volumes of false positive alerts develop alert fatigue — a cognitive state in which genuine threats are missed because they appear indistinguishable from the constant background of false alarms. AI's 85% false positive reduction allows human analysts to apply their judgment to the alerts that genuinely require it rather than investigating hundreds of false alarms to find the genuine threats hidden within them.

- Predictive clinical monitoring up to 24 hours ahead of AKI and ventilation events - AI can integrate remote monitoring data to predict acute kidney injury and mechanical ventilation needs up to 24 hours in advance. This extends AI's value in telehealth beyond cybersecurity incident response to patient safety. The same continuous data integration that makes AI effective at detecting security threats also makes it effective at predicting clinical deterioration.

- Simultaneous multi-device, multi-platform monitoring without proportional staffing — AI's ability to simultaneously monitor activity across devices, cloud platforms, EHR systems, remote monitoring networks, and third-party integrations without proportional staff increases addresses the fundamental scalability limitation of manual telehealth security programs. Healthcare organizations expanding their telehealth services cannot hire security staff proportionally with their patient population growth; AI-driven monitoring scales with the environment rather than with headcount.

- Continuous learning enabling adaptation to evolving telehealth-specific attack patterns — AI systems continuously updated with new threat intelligence and attack pattern data adapt their detection models to emerging telehealth-specific attack methods — including healthcare-targeted phishing campaigns, medical device firmware exploitation, and telehealth platform-specific vulnerabilities — faster than manual analysts can maintain knowledge currency across the breadth of the telehealth attack surface.
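The practical effect of the 85% false positive reduction depends on how noisy the alert stream was to begin with. The worked example below uses hypothetical inputs that do not come from the cited sources:

- 1,000 alerts per day.
- 90% of those alerts are false positives.
- AI filtering removes no genuine alerts.

```python
def alert_queue(total_alerts: int, fp_share: float, fp_reduction: float) -> tuple[int, int]:
    """Return (genuine_alerts, remaining_false_alerts) after false positive filtering.

    fp_share: fraction of alerts that are false positives before filtering.
    fp_reduction: fraction of those false positives removed (0.85 for 85%).
    Assumes filtering never discards a genuine alert.
    """
    false_alerts = round(total_alerts * fp_share)
    genuine_alerts = total_alerts - false_alerts
    remaining_false = round(false_alerts * (1 - fp_reduction))
    return genuine_alerts, remaining_false


def genuine_share(genuine_alerts: int, false_alerts: int) -> float:
    """Fraction of the analyst queue that is a genuine threat."""
    total = genuine_alerts + false_alerts
    return genuine_alerts / total if total else 0.0
```

Under these assumptions, `alert_queue(1000, 0.9, 0.85)` returns `(100, 135)`. The analyst queue shrinks from 1,000 to 235 alerts, and the share of genuine threats rises from 10% to about 43%.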

What are the specific AI risks that healthcare organizations must govern in telehealth incident response and what governance mechanisms address each?

- Hallucination risk producing confident false threat assessments — AI hallucinations — outputs that are confident but factually incorrect — in incident response produce false threat assessments that direct security teams toward non-existent incidents while genuine threats proceed undetected. Hallucination risk requires governance mechanisms including output validation by human reviewers for high-stakes assessments, explainability requirements for AI-generated threat classifications, and regular comparison of AI outputs against independent security assessments.

- Model decay reducing detection accuracy as telehealth environments evolve — AI detection models trained on historical threat patterns lose accuracy as telehealth architectures, device types, and attack methods evolve. A model trained on 2022 telehealth attack patterns may fail to detect 2025 attack variants that exploit newer telehealth platform features or remote monitoring device vulnerabilities. Governance requirements include regular model retraining schedules, performance monitoring against current threat baselines, and documented procedures for model retirement when decay renders accuracy insufficient.

- Training data bias creating systematic blind spots in threat detection - AI models trained on incomplete or biased data develop systematic blind spots. They consistently miss threat categories underrepresented in their training data, such as insider threats or low-and-slow data exfiltration. General IT security datasets may not capture these patterns in their healthcare-specific forms. This governance requirement calls for bias testing throughout the AI lifecycle, using healthcare-specific threat data and fairness metrics that measure detection equity across threat categories.

- Model poisoning enabling attacker manipulation of AI detection systems — Sophisticated healthcare threat actors can intentionally corrupt AI training data — feeding carefully crafted false examples that train the model to classify malicious activity as benign. This model poisoning attack creates persistent blind spots that survive model retraining unless organizations implement data integrity controls for training data sources and anomaly detection for training data submissions.

- Accountability gap when AI fails to detect or misclassifies a reportable breach — When an AI incident response system fails to classify a PHI disclosure as a reportable breach, the organization risks violating HIPAA breach notification requirements, yet an automated decision chain offers no built-in way to assign accountability for the miss. Governance mechanisms must therefore make named human reviewers accountable for AI breach classification decisions above defined impact thresholds, keeping a human in the loop for every decision with regulatory notification consequences.

- Governance committee structure and incident response planning for AI-specific failures — Healthcare organizations deploying AI incident response must establish governance committees with representation from IT security, clinical operations, compliance, legal, and ethics. They must also develop incident response plans for AI-specific failure modes, including model poisoning, adversarial manipulation, breaches missed or misclassified because of hallucinated outputs, and vendor AI platform outages. These plans must define escalation procedures, human override protocols, and communication requirements for when AI systems fail to perform as expected.
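
The decay monitoring and bias testing described above both come down to measuring detection performance per threat category and comparing it with a stored baseline. The following is a minimal sketch under stated assumptions: it presumes a labeled evaluation set whose ground truth comes from an independent security assessment, and the category names, the 0.8 recall floor, and the 0.1 tolerated drop are illustrative values, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledEvent:
    category: str    # threat category label (hypothetical taxonomy)
    is_threat: bool  # ground truth from an independent security assessment
    flagged: bool    # whether the AI detection model raised an alert


def recall_by_category(events):
    """Share of true threats the model flagged, computed per threat category."""
    totals, hits = {}, {}
    for event in events:
        if not event.is_threat:
            continue  # benign events do not affect recall
        totals[event.category] = totals.get(event.category, 0) + 1
        if event.flagged:
            hits[event.category] = hits.get(event.category, 0) + 1
    return {cat: hits.get(cat, 0) / n for cat, n in totals.items()}


def review_categories(current, baseline, min_recall=0.8, max_drop=0.1):
    """Flag categories that look like blind spots (recall below min_recall)
    or show decay (recall fell by more than max_drop versus the baseline).
    The default thresholds are illustrative, not recommended values."""
    findings = {}
    for category, recall in current.items():
        reasons = []
        if recall < min_recall:
            reasons.append("below_min_recall")
        previous = baseline.get(category)
        if previous is not None and previous - recall > max_drop:
            reasons.append("decay_vs_baseline")
        if reasons:
            findings[category] = reasons
    return findings


if __name__ == "__main__":
    events = (
        [LabeledEvent("ransomware", True, i < 9) for i in range(10)]
        + [LabeledEvent("insider_access", True, i < 2) for i in range(4)]
        + [LabeledEvent("insider_access", False, True)]  # false positive, ignored
    )
    current = recall_by_category(events)
    print(current)  # {'ransomware': 0.9, 'insider_access': 0.5}
    print(review_categories(current, {"ransomware": 0.95, "insider_access": 0.7}))
    # {'insider_access': ['below_min_recall', 'decay_vs_baseline']}
```

One limitation of this approach: a category with no labeled threats in the evaluation set does not appear in the results at all, so the coverage of the evaluation set itself needs separate review.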

How do the specific failure modes of manual telehealth incident response create patient safety and HIPAA compliance risks?

- Alert fatigue systematically degrading threat detection reliability — Security teams handling telehealth incident response manually face sustained high alert volumes, a large share of which are false positives. Alert fatigue, the progressive decline in analyst responsiveness that such volumes produce, leads analysts to process alerts faster and less carefully, increasing the probability that genuine threats are dismissed as false positives. This miss rate is not a performance failure of individual analysts; it is a predictable structural outcome of manually monitoring environments whose alert volumes exceed what humans can sustainably process.

- Cognitive biases producing inconsistent threat assessment for similar incidents — Human analysts apply contextual judgment to threat assessment, which is a strength for novel, complex threats but a source of inconsistency for routine ones. Two analysts reviewing identical telehealth access anomalies may reach different conclusions depending on their experience, their current cognitive load, and recency bias toward threat patterns they have recently investigated. This inconsistency creates gaps in threat coverage and makes it harder to produce the consistent, defensible records that HIPAA compliance documentation depends on.

- Sequential processing creating exposure windows that AI's parallel monitoring narrows — Manual incident response handles threats sequentially, completing investigation of one alert before beginning the next. Between alert generation and manual investigation, the underlying threat continues to operate unchecked. In telehealth environments that transmit PHI continuously from hundreds of remote devices, these queues can create exposure windows measured in hours or days, during which unauthorized access, data exfiltration, or ransomware deployment can proceed.

- Documentation inconsistency creating HIPAA compliance gaps — The HIPAA Security Rule requires covered entities and business associates to document security incidents and their outcomes. Manual documentation, particularly under time pressure during active incident response, tends to produce inconsistent, incomplete, or retrospectively reconstructed records that fall short of this requirement and create regulatory exposure during OCR investigations. Auditors reviewing manually documented incident response records can find gaps that automated logging would help prevent.

- Inability to keep pace with emerging healthcare AI attack methods — Healthcare-targeted attacks such as data poisoning of clinical AI systems, adversarial manipulation of remote monitoring device data streams, and model tampering in AI-driven clinical decision support tools call for analysts who understand both healthcare AI systems and adversarial machine learning. Many healthcare security teams do not currently have this combination of expertise, and manual monitoring processes cannot scale to cover these threats.

- Staff concentration risk creating single points of failure — Manual incident response programs depend heavily on a few experienced analysts. Incidents that occur when those analysts are unavailable because of illness, vacation, or turnover receive degraded response from less experienced staff or are deferred entirely. This risk is particularly acute in healthcare, where security staffing shortages are widespread and experienced healthcare security analysts are scarce. AI-driven systems keep monitoring regardless of staff availability, narrowing the coverage gaps that manual programs create, although their outputs still require qualified human review.
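
To see how sequential handling turns queue depth into exposure time, the sketch below computes how long each alert waits before anyone starts investigating it. It assumes all alerts arrive at once and each takes a fixed time to investigate; the 12 alerts, 30-minute investigation time, and four parallel workers are hypothetical numbers, and modeling parallel triage as several independent workers is a simplification. The wait is only one component of the exposure window, since it excludes the time before the alert fired and the investigation itself.

```python
import heapq


def sequential_waits(durations_hours):
    """Hours each queued alert waits before investigation starts when one
    analyst works the queue strictly in order."""
    waits, elapsed = [], 0.0
    for duration in durations_hours:
        waits.append(elapsed)
        elapsed += duration
    return waits


def parallel_waits(durations_hours, workers):
    """Same queue, first come first served, across `workers` investigators
    that each pick up the next alert as soon as they are free."""
    free_at = [0.0] * workers  # time at which each worker next becomes free
    heapq.heapify(free_at)
    waits = []
    for duration in durations_hours:
        start = heapq.heappop(free_at)  # earliest available worker
        waits.append(start)
        heapq.heappush(free_at, start + duration)
    return waits


if __name__ == "__main__":
    queue = [0.5] * 12  # hypothetical: 12 alerts, 30 minutes each
    print(max(sequential_waits(queue)))   # 5.5
    print(max(parallel_waits(queue, 4)))  # 1.0
```

With these illustrative numbers, the last alert in a single-analyst queue waits 5.5 hours, versus 1 hour when four workers share the queue; real alert volumes and investigation times will differ.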

How should healthcare organizations design a hybrid telehealth incident response model that maximizes AI benefits while maintaining appropriate human oversight?

- Task allocation framework assigning AI and human responsibilities by incident type — The hybrid model requires an explicit task allocation framework specifying which incident types AI handles autonomously, which require human review before action, and which are escalated to human control for full investigation. Routine threat detection, initial containment actions for known threat patterns, and evidence collection automation are appropriate AI responsibilities. Decisions with direct patient safety implications, breach classification with HIPAA notification consequences, and novel threats without established AI pattern matches require human review and approval before action.

- Healthcare-specific platform selection as a prerequisite for effective hybrid implementation — Generic incident response tools often fall short in healthcare environments because they are not designed for the regulatory complexity of HIPAA compliance, the patient safety stakes of clinical system disruption, the device diversity of medical IoT environments, or the third-party vendor risk of healthcare supply chains. Healthcare-specific platforms that integrate PHI protection, clinical application security, medical device risk management, and regulatory compliance documentation into a unified workflow provide the infrastructure that hybrid models require.

- Continuous AI performance monitoring maintaining hybrid model effectiveness — Hybrid models require continuous monitoring of AI detection performance so that model decay is caught before it creates detection gaps. Monitoring should track false positive rates, false negative rates, and detection latency, and should compare AI results against independent security assessments. Organizations that deploy AI systems and assume their performance stays constant without ongoing monitoring are likely to see hybrid model effectiveness degrade as their telehealth environments evolve beyond the AI's training data.

- Training security teams to validate and govern AI outputs — Human analysts in hybrid models need training specific to AI governance: how to validate AI-generated threat assessments, identify hallucination patterns, recognize symptoms of model decay, and override AI decisions when professional judgment conflicts with an AI classification. This training is distinct from traditional security analyst training, and healthcare organizations must budget for it as part of any AI-augmented security program.

- Incident response planning covering AI failure scenarios — Hybrid incident response plans must explicitly address AI failure scenarios — how the organization responds when AI-driven detection systems are offline, when model poisoning is suspected, when AI hallucinations produce actionable false threat assessments, and when vendor AI platforms experience outages. These failure scenarios require manual backup procedures, staff capacity planning for periods of degraded AI capability, and pre-established vendor communication protocols.

- Benchmarking AI performance against peer healthcare organizations — Healthcare organizations cannot assess whether their AI incident response performance is adequate without comparative context — knowing whether their false positive rates, detection latency, and breach containment times reflect strong, average, or inadequate performance relative to peer organizations facing similar telehealth attack surfaces. Censinet RiskOps™ benchmarking against the Censinet Risk Network of 100-plus provider and payer facilities provides the comparative performance context that internal measurement alone cannot supply.
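
The task allocation framework described above can be written down as an explicit routing rule, which makes the boundary between AI and human authority reviewable. The sketch below is a minimal illustration, not a Censinet feature or an established standard: the three boolean incident attributes, the route names, and the choice to send novel high-stakes threats directly to full human investigation are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AI_AUTONOMOUS = "AI acts without prior approval"
    HUMAN_APPROVAL = "AI proposes; a human approves before action"
    HUMAN_INVESTIGATION = "escalated to human control for full investigation"


@dataclass(frozen=True)
class Incident:
    known_pattern: bool               # matches an established AI detection pattern
    patient_safety_impact: bool       # could affect care delivery or medical devices
    possible_reportable_breach: bool  # may carry HIPAA breach notification duties


def route_incident(incident: Incident) -> Route:
    """Hypothetical allocation rules for a hybrid incident response model."""
    high_stakes = incident.patient_safety_impact or incident.possible_reportable_breach
    # Assumption: a novel threat that is also high stakes goes straight to
    # full human investigation rather than a simple approval step.
    if not incident.known_pattern and high_stakes:
        return Route.HUMAN_INVESTIGATION
    # Novel threats and high-stakes decisions need human approval first.
    if not incident.known_pattern or high_stakes:
        return Route.HUMAN_APPROVAL
    # Known, routine patterns: detection, initial containment, evidence collection.
    return Route.AI_AUTONOMOUS


if __name__ == "__main__":
    print(route_incident(Incident(True, False, False)).name)  # AI_AUTONOMOUS
    print(route_incident(Incident(True, False, True)).name)   # HUMAN_APPROVAL
    print(route_incident(Incident(False, True, False)).name)  # HUMAN_INVESTIGATION
```

Keeping rules like these in versioned code or configuration, rather than leaving them implicit, gives auditors a concrete record of which decisions the AI was permitted to make on its own.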

How does Censinet RiskOps™ specifically address the telehealth incident response needs that both pure AI and manual approaches leave unmet?

- Healthcare-specific design addressing the complexity generic tools cannot handle — Censinet RiskOps™ is built specifically for healthcare, addressing the combined complexity of PHI protection, HIPAA compliance, medical device risk, clinical application security, and third-party vendor oversight that generic incident response tools are not designed to manage. Matt Christensen's observation that healthcare tools must be built specifically for healthcare reflects the difficulty generic security platforms face in telehealth environments, where patient safety stakes, regulatory obligations, and clinical workflow constraints have no direct counterpart in enterprise IT.

- Tower Health efficiency demonstration: 3 FTEs returned to primary roles — At Tower Health, the implementation returned three FTEs to their primary roles, with only two FTEs required to support significantly expanded risk assessment capacity. This result quantifies the operational efficiency that healthcare-specific AI implementation can achieve, and it indicates that the hybrid model's promise of AI-enabled scale without proportional headcount growth is achievable in production healthcare environments, not only in theory.

- Third-party vendor risk assessment supporting telehealth platform security — Telehealth incident response operates within an ecosystem of video platform vendors, remote monitoring device manufacturers, EHR integrations, and cloud infrastructure providers whose security posture directly affects the organization's incident response capability. Censinet RiskOps™ streamlines vendor risk assessments for these telehealth infrastructure providers — verifying security certifications, identifying known vulnerabilities, and maintaining BAA compliance across the vendor ecosystem that telehealth operations depend on.

- AI-driven risk features with human-guided decision authority — Censinet RiskOps™ combines AI acceleration with human-guided decision-making — enabling AI to process and prioritize risk information at scale while routing decisions with clinical implications, regulatory consequences, or novel threat characteristics to human reviewers with final authority. This architecture implements the hybrid model's core principle — AI for speed and scale, humans for judgment and accountability — within a purpose-built healthcare workflow rather than requiring organizations to assemble hybrid capabilities from separate general-purpose tools.

- Cybersecurity benchmarking providing relative performance context — Censinet RiskOps™'s cybersecurity benchmarking capability enables healthcare organizations to assess their telehealth incident response performance relative to peer organizations — identifying whether their detection times, false positive rates, and response capabilities reflect best practice or areas for improvement. This benchmarking context supports evidence-based investment decisions for telehealth incident response capability improvement and enables organizations to demonstrate to regulators and board members that their security posture reflects industry standards.

- Collaborative risk network enabling shared telehealth threat intelligence — The Censinet Risk Network of 100-plus healthcare providers and payers creates a collaborative threat intelligence environment in which telehealth-specific incident patterns detected by one organization can inform response preparation across the network. This shared intelligence model addresses one of the most significant structural advantages that sophisticated healthcare threat actors hold over individual organizations — the ability to observe and adapt to multiple organizations' defenses simultaneously while each organization can only observe its own network.