Algorithmic Accountability: Liability Frameworks for AI-Driven Clinical Decisions

Post Summary

Who’s responsible when AI makes a mistake in healthcare?

AI is reshaping medical decisions, from diagnosing diseases to recommending treatments. But accountability remains a major concern. Physicians, hospitals, and AI developers all play roles, yet current liability frameworks leave gaps:

- Clinicians risk lawsuits for both misusing AI and not using it when it becomes standard practice.

- Hospitals bear institutional risks but often lack robust AI monitoring processes.

- Vendors limit their liability through contracts, leaving others to shoulder most legal exposure.

Key principles for accountability include:

- Human oversight: Clinicians must evaluate AI recommendations critically.

- Transparency: AI systems should disclose their error rates and limitations.

- Bias mitigation: Validate AI tools against local patient demographics.

- Traceability: Document AI usage and decisions for legal and safety purposes.

With malpractice claims involving AI up 14% between 2022 and 2024, stronger governance, regular audits, and explicit liability frameworks are urgently needed to manage risks and ensure safe AI adoption in healthcare.

Core Principles of AI Accountability in Healthcare

Accountability in healthcare AI revolves around four key principles that clarify responsibility when algorithms impact patient care. These principles help define roles in AI-driven healthcare and shape legal accountability frameworks.

Human oversight plays a critical role. AI is designed to assist, not replace, clinical decision-making. Physicians must carefully evaluate AI recommendations to avoid liability for errors. As the Physician AI Handbook puts it: "AI is a tool, not a shield" [3]. This reinforces the idea that clinicians remain the ultimate decision-makers, even when AI is involved.

Transparency and explainability tackle the challenge of AI's often opaque nature. When AI systems lack clarity, it becomes harder to determine whether errors were predictable - a crucial factor in legal claims [6]. Studies show that sharing AI error rates with jurors can significantly reduce perceived physician negligence if an AI detects something the clinician misses [3]. For example, mock-juror research found that a "double-review" workflow - where clinicians document their independent evaluations before and after considering AI input - lowered perceived negligence from 74.7% to 52.9% [3].
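
The double-review workflow described above can be sketched in code. This is a minimal illustration, not a reference implementation: the class `DoubleReview` and all of its field and method names are hypothetical, and a real system would live inside the EHR or reading workstation. The point it shows is ordering: the independent assessment is locked in before the AI output can be viewed.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DoubleReview:
    """Hypothetical record of one double-review read (names are illustrative)."""
    case_id: str
    pre_ai_assessment: Optional[str] = None
    pre_ai_time: Optional[datetime] = None
    ai_output: Optional[str] = None
    post_ai_assessment: Optional[str] = None
    post_ai_time: Optional[datetime] = None

    def record_pre_ai(self, assessment: str) -> None:
        # The independent read only counts if it happens before the AI is seen.
        if self.ai_output is not None:
            raise RuntimeError("AI output already revealed; read is not independent")
        self.pre_ai_assessment = assessment
        self.pre_ai_time = datetime.now(timezone.utc)

    def reveal_ai(self, ai_output: str) -> str:
        # Refuse to show the AI result until the independent read is documented.
        if self.pre_ai_assessment is None:
            raise RuntimeError("Record the independent assessment first")
        self.ai_output = ai_output
        return ai_output

    def record_post_ai(self, assessment: str) -> None:
        # The second read documents the clinician's conclusion after AI input.
        if self.ai_output is None:
            raise RuntimeError("No AI output has been reviewed yet")
        self.post_ai_assessment = assessment
        self.post_ai_time = datetime.now(timezone.utc)

    def is_complete(self) -> bool:
        return None not in (
            self.pre_ai_assessment, self.ai_output, self.post_ai_assessment
        )


review = DoubleReview(case_id="case-001")
review.record_pre_ai("No acute findings on independent read")
review.reveal_ai("AI flag: possible nodule, right upper lobe")
review.record_post_ai("Nodule confirmed on second look; follow-up CT ordered")
print(review.is_complete())
```

Enforcing the order in software, rather than relying on habit, is one way to make the timestamps in the record credible evidence that the first read was independent.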

Bias mitigation is essential for both clinical effectiveness and legal protection. Using AI tools outside their validated population - such as employing pediatric algorithms for adults or applying tools trained on one racial group to another - introduces serious risks [3]. Algorithms that disproportionately affect patients based on race, disability, or other protected categories may lead to enforcement actions under civil rights laws, potentially exposing institutions to liability beyond individual malpractice claims [1].

Traceability and monitoring ensure accountability through detailed documentation. Clinicians must record the specific AI system used, including its version and FDA clearance status, in patient records. This should also include their reasoning for either following or overriding AI recommendations [3]. Such documentation is crucial in legal disputes. Additionally, healthcare organizations are responsible for post-deployment monitoring to detect performance drift - when an AI's accuracy declines over time compared to its validation data [3].
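
As a rough sketch of what such a documentation entry might contain, the snippet below models one AI usage record. The schema is an assumption made for illustration: `AIUsageRecord`, `make_record`, `to_note_json`, and every field name are hypothetical, and the tool name and values in the example are invented. It captures the items named above: the AI system, its version and FDA status, and the clinician's reasoning for following or overriding the recommendation.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

ALLOWED_ACTIONS = {"followed", "overridden"}


@dataclass(frozen=True)
class AIUsageRecord:
    """Hypothetical chart entry documenting one AI-assisted decision."""
    patient_ref: str
    tool_name: str
    tool_version: str
    fda_status: str          # e.g. "510(k) cleared", "De Novo", "not FDA-regulated"
    ai_recommendation: str
    clinician_action: str    # "followed" or "overridden"
    rationale: str
    recorded_at: str         # ISO 8601 timestamp, UTC


def make_record(patient_ref, tool_name, tool_version, fda_status,
                ai_recommendation, clinician_action, rationale):
    # Reject entries that would be useless later: unknown action or no reasoning.
    if clinician_action not in ALLOWED_ACTIONS:
        raise ValueError(f"clinician_action must be one of {sorted(ALLOWED_ACTIONS)}")
    if not rationale.strip():
        raise ValueError("A rationale is required whether the AI was followed or overridden")
    return AIUsageRecord(
        patient_ref=patient_ref,
        tool_name=tool_name,
        tool_version=tool_version,
        fda_status=fda_status,
        ai_recommendation=ai_recommendation,
        clinician_action=clinician_action,
        rationale=rationale,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_note_json(record: AIUsageRecord) -> str:
    # Serialize for storage alongside the patient note or in an audit log.
    return json.dumps(asdict(record), indent=2)


record = make_record(
    patient_ref="MRN-0001",
    tool_name="ExampleCAD",            # invented tool name
    tool_version="2.3.1",
    fda_status="510(k) cleared",
    ai_recommendation="Flag: possible nodule",
    clinician_action="overridden",
    rationale="Finding matches known artifact on prior imaging",
)
print(to_note_json(record))
```

Making the record immutable (`frozen=True`) and requiring a rationale in both directions reflects the idea that the entry is meant to serve as evidence, not just as a log line.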

| Accountability Principle | Legal Defense Requirement | Organizational Action |
|---|---|---|
| Human Oversight | Document independent clinical assessment before and after AI input | Implement double-review workflows [3] |
| Transparency | Record AI version, error rates, and limitations in patient notes | Disclose AI failure modes to clinicians [3] |
| Bias Mitigation | Validate AI performance on local patient demographics | Test tools against institutional patient data before deployment [1] |
| Traceability | Maintain records of when AI recommendations were followed or overridden | Establish clear override protocols and documentation standards [3] |

These principles lay the groundwork for determining liability and managing risks in AI-supported healthcare. They also provide a framework for understanding how responsibility is assigned as AI continues to shape clinical decision-making.

US Regulatory Landscape for Healthcare AI

Key US Regulations Addressing Healthcare AI

In the United States, healthcare organizations navigate a complex web of federal and state regulations governing the use of AI in clinical settings. These rules are constantly evolving, shaping how accountability and liability are managed when AI is used in medical decision-making.

A key player in this regulatory space is the FDA, which oversees AI and machine learning algorithms classified as medical devices under the Software as a Medical Device (SaMD) framework. Since 1995, the FDA has authorized more than 1,200 AI/ML-enabled medical devices, and its public list had already passed 690 entries by late 2023 [4][5]. Most tools for clinical decision-making follow the 510(k) pathway, which requires showing substantial equivalence to an existing device. Higher-risk AI systems require Premarket Approval (PMA), while novel low- to moderate-risk devices with no predicate can use the De Novo pathway. For example, in 2018, the FDA authorized IDx-DR via the De Novo pathway, making it the first autonomous AI diagnostic system for diabetic retinopathy, one that produces a screening result without a clinician interpreting the image [5].

The 21st Century Cures Act provides a carve-out for certain clinical decision support tools, exempting them from FDA regulation if they meet specific criteria. These include transparency about how recommendations are generated, allowing clinicians to independently review results, and avoiding the processing of medical images or signals [5]. However, this exemption was intended for simpler tools and not for large-scale predictive algorithms.

Another critical regulation is Section 1557 of the Affordable Care Act, updated in 2024 by the Department of Health and Human Services (HHS). This rule prohibits algorithmic discrimination in federally funded health programs, holding healthcare entities accountable for disparate impacts based on race, age, or sex - even if the discrimination is unintentional [5]. This expands liability beyond traditional malpractice claims.

The ONC HTI-1 Final Rule, finalized in December 2023, emphasizes transparency and risk management for predictive decision support tools. Developers must disclose summaries of training data and potential biases, ensuring accountability in predictive models [4]. Meanwhile, HIPAA remains a cornerstone for protecting health information. Organizations whose AI systems process protected health information (PHI) face penalties of up to $1.9 million per violation category per year for improper data handling, including during model training [5].

At the state level, laws like the Colorado AI Act, originally scheduled to take effect February 1, 2026 and later postponed to June 30, 2026, further refine accountability. This law requires healthcare providers using high-risk AI tools to implement risk management programs and conduct annual impact assessments. Charles Gass and Hailey Adler from Foley & Lardner LLP remarked:

"The Act represents a significant shift in AI regulation, particularly for health care providers who increasingly rely on AI-driven tools for patient care, administrative functions, and financial operations" [7].

The act grants enforcement authority exclusively to the State Attorney General, with no private right of action.

Regulators are also confronting AI-enabled fraud. In June 2025, the Department of Justice’s National Health Care Fraud Takedown uncovered a scheme involving AI-generated fake recordings of Medicare beneficiaries consenting to products, leading to $703 million in fraudulent claims submitted to Medicare and Medicare Advantage plans [9][10]. Federal agencies now rely on AI tools of their own to detect emerging fraud patterns.

Federal-state dynamics add another layer of complexity. A December 2025 Executive Order, "Ensuring a National Policy Framework for Artificial Intelligence", created an AI Litigation Task Force to address conflicts between state accountability measures and federal innovation policies [9][10]. Danielle Barbour from Kiteworks highlighted the importance of these developments:

"State AI legislation is not a future concern - it is the defining compliance event of 2026" [8].

These regulations are not just about safety and data protection - they also define liability in AI-driven clinical decisions. For healthcare organizations, understanding these rules is critical for ensuring compliance and managing risks.

Comparative Analysis of Regulatory Requirements

Healthcare organizations must juggle multiple regulations, each with unique compliance demands. These overlapping requirements necessitate careful coordination.

| Regulation | Focus | Transparency Mandate | Audit Requirement | Applicability |
|---|---|---|---|---|
| FDA SaMD Framework | Safety and effectiveness of medical software | High (Premarket review) | Post-market surveillance | AI intended to diagnose or treat disease |
| ONC HTI-1 Rule | Transparency for predictive DSI | High (Training data summaries) | Risk management documentation | Certified Health IT / Predictive Decision Support |
| Colorado AI Act | Algorithmic discrimination | Public notice of AI use | Annual Impact Assessments | High-risk AI making "consequential decisions" |
| HIPAA | Data privacy and security | Disclosure of PHI use | Regular security audits | All covered entities processing PHI |
| Section 1557 (ACA) | Anti-discrimination | Disclosure of AI's role in adverse decisions | Evaluation of disparate impact | Federally funded health programs |

Importantly, compliance with one regulation does not ensure compliance with others. For instance, an AI tool cleared by the FDA for safety may still violate Section 1557 if it disproportionately affects protected groups. Similarly, HIPAA compliance for data security does not address the Colorado AI Act’s requirements for assessing algorithmic discrimination.

The regulatory environment is shifting rapidly. By March 2026, over 35 states had enacted AI legislation, often moving faster than federal efforts to regulate training data transparency and healthcare decisions [8]. This poses challenges, as 78% of organizations cannot validate data before it enters AI training pipelines, and 77% cannot trace the origin of their training data [8]. These gaps leave organizations vulnerable to compliance risks across multiple regulatory frameworks.

Liability Assignment Models for AI-Driven Clinical Decisions

AI Accountability Framework: Stakeholder Liability and Responsibilities in Healthcare

When AI influences patient care, figuring out who holds legal responsibility can get tricky. The responsibility spans clinicians, vendors, and healthcare organizations, each facing its own set of challenges. This section explores how accountability must be shared across these stakeholders to ensure patient safety.

Clinician-Centric Liability

Physicians carry the primary responsibility for patient care, with AI acting as a support tool rather than replacing clinical judgment. This setup creates a delicate balance - clinicians can face liability both for misusing AI and for failing to use it once it becomes standard practice.

One major risk is automation bias, where clinicians overly rely on AI without critical evaluation. Teresa Younkin, CEO of Mosaic Life Tech, highlights this concern:

"A clinician who 'blindly trusts a flawed AI' may not have met what a reasonably competent physician would have done in the same circumstances" [1].

Failing to question or override AI recommendations when clinical signs point elsewhere can also be seen as both a clinical and governance failure.

Mock-juror research underlines these risks. A "double-review" process, in which clinicians review cases both before and after AI input, reduced perceived negligence from 74.7% to 52.9% [3]. Jurors also tend to penalize clinicians more harshly when the clinician misses an issue that the AI flagged [3].

As AI tools gain wider acceptance and become part of standard care, clinicians face a dual risk: liability for not using AI and for ignoring its accurate recommendations [3]. This underscores the need for thorough documentation when deviating from AI suggestions.

Of course, liability doesn’t stop with clinicians - it also extends to those who create and sell AI tools.

Developer and Vendor Accountability

AI developers and vendors may be held responsible for issues like flawed training data or software bugs. However, contractual terms often shift much of this risk to healthcare organizations through liability caps and indemnification clauses [1].

Vendors frequently rely on the "learned intermediary" doctrine, arguing that it’s up to physicians to manage AI-related risks [3]. The opaque nature of many AI models makes it difficult to hold developers accountable under traditional product liability rules [6]. Plaintiffs often struggle to prove that errors were "reasonably foreseeable" or to suggest a "safer alternative design", both of which are critical in such cases [6].

State enforcement has started to play a role in holding vendors accountable. In September 2024, the Texas Attorney General's Office settled with Pieces Technologies, Inc., following an investigation into the accuracy of its AI-generated clinical summaries [1]. A settlement is not binding precedent, but this one showed that consumer protection laws can be used to address AI-related issues.

FDA clearance through the 510(k) pathway provides some regulatory defense for vendors, but it doesn’t eliminate liability or guarantee that tools meet clinical standards for specific patients [1]. The FDA's guidance on Predetermined Change Control Plans (PCCPs), finalized in August 2025, allows pre-specified AI updates without new submissions but exposes vendors to ongoing liability if performance declines [3].

Healthcare organizations also face their own challenges in managing AI.

Institutional and Organizational Governance

Hospitals and healthcare organizations may be held liable for corporate negligence, poor credentialing, or inadequate oversight of AI systems [1]. Legal experts are increasingly advocating for enterprise liability, which would make hospitals broadly responsible for injuries caused by negligence within their systems - provided they have access to the necessary monitoring data [11].

Alarmingly, only 61% of hospitals validate AI performance using local patient data before implementation [1]. This gap in oversight poses significant risks. For example, a 2019 study led by Ziad Obermeyer revealed that an algorithm developed by Optum was less likely to refer Black patients to high-risk care programs than white patients with similar needs. This bias was discovered through external research, not internal monitoring [1].

Healthcare organizations also face liability through various channels, including CMS quality audits, OCR investigations into algorithmic bias, FDA reporting violations, and state Attorney General actions [1].

| Party | Primary Liability Basis | Key Defense/Limitation |
|---|---|---|
| Physician | Breach of standard of care; failure to exercise judgment | Use of AI as a support tool; double-review protocols |
| Vendor | Product liability (design defects, failure to warn) | Learned intermediary doctrine; liability caps |
| Hospital | Corporate negligence; poor oversight | Local validation, robust governance, training |

If developers withhold critical performance data, making it impossible for hospitals to monitor AI effectively, liability may shift from the institution to the developer [11]. This creates a strong incentive for transparency, though how this plays out in court remains to be seen.

Understanding these liability models is essential for building effective risk management strategies for AI in healthcare.

Case Studies: AI Errors in Clinical Practice

Looking at real-world AI failures in healthcare reveals why clear accountability and managing third-party AI risk are absolutely necessary. These examples, ranging from diagnostic mishaps to insurance misjudgments, underscore how fragmented responsibility can lead to serious consequences.

Diagnosis Errors and Liability Implications

IBM's Oncology Expert Advisor (OEA), implemented at MD Anderson Cancer Center, serves as a stark example. The system issued unsafe cancer treatment recommendations because it was trained on a limited set of synthetic (hypothetical) cancer cases instead of actual patient data. This created a dangerous gap between the AI's suggestions and the realities of clinical care, highlighting a broader issue: algorithms often fail to adapt to the fast-changing nature of medical scenarios [2].

Another prevalent issue is alert fatigue. Some AI systems integrated with electronic health records bombard physicians with false alerts, leading them to dismiss even critical warnings. This undermines the very purpose of these tools and exposes the fragility of AI-driven decision-making in high-stakes environments [2].

Failures in diagnostic and treatment recommendations further reveal the risks tied to relying too heavily on AI.

Treatment Recommendations Gone Wrong

In November 2023, UnitedHealthcare was named in a class action lawsuit over its use of the nH Predict AI model [12][14]. One case involved Gene Lokken, a 91-year-old whose doctor recommended extended physical therapy in a skilled nursing facility after a leg fracture. According to the complaint, the AI predicted a discharge date just 2.5 weeks later, contradicting the therapists' judgment. Lokken remained in the facility for a year until his death, racking up $150,000 in additional costs. The complaint alleges that the AI tool had a 90% error rate, a figure based on how often its denials were overturned on review. Yet only about 0.2% of policyholders typically appealed these decisions [14].

"By replacing licensed practitioners with unchecked AI, UHC is telling its patients that they are completely interchangeable with one another and undervaluing the expertise of the physicians devoted to key elements of care." – Zarrina Ozari, Senior Associate, Clarkson Law [14]

In another instance, Cigna faced legal action in 2023 over its use of an automated claims-review system called PXDX [13]. This tool automatically flagged and denied insurance claims, including those for essential tests such as those used to diagnose autonomic nervous system disorders. Claims were allegedly rejected without proper patient file reviews, with medical directors merely rubber-stamping the system's decisions. Reports also surfaced of employees being penalized for challenging AI-generated recommendations, turning the tool from a supportive asset into an inflexible rulebook that sidelined human expertise.

A particularly chilling case came to light in January 2020, when Practice Fusion (now owned by Allscripts) agreed to pay $145 million to resolve investigations by the U.S. Department of Justice. The company admitted to accepting $1 million in kickbacks from Purdue Pharma to create a software alert encouraging doctors to prescribe extended-release opioids. Between 2016 and 2019, this alert was triggered 230 million times, ignoring evidence-based guidelines and driving tens of thousands of additional opioid prescriptions [15].

"Purdue's drug marketers paid to invade the sanctity of the physician-patient relationship so that it could influence medical decisions and increase prescriptions of its most potent opioids." – United States Attorney's Office, District of Vermont [15]

These examples show how AI errors can harm patients and expose organizations to severe legal and financial risks. They emphasize the need for proper governance and constant oversight to minimize the dangers AI poses in clinical settings.

Best Practices for Managing AI Risk Using Censinet

The examples above highlight how AI missteps in healthcare often result from gaps in governance, weak vendor oversight, and insufficient human participation in decision-making. These challenges emphasize the importance of robust risk management frameworks - something Censinet’s platform is designed to address. With the right tools, healthcare organizations can ensure accountability and reliability in their AI systems.

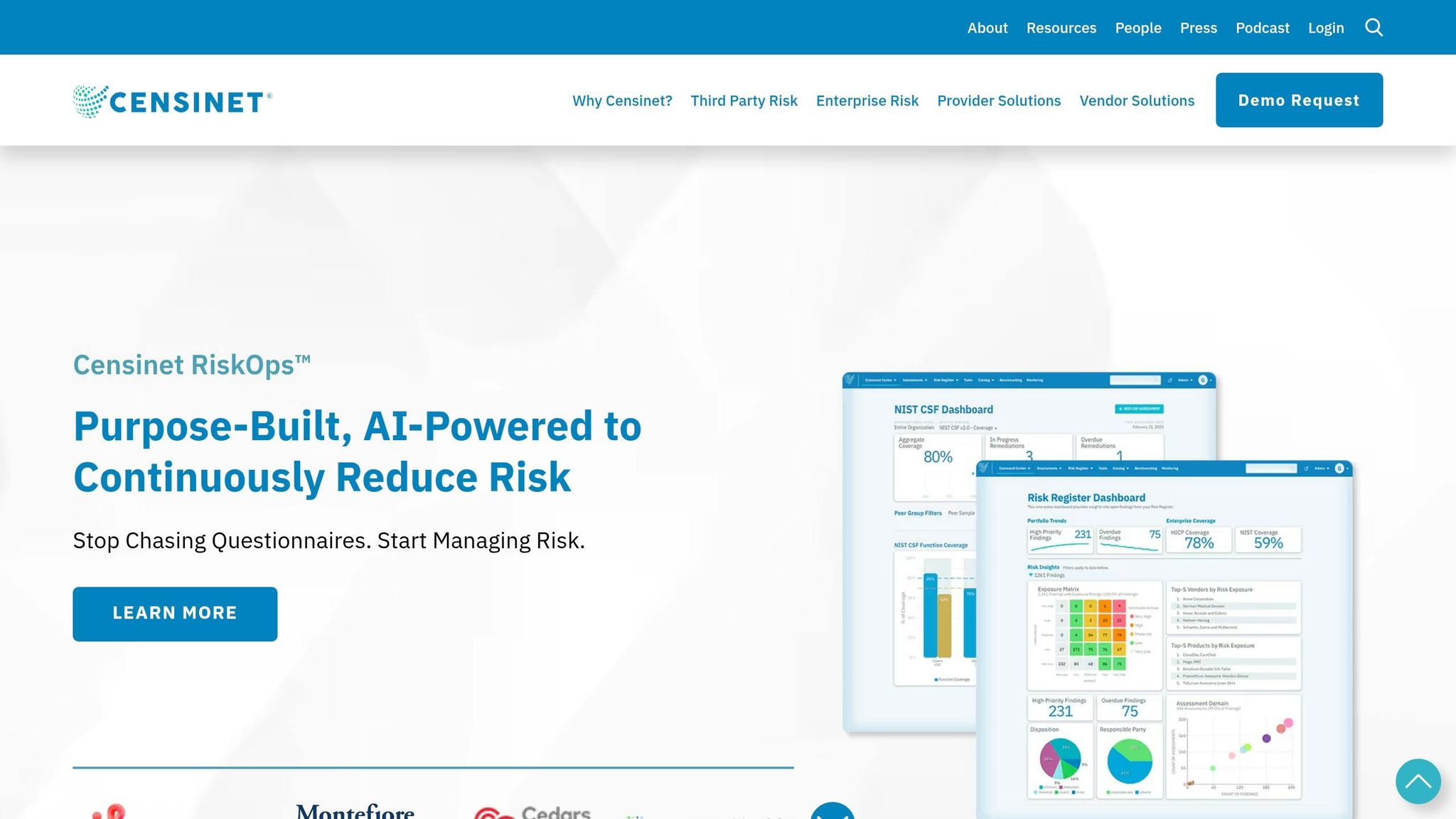

Using Censinet RiskOps™ for AI Governance

Censinet RiskOps™ acts as a centralized platform for managing all aspects of AI-related policies, risks, and operational tasks. It automates risk assessments and enforces strict validation protocols before any AI system is used in clinical settings. This includes critical evaluations, such as examining the diversity of training data and assessing performance across different demographic groups - key steps in ensuring fairness and accuracy before deployment [16].

The platform’s command center provides real-time insights into AI system performance. This enables continuous monitoring to detect issues like algorithm degradation or emerging biases before they affect patient outcomes [16][17]. Additionally, healthcare organizations can use the platform to establish standardized audit trails and clear oversight mechanisms, ensuring responsibilities are well-defined and traceable [17].

Human-in-the-Loop Oversight with Censinet AI

Censinet AI ensures a balance between automation and human oversight by embedding human involvement into critical stages of risk assessment. Configurable rules and review processes allow risk teams to maintain control, ensuring that automation supports decision-making rather than replacing it entirely.

The platform acts as a central coordinator for AI governance, directing key findings and risks to appropriate stakeholders, such as members of AI oversight committees. This coordination across Governance, Risk, and Compliance (GRC) teams ensures that the right issues are addressed by the right individuals promptly. This structure fosters ongoing accountability and oversight for AI systems [16]. Alongside this, managing vendor risks is equally crucial.

Managing AI Vendor Risk with Censinet Connect™

Censinet Connect™ simplifies vendor evaluations by automating security questionnaires, summarizing integration details, and producing detailed risk reports. This functionality enables organizations to conduct thorough assessments of AI vendors and establish clear liability terms within licensing agreements [6]. By doing so, healthcare organizations gain a comprehensive understanding of the systems they deploy and how vendor performance will be monitored over time.

Implementing Liability Frameworks in Healthcare Organizations

Creating a liability framework for AI-driven clinical decisions requires a clear, structured approach. This involves assigning specific responsibilities and maintaining thorough documentation. Healthcare organizations face a dual risk: they can be held liable both for misusing AI and for failing to adopt AI tools that become the standard of care over time [3]. This dual exposure makes a systematic framework essential.

Adding to the complexity is the uneven distribution of liability. Hospitals and physicians bear most of the responsibility for harm caused by AI-assisted decisions, highlighting a significant imbalance in risk [1]. To counter this, organizations must establish strong governance and documentation practices.

Conducting Algorithmic Impact Assessments (AIAs)

Algorithmic Impact Assessments (AIAs) are a crucial first step. These assessments pinpoint potential risks and clarify accountability before deploying AI tools in clinical settings. They evaluate how algorithms perform across diverse patient groups, ensuring that training data reflects the demographics served by the organization. When procuring AI systems, it’s critical to demand evidence of performance across various populations and proof of bias evaluation [19].
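
One way to check performance across patient groups is to compute the same metric per group on local validation data and flag large gaps. The sketch below uses sensitivity (the share of true positives the tool detects) as the metric. The function names, the input format, and the 0.05 gap threshold are all assumptions chosen for illustration; an actual assessment would pick metrics and thresholds with clinical and statistical input, and would account for small sample sizes.

```python
from collections import defaultdict


def sensitivity_by_group(rows):
    """Compute sensitivity per group.

    rows: iterable of (group, y_true, y_pred) tuples with 0/1 labels.
    Groups with no positive cases are omitted, since sensitivity is undefined.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in rows:
        if y_true == 1:
            positives[group] += 1
            true_positives[group] += int(y_pred == 1)
    return {g: true_positives[g] / positives[g] for g in positives}


def flag_gaps(per_group, max_gap=0.05):
    """Return groups whose sensitivity trails the best group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, s in per_group.items() if best - s > max_gap)


# Invented validation data: (group, actual condition, AI prediction)
validation = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 1), ("group_b", 1, 0),
    ("group_a", 0, 0), ("group_b", 0, 0),
]

per_group = sensitivity_by_group(validation)
print(per_group)
print(flag_gaps(per_group))
```

The per-group figures from a run like this can double as the baseline metrics that later monitoring is compared against.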

AIAs also set baseline performance metrics, which are vital for ongoing monitoring. By documenting validation outcomes, staff training records, and decision-making processes, organizations create a strong foundation for defending against malpractice claims [1]. This is increasingly important as malpractice claims involving AI tools rose by 14% in 2024 compared to 2022 [3].

Establishing AI Governance Committees

AI governance committees play a key role in overseeing the development, deployment, and monitoring of AI systems. These committees should include representatives from various fields: healthcare providers, AI experts, ethicists, legal advisors, data scientists, patient advocates, and compliance officers [18][19]. This diverse group ensures that decisions are informed by clinical, technical, ethical, and regulatory perspectives.

Core responsibilities of these committees include drafting and approving AI policies, conducting ethical reviews, approving tools before clinical use, and maintaining open communication with stakeholders [18]. They should also report regularly to the fiduciary board about AI outcomes and any adverse events [19]. The Joint Commission emphasizes the importance of such structures:

"Governance creates accountability which will help to drive the safe use of AI tools" [19].

Before formalizing governance, organizations should conduct AI audits to determine which staff members are already using AI, the platforms they’re accessing, and the purposes of these tools - whether clinical or administrative [18]. This initial evaluation ensures that oversight is comprehensive and no gaps exist. Regular audits complement governance efforts by continuously monitoring AI use and performance.

Routine Audits and Continuous Monitoring

Regular audits are essential for identifying issues like performance drift or new biases before they affect patient care. The frequency of these audits should match the risk level of the system - high-risk tools require more frequent evaluations and retraining due to their clinical importance [21]. These audits reinforce the earlier risk assessments and governance measures.
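
A simple way to watch for performance drift is to compare accuracy over a rolling window of recent, outcome-confirmed cases against the baseline established at validation. The sketch below is one possible approach, not a prescribed method: `DriftMonitor`, the window size, and the tolerance are hypothetical values picked for illustration, and higher-risk tools would warrant tighter settings.

```python
from collections import deque


class DriftMonitor:
    """Hypothetical rolling-accuracy check against a validation baseline."""

    def __init__(self, baseline_accuracy, window=200, tolerance=0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # deque with maxlen drops the oldest outcome once the window is full.
        self.outcomes = deque(maxlen=window)

    def record(self, ai_correct: bool) -> None:
        # Call once per case after the true outcome is confirmed.
        self.outcomes.append(1 if ai_correct else 0)

    def rolling_accuracy(self):
        # Wait for a full window so a few early cases cannot trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        accuracy = self.rolling_accuracy()
        return accuracy is not None and (self.baseline - accuracy) > self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.90, window=10, tolerance=0.05)
for _ in range(10):
    monitor.record(True)
print(monitor.drifted())   # full window, all correct

for _ in range(3):
    monitor.record(False)  # three recent misses push out three older hits
print(monitor.rolling_accuracy(), monitor.drifted())
```

A flag from a check like this would feed the audit process rather than replace it: someone still has to decide whether the drop reflects a changed patient population, a data pipeline problem, or the model itself.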

Incident response plans should also be in place, detailing how AI errors are reported, documented, and addressed. These plans should include protocols for suspending an algorithm when necessary [18][20]. Proper documentation strengthens the audit trail, which is critical for legal defense [3]. Ultimately, physicians remain responsible for patient care and cannot delegate medical judgment to AI systems [4]. A well-documented framework ensures that clinical decisions are defensible and grounded in sound judgment.

Conclusion

AI-powered clinical decisions are changing the face of healthcare, but they bring with them a tangle of liability issues that demand immediate attention. Right now, the bulk of legal responsibility falls on hospitals and physicians, while AI vendors often limit their liability through contracts that cap their exposure at minimal levels [1]. This uneven distribution of risk highlights the urgent need for clear liability frameworks to ensure organizations can navigate these challenges effectively.

Healthcare providers walk a fine line when it comes to liability. They face legal risks both for misusing AI tools and for not adopting them as these tools become the standard of care. The stakes are high, with AI-related malpractice claims rising by 14% between 2022 and 2024 [1][3]. To mitigate these risks, robust governance structures are essential. Key defenses include thorough documentation of local validation efforts, staff training, and ongoing monitoring - all of which can serve as critical safeguards in the event of adverse outcomes [1].

The role of clinicians remains central. As Teresa Younkin, CEO of Mosaic Life Tech, aptly puts it: "The AI tool doesn't transfer clinical accountability. It adds a layer of decision support that the physician is still responsible for evaluating" [1]. Physicians maintain ultimate responsibility for patient care, with AI serving as an aid rather than a replacement for their clinical judgment.

Beyond reducing legal risks, clear accountability frameworks encourage responsible use of AI. Without them, fear of lawsuits could hinder the adoption of tools that have the potential to save lives [6]. By establishing measures such as algorithmic impact assessments, oversight committees, and regular audits, healthcare organizations can create an environment where innovation thrives alongside patient safety.

Striking a balance between progress and accountability is crucial. By acknowledging AI's limitations, introducing double-review processes, validating tools for local patient populations, and ensuring human oversight at every stage, healthcare providers can unlock AI's potential while safeguarding patients, clinicians, and institutions from avoidable harm.

FAQs

How should liability be shared between clinicians, hospitals, and AI vendors?

Liability for clinical decisions influenced by AI is a shifting landscape. At present, the bulk of responsibility typically rests with clinicians and hospitals, largely because existing laws and contracts often protect AI vendors from direct accountability. However, there’s growing support among experts for a model of shared liability. This approach would distribute responsibility across developers, healthcare providers, and regulators, ensuring a more balanced system.

One concept gaining traction is "technological due care." This framework emphasizes the importance of all stakeholders - whether designing, using, or overseeing AI systems - taking proactive steps to manage risks. The ultimate goal is to promote safety and accountability in healthcare settings where AI plays a role.

What documentation best protects clinicians when using AI in patient care?

Comprehensive documentation plays a critical role in safeguarding clinicians when incorporating AI into patient care. Keeping thorough records of how AI systems are used, the validation processes they undergo, and the level of clinician oversight involved is essential. This not only ensures accountability but also helps meet legal requirements and showcases a commitment to using AI responsibly in clinical environments.

How can hospitals detect and respond to AI bias or performance drift?

Hospitals have several ways to tackle AI bias and performance drift, ensuring these systems remain reliable and fair. One key approach is continuous monitoring paired with regular validation, which helps spot biases or inaccuracies, particularly when dealing with vulnerable populations.

To stay ahead of potential issues, hospitals can take proactive steps like retraining AI models, enhancing the quality of input data, and educating staff about the limitations of AI tools. It's also crucial to establish clear roles for clinicians, data scientists, and compliance teams. This ensures a coordinated and timely response, ultimately protecting both accuracy and patient safety.