Checklist for PHI Breach Response

Post Summary

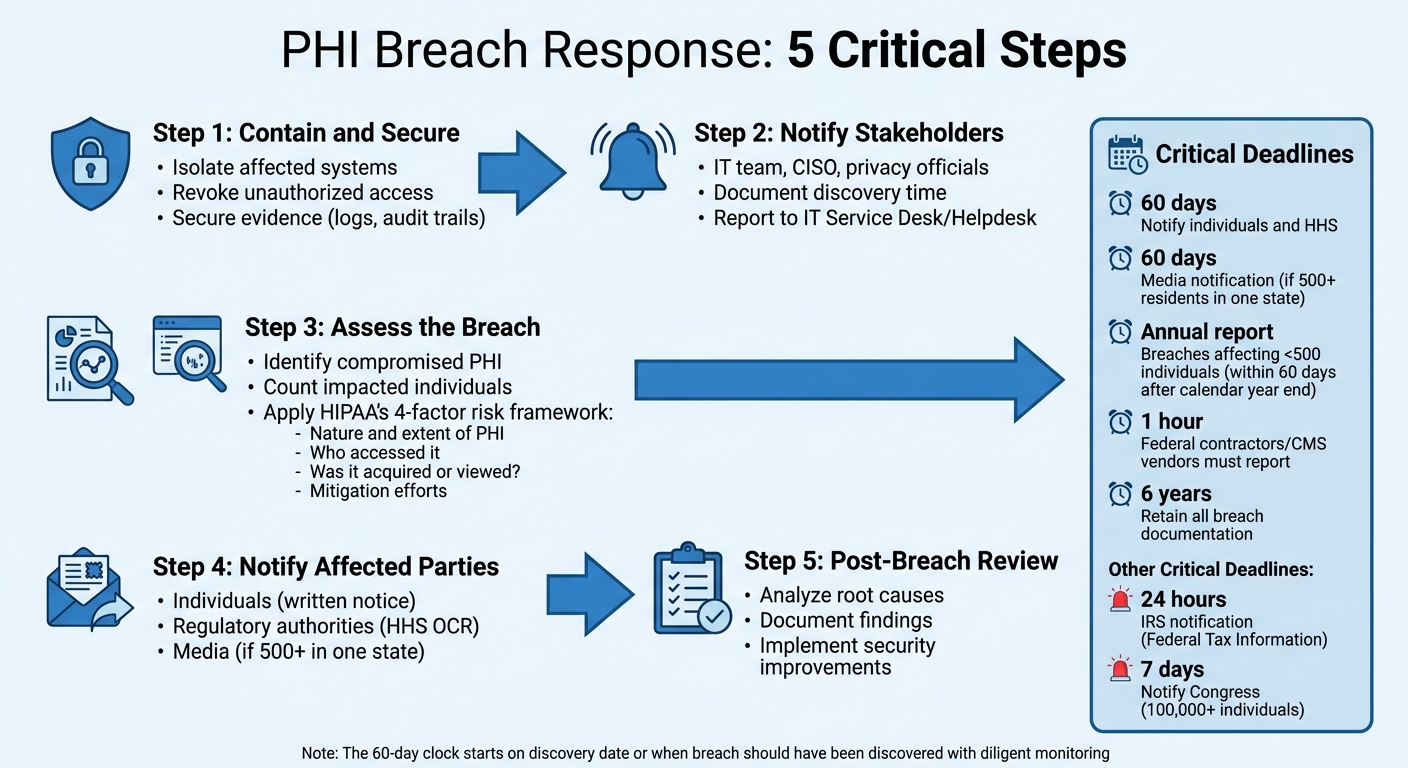

When a Protected Health Information (PHI) breach occurs, you must act quickly to minimize damage and meet federal regulations. Here's the essential process:

- Contain and Secure: Immediately isolate affected systems, revoke access, and secure evidence like logs and audit trails.

- Notify Stakeholders: Inform key personnel, including your IT team, CISO, and privacy officials. Document the discovery time for compliance.

- Assess the Breach: Identify compromised PHI, impacted individuals, and assess risks using HIPAA’s four-factor framework.

- Notify Affected Parties: Notify individuals, regulatory authorities, and media (if required) within the required timeframes (no later than 60 days after discovery under HIPAA).

- Post-Breach Review: Analyze what went wrong, document findings, and implement stronger security measures to prevent future breaches.

Key Deadlines:

- Notify individuals, and HHS for breaches affecting 500 or more people: 60 days.

- Notify Congress (federal agencies, for breaches affecting 100,000+ individuals): 7 days.

- Notify IRS (for Federal Tax Information): 24 hours.

A clear plan ensures compliance, protects patients, and reduces chaos during incidents. Tools like Censinet RiskOps™ can simplify documentation and risk management.

5-Step PHI Breach Response Process with HIPAA Notification Deadlines


Immediate Containment and Security Actions

When you suspect a PHI breach, acting quickly is critical to limit damage and ensure compliance. The first few hours after discovery are vital for containing the situation and reducing potential risks.

Detect and Contain the Breach

The top priority is stopping the breach from spreading. Secure affected systems immediately and revoke any unauthorized access. Even if you're unsure whether a breach has occurred, treat any indication of one as an urgent incident.

"The team does not need confirmation of a breach to begin the breach response process – they should treat incidents as breaches as soon as the investigation reveals that PII, PHI, or FTI was jeopardized." - CMS Breach Response Handbook [1]

If shutting down a server requires approval from a Business Owner, don't wait for it: isolate the compromised segments and restrict access to the exposed data while approval is pending.

Notify Key Stakeholders

As soon as you suspect a breach, report it to your IT Service Desk or Helpdesk to initiate the formal response process. Record the exact time the breach was discovered to meet HIPAA reporting requirements. Notify your Incident Management Team, including the Chief Information Security Officer (CISO), Senior Official for Privacy (SOP), Information System Security Officer (ISSO), and the Business Owner of the affected system. If third-party vendors or business associates are involved, inform your Contracting Officer’s Representative (COR) to activate contractual breach response protocols.

After notifying stakeholders, secure and document all relevant information immediately.

Preserve Evidence

Isolate compromised systems carefully to avoid damaging forensic data. Secure system logs, access records, and audit trails to prevent them from being overwritten or deleted. Ensure all system details are ready for review by your Incident Management and Breach Analysis Teams. Use a standardized Incident Report Template to document every action taken. Coordination is key to maintaining both security and compliance.
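One common way to show that preserved logs were not altered after collection is to record a cryptographic hash of each file alongside a UTC collection timestamp. The sketch below assumes the evidence has already been copied into a dedicated directory; the function names and manifest layout are hypothetical and would need to be mapped onto your own Incident Report Template.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large logs need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_evidence_manifest(evidence_dir: Path, collected_by: str) -> dict:
    """List every file under evidence_dir with its size and SHA-256 digest.

    Hypothetical manifest layout; adapt the fields to your own template.
    """
    files = []
    for path in sorted(p for p in evidence_dir.rglob("*") if p.is_file()):
        files.append({
            "file": path.relative_to(evidence_dir).as_posix(),
            "bytes": path.stat().st_size,
            "sha256": sha256_of_file(path),
        })
    return {
        "collected_by": collected_by,
        "collected_at_utc": datetime.now(timezone.utc).isoformat(),
        "files": files,
    }
```

Re-hashing a file later and comparing digests shows whether it changed. Store the manifest (for example, serialized with `json.dumps`) outside the evidence directory so it is not swept into its own hash list.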

For healthcare organizations aiming to improve their breach response, integrated tools like the Censinet RiskOps™ platform can simplify documentation and coordination efforts, making incident management more efficient.

Breach Scope and Risk Assessment

After containing a breach and notifying the necessary stakeholders, the next critical step is figuring out exactly what happened and how severe the situation is. This assessment shapes every decision moving forward - whether patients need to be informed or what details must be reported to the Department of Health and Human Services (HHS). Accurately evaluating the breach's scope is a cornerstone of your response plan.

Identify Affected PHI and Systems

The first step is pinpointing which protected health information (PHI) was compromised and determining how many individuals were impacted. This involves reviewing logs, access records, and network traffic to identify the types of PHI involved - such as names, Social Security numbers, or medical diagnoses - and tracking how data moved across systems like electronic health records (EHRs), billing platforms, and vendor portals.

Don't overlook hidden vulnerabilities in your data flow. For example, after the 2024 Change Healthcare ransomware attack, forensic analysis revealed that PHI for nearly one-third of Americans had been exposed across interconnected billing and claims systems [2]. Breaches often extend beyond the initially affected system because healthcare platforms are so interconnected. To get a full picture, use asset inventories and network diagrams to check every system, including backups that might hold copies of compromised data. Once you've mapped out the compromised data, you can move on to evaluating the risk using HIPAA's four-factor approach.
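The point about interconnected systems can be made concrete by treating a data-flow diagram as a directed graph and walking it outward from the system where the intrusion was found. In this sketch the system names and edges are hypothetical placeholders; in practice they would come from your asset inventory and network diagrams.

```python
from collections import deque


def systems_in_scope(data_flows: dict[str, list[str]], start: str) -> list[str]:
    """Breadth-first walk over 'system -> systems it sends PHI to' edges.

    Returns every system reachable from `start` (including `start`) in the
    order it was discovered.
    """
    seen = {start}
    order = [start]
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for downstream in data_flows.get(current, []):
            if downstream not in seen:
                seen.add(downstream)
                order.append(downstream)
                queue.append(downstream)
    return order


# Hypothetical data-flow map built from an asset inventory.
FLOWS = {
    "ehr": ["billing", "nightly_backup"],
    "billing": ["claims_clearinghouse", "nightly_backup"],
    "claims_clearinghouse": ["payer_portal"],
    "patient_portal": ["ehr"],
}

print(systems_in_scope(FLOWS, "billing"))
# ['billing', 'claims_clearinghouse', 'nightly_backup', 'payer_portal']
```

This only follows where PHI flows, so it finds copies of the data; an attacker's lateral movement can also reach upstream systems, so treat the result as a starting list for the investigation rather than a final scope.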

Perform a 4-Factor Risk Assessment

HIPAA outlines a four-factor framework for evaluating the probability that PHI has been compromised in a breach:

- Factor 1: Assess the nature and extent of the PHI involved. For instance, unencrypted Social Security numbers combined with medical diagnoses represent a higher risk than a simple mailing list.

- Factor 2: Determine who accessed the PHI without authorization. A breach involving an external hacker carries more risk than an internal team member accidentally viewing records.

- Factor 3: Evaluate whether the PHI was actually acquired or merely viewed. Files that were downloaded or copied pose a higher risk than data that was only seen briefly.

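- Factor 4: Review your mitigation efforts. Actions like retrieving the data or obtaining the recipient's written assurance that it has been destroyed and will not be further used or disclosed can significantly reduce risk.

Assign a risk level (low, medium, or high) to each of these factors, and use these ratings to judge the overall probability that the PHI was compromised. HIPAA presumes that an impermissible use or disclosure is a breach, so notification is required unless your assessment demonstrates a low probability of compromise. Effective safeguards keep many incidents below that threshold; in particular, PHI encrypted in line with HHS guidance is considered "secured," and its loss does not trigger breach notification.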
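The low/medium/high rating described above can be captured in a small record so every assessment is scored the same way. This is a minimal sketch under one stated assumption: the overall rating equals the worst single factor. HIPAA does not prescribe a scoring formula, and the class and field names here are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class FourFactorAssessment:
    nature_and_extent: Risk          # Factor 1: types of PHI, re-identification risk
    unauthorized_person: Risk        # Factor 2: who used or received the PHI
    acquired_or_viewed: Risk         # Factor 3: actually acquired vs. only viewed
    residual_after_mitigation: Risk  # Factor 4: risk remaining after mitigation

    def overall(self) -> Risk:
        # Conservative assumption: the overall rating equals the worst factor.
        return max(self.nature_and_extent, self.unauthorized_person,
                   self.acquired_or_viewed, self.residual_after_mitigation)

    def notification_required(self) -> bool:
        # Notify unless the assessment shows a low probability of compromise.
        return self.overall() > Risk.LOW


# Hypothetical incident: a spreadsheet with diagnoses was emailed to the wrong
# clinic, which confirmed in writing that it deleted the message unopened.
misdirected = FourFactorAssessment(
    nature_and_extent=Risk.MEDIUM,
    unauthorized_person=Risk.LOW,
    acquired_or_viewed=Risk.LOW,
    residual_after_mitigation=Risk.LOW,
)
print(misdirected.overall().name, misdirected.notification_required())
# MEDIUM True
```

A worst-factor rule deliberately errs toward notifying. A weighted score is a reasonable alternative, but either way it is the written rationale behind each rating that supports your determination.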

Document Findings and Breach Determination

Thorough documentation is essential to avoid regulatory penalties. Keep detailed records of your evaluation, including evidence, assumptions, risk scores, team involvement, and timestamps.

If your assessment concludes that the incident poses a low probability of compromise and doesn't require notification, your documentation must clearly support that conclusion, because HIPAA places the burden of proof on you. For example, an organization that skips notification after a laptop theft should be able to show that the device used AES-256 encryption and was successfully wiped remotely. Remember, HIPAA requires you to retain all breach determination records for six years.

For healthcare organizations juggling complex vendor relationships and sprawling data systems, platforms like Censinet RiskOps™ can simplify documentation and even provide benchmarks by comparing your risk assessment to similar industry incidents.

Notification and Reporting Requirements

Once you've contained the breach and assessed its impact, it's time to address the notification and reporting requirements. HIPAA enforces strict timelines for breach notifications. As Kevin Henry, a HIPAA Specialist at Accountable, explains, "The 60-day clock starts on the date the breach is discovered - or the date it reasonably should have been discovered with diligent monitoring" [3]. HIPAA also requires notification without unreasonable delay, so treat 60 days as an outer limit rather than a target and notify as soon as you reasonably can.
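The 60-day outer limit is simple calendar arithmetic, but it is worth computing the same way every time. This sketch assumes the discovery date counts as day 0; if your counsel counts differently, adjust the constant. The function name is hypothetical.

```python
from datetime import date, timedelta

OUTER_LIMIT_DAYS = 60  # calendar days; an outer limit, not a target


def individual_notice_deadline(discovered: date) -> date:
    """Latest date for individual notice, counting the discovery date as day 0.

    `discovered` is the day the breach became known, or the day it would
    have become known with reasonable diligence, whichever is earlier.
    """
    return discovered + timedelta(days=OUTER_LIMIT_DAYS)


print(individual_notice_deadline(date(2024, 3, 1)))  # 2024-04-30
```

A state law with a shorter deadline would simply replace the 60 in this calculation for residents of that state.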

Notify Affected Individuals

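All affected individuals must be notified in writing (by first-class mail, or by email if they have agreed to electronic notice) without unreasonable delay and no later than 60 calendar days after discovering the breach [3][4]. The notification must include:

- A brief description of the incident

- The dates the breach occurred and was discovered

- The types of PHI involved (e.g., Social Security numbers, medical diagnoses, billing details)

- Steps individuals can take to protect themselves, such as setting up fraud alerts or freezing credit [3][5]

- What your organization is doing to investigate the breach, mitigate harm, and prevent recurrence

- Contact procedures for questions, including a toll-free number, email address, website, or postal address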

The CMS Breach Response Plan highlights the importance of clarity: "Notification provided to individuals potentially affected by a breach must be concise and use plain language. Notifications should avoid generic or repetitive language and should be tailored to the specific breach" [4].

Draft these notices quickly and update them as needed [3]. If contact information is insufficient or out of date for 10 or more individuals, you must provide substitute notice: either a conspicuous posting on your website's home page for 90 days or a notice in major print or broadcast media where those individuals likely live, together with a toll-free number that stays active for at least 90 days [3][5]. For larger incidents, consider setting up a dedicated call center with trained staff to handle inquiries and provide accurate information.
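The substitute-notice rule is a threshold decision on how many people cannot be reached. The sketch below is a simplified reading; the fewer-than-10 branch reflects the Breach Notification Rule's alternative-notice provision, which the paragraph above does not discuss, and the function name and return strings are hypothetical.

```python
def substitute_notice_method(missing_contact_count: int) -> str:
    """Choose a substitute-notice path for individuals whose contact
    information is insufficient or out of date (simplified reading)."""
    if missing_contact_count <= 0:
        return "none needed"
    if missing_contact_count < 10:
        # Fewer than 10: alternative written notice, telephone, or other means.
        return "alternative written notice, telephone, or other means"
    # 10 or more: web posting or major media, plus a toll-free number.
    return ("conspicuous home-page posting for 90 days or major print/broadcast "
            "media, plus a toll-free number active for at least 90 days")


for n in (0, 4, 25):
    print(n, "->", substitute_notice_method(n))
```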

Report to Regulatory Authorities

The scope of your breach determines how and when you report to the HHS Office for Civil Rights (OCR):

- Breaches affecting 500 or more individuals: Submit a report through the OCR online portal within 60 calendar days of discovery [3].

- Breaches affecting fewer than 500 individuals: File an annual report within 60 days after the end of the calendar year [3].
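The two OCR reporting paths above differ mainly in how the deadline is computed, and the annual path has a leap-year wrinkle: 60 days after December 31 lands on February 29 in a leap year and March 1 otherwise. This is a minimal sketch; the function name is hypothetical, and it assumes the affected count is already known.

```python
from datetime import date, timedelta


def hhs_report_deadline(affected: int, discovered: date) -> date:
    """Outer deadline for reporting a breach to HHS OCR.

    - 500 or more individuals: within 60 calendar days of discovery.
    - Fewer than 500: in the annual report, due 60 days after the end of
      the calendar year in which the breach was discovered.
    """
    if affected >= 500:
        return discovered + timedelta(days=60)
    year_end = date(discovered.year, 12, 31)
    return year_end + timedelta(days=60)


print(hhs_report_deadline(1200, date(2024, 5, 10)))  # 2024-07-09
print(hhs_report_deadline(40, date(2023, 5, 10)))    # 2024-02-29 (leap year)
print(hhs_report_deadline(40, date(2024, 5, 10)))    # 2025-03-01
```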

Additionally, retain all breach-related documentation for at least six years. Business associates must notify you of breaches without unreasonable delay and no later than 60 days after discovery (or sooner, if your contract requires it), while federal contractors and CMS vendors are required to report within one hour of discovery [3][4].

Media Notification (If Required)

In cases where a breach affects more than 500 residents of a single state or jurisdiction, you must notify prominent media outlets serving that area within 60 calendar days of discovery [3][5]. Coordinate media notices with individual notices so the details match. Some state breach laws impose shorter deadlines, often 30 to 45 days, so verify the residency of affected individuals and comply with the strictest applicable timeline. The main exception is a law enforcement delay: if an official states that notification would impede a criminal investigation or damage national security, you may delay notification for the period specified in a written statement, or for no more than 30 days after an oral statement unless a written one follows [3].
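Because the media trigger is counted per state and uses "more than 500" rather than "500 or more," it is easy to misapply. The sketch below tallies a breach roster by state; the roster format (one state code per affected person) and the function name are assumptions, and state laws may set their own, different thresholds.

```python
from collections import Counter


def states_requiring_media_notice(resident_states: list[str]) -> list[str]:
    """Return jurisdictions with MORE than 500 affected residents.

    HIPAA's media-notice trigger is more than 500 residents of one State or
    jurisdiction; the HHS 60-day report (500 or more in total) is a
    separate test.
    """
    counts = Counter(resident_states)
    return sorted(state for state, n in counts.items() if n > 500)


# Hypothetical breach roster: one state code per affected individual.
roster = ["MD"] * 501 + ["VA"] * 500 + ["DC"] * 120
print(states_requiring_media_notice(roster))  # ['MD']
```

In this example 1,121 people are affected in total, so the HHS 60-day report applies, but only Maryland crosses the media threshold; Virginia, at exactly 500, does not.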

Incorporating these notification and reporting steps into your breach response plan is essential for staying compliant and safeguarding patient data. Streamlining these processes with tools like Censinet RiskOps™ can help ensure timely and effective action.

Post-Breach Review and Prevention

Once notifications are handled, complete a formal post-breach review. This process helps uncover what went wrong and ensures steps are taken to prevent future incidents. The CMS Breach Response Handbook emphasizes that breach-related activities often overlap with containment, eradication, and recovery efforts [1], so prevention planning should begin while the response is still underway. This involves analyzing the incident, addressing weaknesses, and reinforcing defenses.

Perform a Post-Incident Analysis

Using insights from your risk assessment, form a Breach Analysis Team (BAT) that includes leadership, Information System Security Officers (ISSOs), and your Senior Official for Privacy [1]. The team's goal is to examine the breach from start to finish to determine whether it stemmed from external interference or internal misuse [1]. Document any process flaws, system vulnerabilities, or training gaps that contributed to the incident. A formal risk assessment by the Incident Management Team will help determine if there was unauthorized disclosure, acquisition, or loss of control over PHI [1]. This analysis sets the stage for corrective measures.

Implement Corrective Actions

Once vulnerabilities are identified, take immediate steps to protect affected individuals and prevent the issue from happening again. Develop a Notification and Mitigation Plan to address the breach [1]. Update security protocols to close identified gaps, enhance employee training on handling PHI, and impose sanctions if policies were violated [1]. If a third-party contractor was involved, review the contract and make sure your vendor agreements explicitly require contractors to bear the costs of notification and mitigation [1]. Assign responsibilities for these actions to the Business Owner, Senior Official for Privacy, and the Incident Management Team.

Strengthen Security Measures

Bolstering security requires a combination of technical, administrative, and physical safeguards. On the technical side, implement multi-factor authentication (MFA) for accessing ePHI, establish a robust patch management process to fix software vulnerabilities, and ensure encryption for data both at rest and in transit [6][8]. Administrative measures should include regular risk assessments, ongoing workforce training, and clear documentation of sanctions for policy breaches [6].

"Protecting PHI in 2026 requires a data-centric approach... Instead of focusing only on where data is stored, organizations must continuously understand what sensitive data exists, who can access it, and how that access changes over time." – Yair Cohen, Co-Founder and VP Product at Sentra [7]

Tools like Censinet RiskOps™ can simplify managing PHI risks by providing streamlined assessments and continuous monitoring. Lastly, ensure all breach-related records are kept for at least six years from their creation date [6].
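Retention dates can also be computed rather than estimated. HIPAA's documentation rule (45 CFR 164.530(j)(2)) counts six years from creation or from the date a record was last in effect, whichever is later; this sketch applies that rule, and the function name and the February 29 handling (rolling forward to March 1 so the full period is kept) are assumptions.

```python
from datetime import date
from typing import Optional


def retention_end(created: date, last_in_effect: Optional[date] = None,
                  years: int = 6) -> date:
    """Date on which a `years`-long retention period ends.

    Counts from creation or, if given, the date the record was last in
    effect, whichever is later.
    """
    start = max(created, last_in_effect) if last_in_effect else created
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        # Feb 29 with a non-leap target year: roll forward to Mar 1.
        return date(start.year + years, 3, 1)


print(retention_end(date(2024, 4, 30)))                    # 2030-04-30
print(retention_end(date(2024, 2, 29)))                    # 2030-03-01
print(retention_end(date(2023, 1, 15), date(2025, 6, 1)))  # 2031-06-01
```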

Conclusion

This checklist brings together key steps for handling breaches, from detection to long-term prevention.

Responding effectively to a PHI breach not only safeguards patient safety but also upholds trust in your organization. Having a clear plan ensures your team knows who should act, when to act, and how to meet federal deadlines. Without this clarity, chaos can take over during a crisis, increasing the risk of errors.

The checklist serves as a structured guide, helping your organization navigate each phase: from identifying a breach and assessing risks to notifying the necessary parties and conducting post-incident reviews. By using clear notification triggers, you reduce uncertainty and stay compliant, ultimately aiming to limit harm to those affected.

Tools like Censinet RiskOps™ can further strengthen your response. By automating risk assessments, simplifying vendor monitoring, and centralizing incident data for real-time reporting, it helps you maintain accurate records, meet FISMA requirements, and focus on addressing high-risk threats. Each step reinforces an ongoing cycle of improvement in protecting PHI.

But the work doesn’t stop at notifications. The post-incident analysis is where you uncover gaps, make necessary corrections, and fortify your defenses to prevent future breaches. These improvements lay the foundation for stronger, more resilient systems.

FAQs

Who leads the breach response team?

The breach response team operates under the leadership of an Incident Commander, typically the Chief Information Security Officer (CISO) or Chief Information Officer (CIO). Supporting them are crucial teams from various areas such as security, privacy, legal, clinical, and compliance. This collaboration ensures a well-organized and efficient response to incidents.

When does the 60-day HIPAA clock start?

The 60-day HIPAA notification clock starts on the day a breach involving Protected Health Information (PHI) is discovered, or the day it would have been discovered with reasonable diligence. It does not pause while you investigate; the risk assessment must be completed within the same window. Because an impermissible use or disclosure is presumed to be a breach, you must notify affected individuals within 60 days unless your risk assessment demonstrates a low probability that the PHI has been compromised.

What counts as “low risk” under HIPAA?

Under HIPAA, a "low risk" incident is one where there is a low probability that protected health information (PHI) has been compromised. You establish this through a documented four-factor risk assessment covering the nature and extent of the PHI, the unauthorized person involved, whether the PHI was actually acquired or viewed, and how far the risk has been mitigated. Note that the standard focuses on compromise of the data rather than on harm: the 2013 HIPAA Omnibus Rule replaced the earlier "risk of harm" test.