The Audit Trail Imperative: Documentation Standards for Healthcare AI

Post Summary

Healthcare AI systems are under intense scrutiny due to strict regulations like HIPAA, the EU AI Act, and the FDA's 21 CFR Part 11. The key takeaway? Audit trails are essential for compliance, transparency, and patient safety. Without them, organizations risk hefty fines, inefficiency, and compromised data integrity.

Here’s what you need to know:

- Regulatory Pressure: Non-compliance with GDPR and the EU AI Act can cost millions. Audit trails are now a must-have, not a nice-to-have.

- Data Integrity Standards: Adhering to ALCOA+ principles ensures data is traceable, accurate, and secure.

- Key Requirements:

- Track user actions, system events, and AI model details.

- Secure logs with tamper-proof technologies like cryptographic hashing and immutable storage.

- Maintain records for at least six years per HIPAA guidelines.

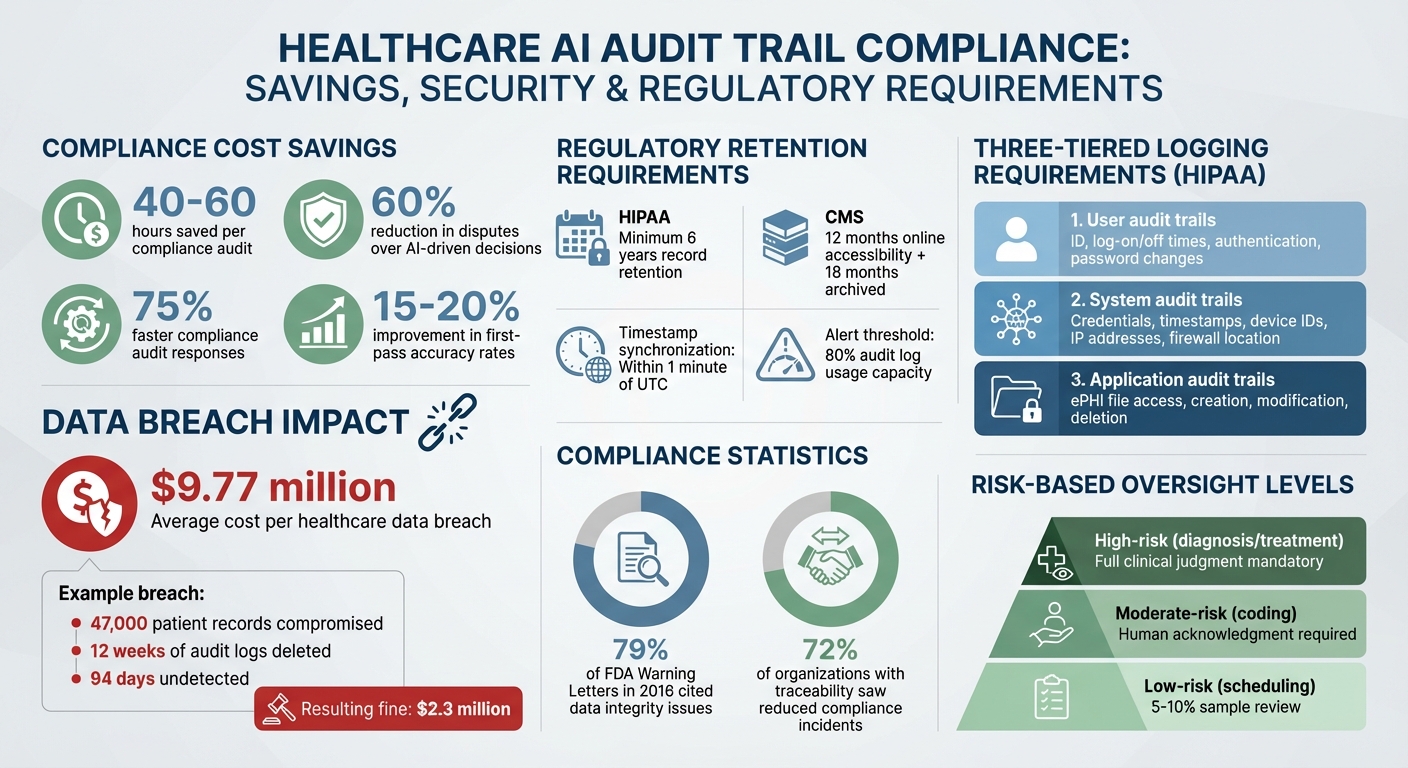

- Efficiency Gains: Organizations with robust audit trails save 40–60 hours per compliance audit and reduce disputes over AI-driven decisions by 60%.

Audit trails are more than compliance tools - they build trust and improve operational reliability in healthcare AI. Read on to learn how to implement effective, secure, and regulation-ready audit trails.

Healthcare AI Audit Trail Benefits and Requirements: Key Statistics and Compliance Data

Regulatory Requirements for AI Audit Trails

HIPAA Requirements for Audit Trails

Under the HIPAA Security Rule (45 C.F.R. §164.312(b)), Covered Entities and Business Associates are required to implement mechanisms - both technological and procedural - to record and review activities within systems handling electronic protected health information (ePHI). This includes maintaining three distinct types of audit trails:

- User audit trails: These logs capture details like user identification, log-on and log-off times, authentication attempts, and password changes.

- System audit trails: These track information such as log-on credentials, timestamps, device identifiers, IP addresses, and whether access originated inside or outside the organization's firewall.

- Application audit trails: These monitor actions like accessing ePHI files, as well as the creation, modification, or deletion of ePHI records.

Access to these audit trails must be tightly controlled and limited to audit and IT personnel to protect the integrity of compliance-related evidence. Organizations are advised to take a risk-based approach and prioritize tracking the most critical information [3].

Beyond HIPAA, other federal regulations expand on the expectations for audit trail management.

CMS and Other Federal Standards

The Centers for Medicare & Medicaid Services (CMS) imposes additional documentation requirements that supplement HIPAA. According to OMB M-21-31, systems must log details such as user identities, IP addresses, timestamps, and event outcomes. These logs must remain accessible online for 12 months and be archived for 18 months. Furthermore, systems must synchronize timestamps within one minute of UTC and issue real-time alerts when audit log storage exceeds 80% of capacity [5].

For AI systems classified as "High-Impact" - those influencing health, safety, or civil rights - OMB Memorandum M-25-21 mandates additional risk management practices and CMS AI Governance risk assessments. These systems must document specific details, such as the AI model version and the prompts used to generate outputs, to comply with federal records retention standards (44 U.S.C. §3101) [4]. As CMS guidance emphasizes:

AI tools are only to be used in support of and not as a substitute for human decisions and oversight of CMS strategic or compliance-related activities. Humans must be in the loop.

For Clinical Decision Support (CDS) software, audit trails must include details like source data, processing steps, and confidence scores for each automated decision. Similarly, AI-assisted medical coding for billing purposes requires audit trails to demonstrate alignment with official coding guidelines, ensuring decisions can be validated by certified coders [1].

Business Associate Agreements and Vendor Obligations

Ensuring compliance also extends to contractual agreements with vendors. Business Associate Agreements (BAAs) play a crucial role in defining vendor responsibilities for managing audit trails on behalf of healthcare organizations. These agreements should specify how vendors will implement the three-tiered logging requirements, maintain restricted access to audit logs, and adhere to HIPAA and CMS standards.

Vendors handling ePHI must adopt safeguards such as encryption, dual authorization for sensitive log actions, and non-repudiation measures to verify system activities [5]. BAAs should also address AI-specific requirements, such as tracking AI model versions and prompts, as mandated by CMS. Vendors are further obligated to comply with federal records retention policies, as AI-generated data is subject to these regulations and the Freedom of Information Act (FOIA) [4].


How to Design and Implement Effective Audit Trails

Real-Time Logging and Transparent Records

Audit logs are only as useful as the details they capture. To be effective, each log entry should include six key elements: Who (user identity or NPI number), What (specific action or resource accessed), When (timestamp), Where (IP address and device details), Why (clinical purpose), and Outcome (success, failure, or changes made) [6]. This level of detail ensures that every action is traceable and meets regulatory standards.
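As a minimal sketch of the six elements above, the following Python dataclass models one log entry. The class name, field names, and sample values are hypothetical and chosen only for illustration; a production schema would follow the organization's own data dictionary.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class AuditEvent:
    """One audit log entry covering the six elements (hypothetical field names)."""
    who: str       # user identity or NPI number
    what: str      # action or resource accessed
    when: str      # ISO 8601 timestamp in UTC
    where: str     # IP address and device details
    why: str       # clinical purpose
    outcome: str   # success, failure, or changes made


def new_event(who, what, where, why, outcome):
    """Build an event stamped with the current UTC time."""
    when = datetime.now(timezone.utc).isoformat()
    return AuditEvent(who, what, when, where, why, outcome)


def to_json(event):
    """Serialize with sorted keys so identical events always produce identical text."""
    return json.dumps(asdict(event), sort_keys=True)


event = new_event(
    who="npi:0000000000",              # placeholder identifier, not a real NPI
    what="read:patient-chart",
    where="10.0.0.12/workstation-7",
    why="medication reconciliation",
    outcome="success",
)
print(to_json(event))
```

Freezing the dataclass means an entry cannot be modified in memory after it is created, and sorted-key serialization gives a stable text form that is convenient if entries are later hashed.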

One major risk to audit trails is the possibility of "mutable logs", where unauthorized users can alter or delete records to cover their tracks. This vulnerability can amplify the damage from data breaches, which cost healthcare organizations an average of $9.77 million per incident [6]. As Piyoosh Rai from Towards AI explains:

The technical root cause? Mutable audit logs. Someone could modify the evidence of their own crime [6].

To safeguard logs, implement technologies like cryptographic hash chains or Merkle trees. These methods use SHA-256 hashing to link log entries, so any alteration is exposed the next time the chain is verified. For systems managing millions of entries, Merkle trees are particularly efficient, allowing a single entry to be verified in O(log n) time [6]. Additionally, write-once storage solutions such as AWS S3 Object Lock or Azure Immutable Blob Storage prevent logs from being altered or deleted during the retention period, even by administrators [6][7].
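The hash-chain idea can be sketched in a few lines of Python using the standard `hashlib` module. The entry layout, the all-zero genesis value, and the function names are assumptions made for this example, not a prescribed format.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the first entry's prev_hash


def entry_hash(prev_hash, payload):
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + body).hexdigest()


def append_entry(chain, payload):
    """Append a payload, linking it to the hash of the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": entry_hash(prev_hash, payload),
    })


def first_broken_index(chain):
    """Return the index of the first entry that fails verification, or None."""
    prev_hash = GENESIS
    for i, entry in enumerate(chain):
        if entry["prev_hash"] != prev_hash:
            return i
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return i
        prev_hash = entry["hash"]
    return None


log = []
append_entry(log, {"who": "dr-a", "what": "read:chart-1"})
append_entry(log, {"who": "dr-b", "what": "update:chart-1"})
append_entry(log, {"who": "dr-a", "what": "read:chart-2"})

print(first_broken_index(log))             # None: the chain is intact
log[1]["payload"]["who"] = "someone-else"  # simulate tampering
print(first_broken_index(log))             # 1: the altered entry no longer matches its hash
```

A chain on its own does not reveal that the most recent entries were truncated, which is one reason to pair it with write-once storage or to record the latest hash somewhere the log writer cannot modify.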

Healthcare AI systems also require specialized audit trail features. These include tracking MLOps artifacts, such as training data lineage, model versions, input prompts, confidence scores, and human review sign-offs [2][7]. The ALCOA+ framework - which requires data to be Attributable, Legible, Contemporaneous, Original, Accurate, Complete, Consistent, Enduring, and Available, with Traceable added in the later ALCOA++ extension - provides a solid foundation for maintaining integrity [2].

To avoid slowing down clinical workflows, use write-behind caching for logging. Pair this with correlation IDs to link AI events directly to Electronic Health Record (EHR) entries, enabling seamless bidirectional tracing [1][7].

Adding Human Oversight to AI Documentation

While automated logging is critical, human oversight plays an equally important role in ensuring AI outputs meet clinical standards. The level of oversight should match the risk level of the AI-driven decision:

- Low-risk tasks, like appointment scheduling, may only require periodic reviews of a small sample (5-10%) of outputs.

- Moderate-risk functions, such as ICD-10 coding, typically need human acknowledgment before implementation.

- High-risk decisions, such as diagnoses or treatment plans, demand full clinical judgment, with AI serving as a support tool [7].

Audit trails should document the Human Reviewer ID to identify the clinician who validated the AI output. If a clinician overrides an AI recommendation, the system must log both the original AI suggestion and the human decision, along with the rationale for the override. A high rate of overrides can signal the need for retraining or refining the AI model.
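One way to capture these override rules is sketched below. The record layout, function names, and placeholder codes are hypothetical; the point is only that the original suggestion is always kept, an override without a rationale is rejected, and the override rate can be computed from the same records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewRecord:
    reviewer_id: str      # clinician who validated the output
    ai_suggestion: str    # original AI recommendation, always kept
    final_decision: str   # what the clinician actually decided
    rationale: str        # required whenever the two differ

    @property
    def overridden(self):
        return self.ai_suggestion != self.final_decision


def record_review(reviewer_id, ai_suggestion, final_decision, rationale=""):
    """Create a review record, refusing overrides that lack a rationale."""
    if ai_suggestion != final_decision and not rationale.strip():
        raise ValueError("an override must include a rationale")
    return ReviewRecord(reviewer_id, ai_suggestion, final_decision, rationale)


def override_rate(records):
    """Fraction of reviewed outputs where the clinician overrode the AI."""
    if not records:
        return 0.0
    return sum(r.overridden for r in records) / len(records)


reviews = [
    record_review("clinician-1", "code-A", "code-A"),
    record_review("clinician-2", "code-A", "code-B", "documentation supports code-B"),
]
print(override_rate(reviews))  # 0.5
```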

Adrien Laurent from Intuition Labs highlights the value of treating AI outputs as controlled records:

AI belongs in GxP when you prove data integrity at every step. The quickest way to do that is to treat training data, prompts, model context, and outputs as controlled records [2].

To balance privacy and compliance, implement automated redaction tools that mask patient identifiers in logs while preserving decision logic for auditors. Organizations that use audit analytics to refine their models report a 15-20% improvement in first-pass accuracy rates [1]. This approach not only strengthens compliance but also enhances the reliability of AI systems.
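A simple form of field-level masking might look like the sketch below. It assumes log entries are dictionaries and that the identifier field names are known in advance; both are assumptions for illustration. Identifier values are replaced with keyed pseudonyms (HMAC-SHA-256) so an auditor can still see that two entries refer to the same patient without seeing who that patient is. This is a readability aid for logs, not a de-identification method under any particular standard.

```python
import hashlib
import hmac

# Hypothetical field names; a real schema would define its own identifier list.
IDENTIFIER_FIELDS = {"patient_name", "mrn", "date_of_birth"}


def redact(entry, secret_key):
    """Return a copy of entry with identifier fields replaced by keyed pseudonyms."""
    redacted = {}
    for field, value in entry.items():
        if field in IDENTIFIER_FIELDS:
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            redacted[field] = "redacted:" + digest.hexdigest()[:16]
        else:
            redacted[field] = value  # decision logic is left untouched
    return redacted


key = b"example-key-held-by-compliance"  # placeholder; manage real keys in a secrets store
entry = {"mrn": "12345", "model_version": "v3", "confidence": 0.91}
print(redact(entry, key))
```

Because the pseudonym depends on the key, whoever holds the key controls who can link masked entries back to a known identifier.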

Documenting AI Model Updates and Retraining

Thorough documentation of model updates is essential for maintaining accountability. Each update should record the model version/hash, dataset ID, and any changes in performance metrics [2]. Updates must go through a formal approval process, including an electronic signature from a clinician or compliance officer.

Every AI-generated output should be linked to the exact model version used at the time. This allows organizations to trace decisions back to the specific model configuration, even months or years later. HIPAA mandates that these records be retained for at least six years from the date of creation or the date they were last in effect, whichever is later [6][7].

To detect issues like model drift, continuously analyze audit trails for patterns such as declining confidence scores or rising override rates. Set performance thresholds that trigger mandatory retraining or re-validation. For example, if confidence scores drop below 85% for more than 5% of decisions over a 30-day period, initiate a formal review.
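The example threshold above can be expressed as a small check. This is a sketch under stated assumptions: decisions are available as (timestamp, confidence) pairs, confidence is a fraction between 0 and 1, and the function and parameter names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def needs_review(decisions, now, min_confidence=0.85,
                 max_low_fraction=0.05, window_days=30):
    """Return True when too many recent decisions fall below the confidence floor.

    decisions: iterable of (timestamp, confidence) pairs with timezone-aware datetimes.
    """
    cutoff = now - timedelta(days=window_days)
    recent = [conf for ts, conf in decisions if ts >= cutoff]
    if not recent:
        return False
    low = sum(1 for conf in recent if conf < min_confidence)
    return low / len(recent) > max_low_fraction


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
yesterday = now - timedelta(days=1)
sample = [(yesterday, 0.92)] * 94 + [(yesterday, 0.60)] * 6
print(needs_review(sample, now))  # True: 6% of decisions are below 0.85
```

The same pattern applies to override rates: compute the fraction over a rolling window and compare it with a threshold agreed on by the governance team.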

Manage storage costs by using tiered storage: keep recent logs (0-90 days) in high-performance "hot" storage for quick access during audits, and move older logs to more affordable "cold" storage while maintaining the six-year retention requirement [1]. Event-driven architectures can simplify this process by capturing audit data in real time through healthcare-compliant message queues, eliminating the need for log reconstruction [1].
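A tiering rule along these lines could be sketched as follows. The 90-day and six-year figures come from the text; the tier labels, the function names, and the choice to count retention from the creation date are assumptions for this example.

```python
from datetime import date

HOT_DAYS = 90         # hot window described above
RETENTION_YEARS = 6   # retention period cited above


def retention_end(created):
    """Date six years after creation; Feb 29 falls back to Feb 28."""
    try:
        return created.replace(year=created.year + RETENTION_YEARS)
    except ValueError:
        return created.replace(year=created.year + RETENTION_YEARS, day=28)


def storage_tier(created, today):
    """Classify a log by age: hot, cold, or past its retention date."""
    if (today - created).days <= HOT_DAYS:
        return "hot"
    if today <= retention_end(created):
        return "cold"
    return "past-retention"  # flag for policy review rather than deleting automatically


today = date(2025, 6, 1)
print(storage_tier(date(2025, 4, 15), today))  # hot
print(storage_tier(date(2023, 1, 10), today))  # cold
print(storage_tier(date(2019, 1, 1), today))   # past-retention
```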

Organizations that integrate traceability mechanisms throughout the AI lifecycle report significant benefits. For instance, 72% reported fewer compliance-related incidents. The cost of gaps is also documented: 79% of FDA Warning Letters in 2016 cited data integrity issues, underscoring the importance of comprehensive documentation in healthcare AI [8][9].


Using Censinet RiskOps™ for Audit Trail Management

Censinet RiskOps™ takes the complexity out of managing audit trails by combining automated evidence collection with a focus on AI governance.

Automated Evidence Collection and Validation

One of the standout features of Censinet RiskOps™ is its ability to handle evidence collection automatically. Instead of relying on manual documentation, the platform captures data in real time, logging key details like model inputs and outputs, user interactions, and system changes. This constant monitoring ensures there are no gaps or inconsistencies in the audit trail.

The Cybersecurity Data Room™ plays a critical role by maintaining a tamper-proof record of all risk data and evidence. This creates a comprehensive history that tracks AI model updates and retraining cycles. When changes occur, the platform flags missing documentation - such as Business Associate Agreements or validation reports - and automatically collects and validates the required data. Each piece of evidence is timestamped and protected through cryptographic hashing and immutable logging, which ensures it meets the strict standards required for healthcare audits.

Terry Grogan, CISO at Tower Health, highlights the platform's impact on efficiency:

Censinet RiskOps allowed 3 FTEs to go back to their real jobs! Now we do a lot more risk assessments with only 2 FTEs required. [10]

Additionally, the delta-based reassessment feature identifies changes in AI model configurations or retraining protocols since the last assessment, ensuring nothing is overlooked.

Centralized Dashboard for AI Risk Management

Censinet RiskOps™ simplifies oversight by offering a single dashboard where healthcare organizations can manage compliance for all their AI applications. This eliminates the need to juggle multiple systems, enabling teams to monitor AI-related risks, policies, and audit trail data in one place. The dashboard helps organizations quickly determine which AI systems are audit-ready and which need attention - an essential capability for meeting HIPAA, CMS, and other regulatory standards.

The dashboard doesn’t just organize data; it turns it into actionable insights. For example, it can highlight which AI systems generate the most alerts or how model changes affect performance. Matt Christensen, Sr. Director GRC at Intermountain Health, underscores the platform's healthcare-specific design:

Healthcare is the most complex industry... You can't just take a tool and apply it to healthcare if it wasn't built specifically for healthcare. [10]

With its Digital Risk Catalog™, which includes data on over 50,000 vendors and products [10][11], and its Censinet Risk Network, connecting more than 100 provider and payer facilities [11], the platform helps organizations benchmark their practices and identify new risks in their vendor ecosystem. This centralized approach naturally encourages better collaboration across governance, risk, and compliance (GRC) teams.

Improved Collaboration Across GRC Teams

Censinet RiskOps™ fosters teamwork by breaking down silos between governance, risk, and compliance teams. Instead of maintaining separate records, all stakeholders share access to audit trails, compliance tasks, and risk assessments. This unified workflow allows teams to assign tasks, track progress, and communicate directly within the platform.

Acting as a central hub for AI governance, Censinet AI™ routes key findings and risks to the appropriate stakeholders, such as the AI governance committee. For instance, if a compliance officer identifies a documentation gap, they can assign it to a risk analyst for investigation and have the governance team approve the remediation plan - all within the same system. This approach reduces delays, avoids duplicate work, and ensures consistent compliance interpretations.

The platform also keeps track of all Corrective Action Plans (CAPs) and remediation efforts [11]. Organizations can assign AI-related tasks to specific team members, ensuring every action is logged and completed. This collaborative structure allows healthcare organizations to scale their risk management efforts while maintaining oversight of AI systems that directly impact patient safety and care quality.

Common Challenges and How to Address Them

Creating reliable audit trails for healthcare AI systems comes with its own set of hurdles. Addressing these challenges requires practical strategies to maintain transparency, data integrity, and compliance with regulations. Let's break down some of these challenges and their solutions.

Handling Large Volumes of Data

AI systems in healthcare generate enormous amounts of data. For instance, a mid-sized clinic processing 500 documents daily might produce around 50GB of audit logs each month, factoring in source documents and confidence metrics [1]. Managing this sheer volume of data calls for smart storage solutions.

A tiered storage system can help. Recent logs can be stored in high-performance "hot" storage for quick access, while older logs can move to more economical "cold" archives. This approach ensures compliance with the six-year retention requirement [6] without overloading high-cost storage systems.
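To put rough numbers on this, the short calculation below extends the 50GB-per-month figure across the six-year retention period. It assumes log volume stays constant and treats the 90-day hot window as three months; both are simplifications.

```python
GB_PER_MONTH = 50          # figure quoted above for a mid-sized clinic
RETENTION_MONTHS = 6 * 12  # six-year retention requirement
HOT_MONTHS = 3             # roughly the 90-day hot window

total_gb = GB_PER_MONTH * RETENTION_MONTHS
hot_gb = GB_PER_MONTH * HOT_MONTHS
cold_gb = total_gb - hot_gb

print(total_gb, hot_gb, cold_gb)  # 3600 150 3450
```

Under these assumptions, only about 150GB of the roughly 3.6TB retained needs to sit in high-performance storage at any time.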

Another strategy is risk-based logging levels. Routine tasks may only need basic logs, while critical clinical decisions - like diagnostic support or treatment recommendations - should capture detailed information, including confidence scores and model versions. This ensures that the most important data is thoroughly documented without unnecessary clutter.

Creating Tamper-Proof Logs

Conventional databases typically let privileged users update and delete rows, which leaves logs stored in them vulnerable to tampering. A notable example involved a breach of 47,000 patient records, where an attacker deleted 12 weeks of audit logs, leaving the incident undetected for 94 days. This resulted in a hefty $2.3 million fine from the Department of Health and Human Services [6].

To prevent such vulnerabilities, immutable storage solutions are essential. Options like AWS S3 Object Lock or Azure Immutable Blob Storage use Write-Once-Read-Many (WORM) technology, which blocks deletion or modification of logs until the retention period expires, even by administrators when a strict retention mode is used. Additionally, primary audit databases should be configured as append-only, with UPDATE and DELETE permissions explicitly revoked.
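The append-only idea can be demonstrated with Python's built-in `sqlite3` module. SQLite has no REVOKE statement, so in this sketch triggers stand in for the permission change; on a server database, revoking UPDATE and DELETE from the application role is the analogous step. The table and trigger names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE audit_log (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    actor TEXT NOT NULL,
    action TEXT NOT NULL,
    logged_at TEXT NOT NULL DEFAULT (strftime('%Y-%m-%dT%H:%M:%fZ', 'now'))
);
CREATE TRIGGER audit_log_no_update BEFORE UPDATE ON audit_log
BEGIN
    SELECT RAISE(ABORT, 'audit_log is append-only');
END;
CREATE TRIGGER audit_log_no_delete BEFORE DELETE ON audit_log
BEGIN
    SELECT RAISE(ABORT, 'audit_log is append-only');
END;
""")


def try_statement(sql):
    """Run a statement and report whether the database allowed it."""
    try:
        conn.execute(sql)
        return "allowed"
    except sqlite3.DatabaseError as exc:
        return f"blocked: {exc}"


# Inserts succeed; the triggers reject any later change to existing rows.
conn.execute("INSERT INTO audit_log (actor, action) VALUES (?, ?)",
             ("dr-a", "read:chart-1"))
print(try_statement("UPDATE audit_log SET actor = 'someone-else'"))
print(try_statement("DELETE FROM audit_log"))
print(conn.execute("SELECT COUNT(*) FROM audit_log").fetchone()[0])  # 1
```

Controls like these guard against application-level writes, but anyone able to alter the schema could remove them, which is why they complement WORM storage rather than replace it.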

For added protection, cryptographic hash chaining can link each log entry to the previous one using algorithms like SHA-256. If someone alters a log entry, the chain breaks, and the tampering is detected the next time the chain is verified [6]. For organizations managing millions of entries, Merkle trees provide a way to verify the integrity of individual entries efficiently without processing the entire chain. Automated background checks can continuously monitor these hash chains, flagging any inconsistencies [6].
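To illustrate why Merkle trees scale well, the sketch below builds a tree over a handful of log entries and verifies one entry using only its sibling hashes. The leaf and node prefixes, the duplicate-last-node padding for odd levels, and the function names are choices made for this example; production designs often handle odd levels differently.

```python
import hashlib


def _h(data):
    return hashlib.sha256(data).digest()


def build_levels(leaves):
    """Return all tree levels, from leaf hashes up to the single root hash."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [_h(b"\x00" + leaf) for leaf in leaves]   # prefix marks leaf hashes
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]               # pad odd levels (simplification)
            levels[-1] = level
        level = [_h(b"\x01" + level[i] + level[i + 1])  # prefix marks interior nodes
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def inclusion_proof(levels, index):
    """Collect one sibling hash per level: about log2(n) hashes in total."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(leaf, proof, root):
    """Recompute the path from a leaf to the root using only the proof."""
    node = _h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        pair = sibling + node if sibling_is_left else node + sibling
        node = _h(b"\x01" + pair)
    return node == root


entries = [f"log-entry-{i}".encode() for i in range(5)]
levels = build_levels(entries)
root = levels[-1][0]
proof = inclusion_proof(levels, 3)
print(len(proof), verify(entries[3], proof, root))  # 3 True
print(verify(b"tampered-entry", proof, root))       # False
```

Verifying one entry touches one hash per tree level, which is where the logarithmic cost comes from: about 20 hashes for a million entries.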

Balancing Detail with System Performance

Detailed logging is essential, but it can also slow down AI processing if not handled properly [1]. The solution lies in optimizing how logs are recorded.

Asynchronous logging, combined with write-behind caching and correlation IDs, allows audit trails to be created in parallel with AI operations. This approach minimizes the impact on processing speed. With the right architecture, organizations can maintain full audit compliance while keeping performance impacts below 10% [1].
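A minimal sketch of this pattern, using only Python's standard library, is shown below. The class name, the `sink` callable, and the event fields are hypothetical. The in-memory queue stands in for the write-behind buffer; a real deployment would need a durable queue so that entries survive a crash.

```python
import queue
import threading
import uuid


class AsyncAuditLogger:
    """Buffers audit entries in memory and writes them on a background thread."""

    def __init__(self, sink):
        self._queue = queue.Queue()
        self._sink = sink  # callable that persists one entry (hypothetical)
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def log(self, correlation_id, event):
        """Enqueue and return immediately so the caller is not blocked on I/O."""
        self._queue.put({"correlation_id": correlation_id, **event})

    def _drain(self):
        while True:
            entry = self._queue.get()
            try:
                if entry is None:   # sentinel placed by close()
                    return
                self._sink(entry)
            finally:
                self._queue.task_done()

    def close(self):
        """Flush everything still queued, then stop the worker."""
        self._queue.put(None)
        self._queue.join()
        self._worker.join()


stored = []
logger = AsyncAuditLogger(stored.append)
cid = str(uuid.uuid4())   # one ID shared by the AI event and the EHR entry
logger.log(cid, {"source": "ai", "what": "suggested-code"})
logger.log(cid, {"source": "ehr", "what": "code-accepted"})
logger.close()
print([e["source"] for e in stored if e["correlation_id"] == cid])  # ['ai', 'ehr']
```

Filtering stored entries by the shared correlation ID is what allows tracing in both directions between an AI event and the corresponding EHR entry.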

Conclusion: Building Accountability in Healthcare AI

Key Takeaways

Strong audit trails can turn AI automation from a compliance risk into a reliable, trackable resource. By keeping detailed logs of input data, decision-making processes, confidence scores, and model versions for each AI action, organizations can see clear benefits: 75% faster compliance audit responses, 40–60 hours saved per audit event, 15–20% better first-pass accuracy, and 60% fewer disputes over AI-processed claims [1]. These results not only prove compliance but also encourage ongoing improvements in processes.

Equally important is ensuring technical reliability alongside thorough documentation. Cryptographic hashing and digital signatures help create a tamper-proof chain of records, suitable for use as legal evidence if needed. Integrating these systems with existing healthcare platforms further strengthens audit reliability. Tools like Censinet RiskOps™ simplify this process by automating evidence collection, offering a centralized dashboard for managing AI risks, and improving coordination in governance, risk, and compliance (GRC).

The Future of Audit Trails in Healthcare AI

As documentation standards evolve, the demand for transparency and accountability in healthcare AI is only going to grow. Regulations are advancing quickly, with explainable AI (XAI) becoming a necessity rather than a bonus. These new rules require AI decisions to be understandable to humans and call for assessments to identify potential biases. Transparency for patients is also becoming a priority, emphasizing that accountability isn't just about meeting regulations - it's about building trust with the people who rely on these systems [1]. Organizations investing in robust audit trails now will be better equipped to manage the challenges of tomorrow’s healthcare advancements while ensuring safety and scalability in their AI solutions.

FAQs

What should an AI audit log entry include?

An AI audit log entry serves as a secure and transparent record of every action taken. It should detail who carried out the activity, what was done, when it happened, where it occurred, the reasoning behind decisions, and the outcome. This is particularly crucial for automated healthcare decisions, as they can directly impact patient care and regulatory compliance. By keeping thorough and tamper-proof documentation, organizations can ensure accountability and meet necessary regulatory requirements.

How do you make healthcare AI logs tamper-proof?

To keep healthcare AI logs secure and tamper-proof, it's crucial to use immutable storage solutions, such as Write-Once-Read-Many (WORM) technology, along with cryptographic methods to preserve data integrity. Strengthen protection by encrypting logs, applying role-based access controls, and storing them in compliant repositories like SIEM systems or secure cloud storage. These practices not only protect the logs but also ensure adherence to healthcare regulations.

How can audit trails support model drift detection?

Audit trails play a crucial role in spotting model drift within healthcare AI systems. These detailed, time-stamped logs capture every action, data input, and decision-making step, offering a clear record of how the system operates over time. By tracking changes in data patterns and model performance, audit trails make it easier to pinpoint drops in accuracy or reliability. They document key details like input features, model versions, and outputs, enabling trend analysis, ensuring compliance, and helping to quickly identify anomalies that could pose risks to patient safety.