The New Perimeter: How AI Changes the Healthcare Security Landscape

Post Summary

Healthcare cybersecurity is facing a major transformation as AI becomes both a tool for protection and an enabler of new threats. Attackers are using AI to create highly targeted phishing emails, exploit vulnerabilities faster, and deploy advanced ransomware. At the same time, healthcare organizations are leveraging AI to detect breaches in seconds, predict vulnerabilities, and automate responses.

Key points:

- AI-powered threats: Advanced phishing, automated vulnerability scanning, and adaptive ransomware are on the rise.

- AI in defense: Real-time threat detection, predictive risk modeling, and automated incident response help mitigate risks.

- Rising costs: The average healthcare data breach costs $10.93 million, with ransomware incidents causing operational chaos.

- Regulatory changes: Stricter HIPAA rules expected in 2026 are likely to demand AI-specific safeguards for patient data.

To stay ahead, healthcare leaders must integrate AI-driven security tools, conduct regular audits, and prepare for evolving regulations. The future of healthcare cybersecurity will depend on balancing AI’s potential to protect against its risks.


New AI-Driven Cyber Threats in Healthcare

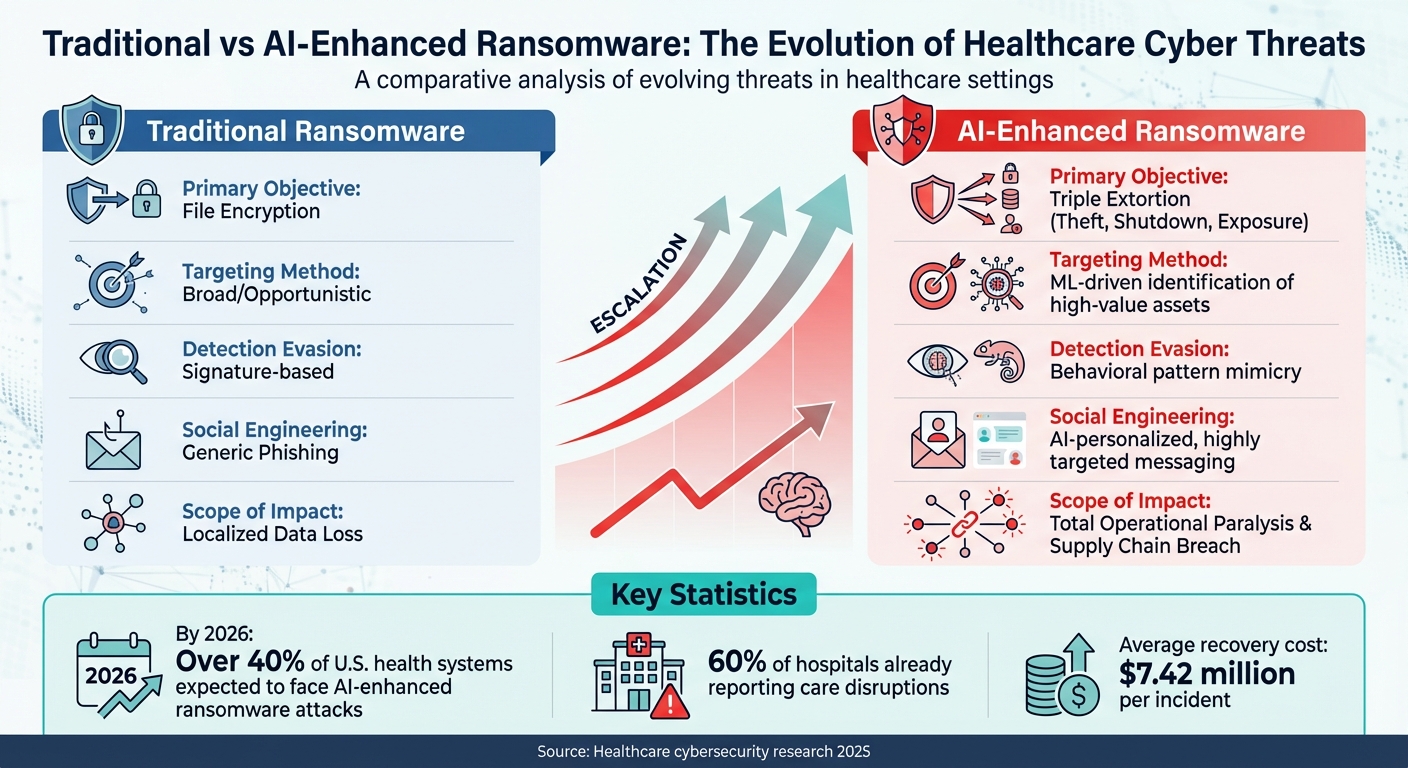

Traditional vs AI-Enhanced Ransomware in Healthcare: Key Differences

Cyberattacks in healthcare are evolving at a rapid pace, with attackers leveraging AI to outsmart traditional security measures. These AI-driven threats are faster, more adaptive, and harder to detect, creating significant challenges for the industry.

AI-Powered Phishing and Social Engineering

AI has taken phishing attacks to a whole new level. Today’s phishing emails don’t just look convincing - they feel authentic. Using machine learning, attackers analyze patient data, internal communications, and even staff writing styles to craft emails that seem legitimate. These emails might reference actual patients, ongoing treatments, or internal processes, making them incredibly believable.

This precision helps attackers escalate their campaigns and go after network vulnerabilities more efficiently.

Automated Vulnerability Scanning and Exploitation

AI tools are now capable of scanning healthcare networks at lightning speed, pinpointing weaknesses in outdated systems or unpatched electronic medical record (EMR) software. Many hospitals rely on legacy infrastructure, which makes them prime targets. These AI-driven tools not only identify vulnerabilities but also prioritize them based on potential impact, exploiting them before they can be addressed.

In some cases, a single compromised vendor connection can grant attackers access to multiple healthcare facilities, amplifying the scale of these breaches.

How AI Is Changing Ransomware Attacks

Ransomware attacks have become more aggressive and invasive with the integration of AI. By 2026, over 40% of U.S. health systems are expected to face these attacks, with 60% of hospitals already reporting care disruptions [7]. AI-enhanced ransomware introduces a "triple extortion" strategy - stealing sensitive data, shutting down systems, and threatening public exposure of the information.

What makes this form of ransomware particularly dangerous is its ability to adapt. Machine learning enables the malware to analyze network behavior, identify critical patient data, and blend seamlessly into normal traffic patterns to avoid detection. The financial toll is staggering, with recovery and penalties averaging $7.42 million per incident [7]. For many hospitals, this means reverting to paper records, halting patient care, and grappling with operational chaos.

| Attack Feature | Traditional Ransomware | AI-Enhanced Ransomware |
|---|---|---|
| Primary Objective | File Encryption | Triple Extortion (Theft, Shutdown, Exposure) |
| Targeting Method | Broad/Opportunistic | ML-driven identification of high-value assets |
| Detection Evasion | Signature-based | Behavioral pattern mimicry |
| Social Engineering | Generic Phishing | AI-personalized, highly targeted messaging |
| Scope of Impact | Localized Data Loss | Total Operational Paralysis & Supply Chain Breach |

AI Solutions for Healthcare Cybersecurity

AI isn't just a tool for attackers - it’s also a game-changer for healthcare organizations looking to strengthen their defenses. The same technology that powers advanced cyber threats can also detect them quickly, pinpoint vulnerable areas, and respond to incidents before they escalate.

Real-Time Threat Detection and Analysis

AI systems excel at monitoring network traffic around the clock, spotting unusual patterns that could indicate a breach. By analyzing large volumes of data, such as login activity, requests to access Electronic Health Records (EHRs), and file transfers, AI can identify anomalies that human analysts might miss. For instance, it could flag unusual after-hours access to patient records in real time. Cutting detection time from hours to seconds limits how much damage an intruder can do before security teams step in. Beyond detection, AI also helps predict potential threats, giving organizations a chance to act before problems arise.
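The after-hours example can be sketched with a per-user baseline of access hours. This is a minimal illustration, not a production detector: it assumes EHR access logs are available as `(user_id, timestamp)` pairs, uses a simple frequency baseline in place of a trained model, and the function names and the 2% `min_share` threshold are hypothetical.

```python
from collections import Counter, defaultdict
from datetime import datetime

def build_hour_baseline(history):
    """Count, per user, how often they accessed records in each hour of the day.

    history: iterable of (user_id, datetime) pairs taken from EHR access logs.
    """
    baseline = defaultdict(Counter)
    for user_id, ts in history:
        baseline[user_id][ts.hour] += 1
    return baseline

def is_unusual_access(baseline, user_id, ts, min_share=0.02):
    """Flag an access if the user rarely (or never) works at this hour.

    min_share is a hypothetical tuning threshold: hours that make up less than
    2% of the user's history are treated as anomalous.
    """
    counts = baseline.get(user_id)
    if not counts:
        return True  # no history yet: route to review rather than silently allow
    total = sum(counts.values())
    return counts[ts.hour] / total < min_share

# Usage: a nurse who normally works 08:00-16:59 opens a chart at 03:00.
history = [("nurse_01", datetime(2025, 3, day, hour))
           for day in range(1, 11) for hour in range(8, 17)]
baseline = build_hour_baseline(history)
print(is_unusual_access(baseline, "nurse_01", datetime(2025, 3, 12, 3)))
print(is_unusual_access(baseline, "nurse_01", datetime(2025, 3, 12, 10)))
```

Real systems would also weigh role, location, and volume of records opened, and would learn thresholds rather than hard-code them.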

Predictive Risk Modeling

Why wait for an attack when you can predict and prevent it? AI-powered predictive analytics use machine learning to analyze past breaches, system vulnerabilities, and attack patterns to identify weak spots before they’re exploited. This proactive approach allows healthcare organizations to stay ahead of AI-driven threats, which evolve far too quickly for traditional security measures to handle.

As Gianmarco Di Palma from Fondazione Policlinico Universitario Campus Bio-Medico explains in the context of clinical risk management (CRM), "The integration of Artificial Intelligence (AI) into CRM has enabled early identification of risks and errors, further enhancing patient safety measures" [8].

These predictive systems can be tuned to each organization's needs. A rural clinic, for example, faces different challenges than a large urban hospital, and the models can weigh risks accordingly. They can also help anticipate advanced threats like data poisoning, where attackers tamper with training datasets to compromise machine learning models [9]. By forecasting vulnerabilities, these systems help ensure that automated responses are ready to contain breaches as soon as they occur.
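At its simplest, predictive risk modeling turns asset characteristics into a probability-like score. The sketch below uses a logistic function over a few features. The feature set, coefficients, and asset names are hypothetical placeholders; in practice the weights would be fit, for example with logistic regression, on the organization's own incident history.

```python
import math
from dataclasses import dataclass

@dataclass
class AssetFeatures:
    name: str
    days_since_patch: int
    internet_exposed: bool
    prior_incidents: int
    legacy_os: bool

# Hypothetical coefficients. A real model would learn these from historical
# incidents and asset data rather than hard-coding them.
WEIGHTS = {
    "days_since_patch": 0.02,
    "internet_exposed": 1.5,
    "prior_incidents": 0.8,
    "legacy_os": 1.2,
}
BIAS = -4.0

def breach_probability(asset):
    """Logistic score in (0, 1): higher means more likely to be exploited."""
    z = BIAS + sum(weight * float(getattr(asset, feature))
                   for feature, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))

def rank_assets(assets):
    """Return assets sorted from highest to lowest predicted risk."""
    return sorted(assets, key=breach_probability, reverse=True)

assets = [
    AssetFeatures("scheduling-app", 5, False, 0, False),
    AssetFeatures("legacy-pacs", 180, True, 2, True),
]
for a in rank_assets(assets):
    print(f"{a.name}: {breach_probability(a):.2f}")
```

A rural clinic and an urban hospital would end up with different weights because their incident histories differ, which is one concrete way the models "tailor" themselves.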

Automated Incident Response

Speed matters in cybersecurity. AI-driven systems can respond to incidents almost instantly by isolating compromised devices, blocking suspicious IP addresses, and launching containment protocols - all without waiting for human intervention. For example, they can quarantine infected workstations, disable compromised accounts, and send detailed threat reports to security teams within minutes. The faster the response, the less damage attackers can do - whether that’s stealing sensitive data or encrypting critical systems.
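Automated response is usually expressed as a playbook that maps alert severity to containment steps. This is a hedged sketch: the severity levels, action names, and sample host and IP values are hypothetical, and a real deployment would call its own EDR, firewall, and identity-provider APIs where this code only returns a plan.

```python
from dataclasses import dataclass
from enum import IntEnum

class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4

@dataclass
class Alert:
    host: str
    user: str
    source_ip: str
    severity: Severity
    summary: str

def plan_containment(alert):
    """Map an alert to an ordered list of (action, target) steps.

    Action names are placeholders for calls into real security tooling.
    """
    steps = []
    if alert.severity >= Severity.HIGH:
        steps.append(("isolate_host", alert.host))      # quarantine the workstation
        steps.append(("disable_account", alert.user))   # cut off possibly stolen credentials
    if alert.severity >= Severity.MEDIUM:
        steps.append(("block_ip", alert.source_ip))     # stop further callbacks
    steps.append(("notify_soc", alert.summary))         # the security team always gets a report
    return steps

alert = Alert("ws-icu-07", "jdoe", "203.0.113.45", Severity.HIGH,
              "Mass file renames consistent with ransomware")
for action, target in plan_containment(alert):
    print(action, target)
```

Keeping the plan separate from execution makes it easy to log, test, or route to a human for approval before any action fires.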

How Censinet Addresses Healthcare Risk Management

Healthcare organizations face the dual challenge of acting swiftly while safeguarding patient data from AI-driven threats. Censinet RiskOps™ tackles this by offering automated processes tailored specifically for healthcare. The platform connects more than 100 provider and payer facilities through a collaborative network and provides access to a Digital Risk Catalog™. This catalog includes over 50,000 vendors and products, each assessed and risk-scored, making it easier to manage third-party risks effectively [10][11]. A standout feature is Censinet AI™, which transforms how third-party risk assessments are conducted.

Faster Third-Party Risk Assessments with Censinet AI™

Traditional vendor assessments are often slow, taking weeks or even months to complete and delaying essential partnerships. Censinet AI™ changes the game by automating the most time-intensive steps. Vendors can complete security questionnaires in seconds, while the platform summarizes evidence, captures integration details, and flags fourth-party risk exposures. With delta-based reassessments that focus only on what has changed, assessment times are reduced to less than a day [11].

Tower Health experienced this transformation firsthand. By implementing Censinet RiskOps, CISO Terry Grogan decreased the staff needed for risk assessments from 3 full-time employees (FTEs) to 2, while simultaneously increasing the total number of assessments completed.

As Grogan explained: "Censinet RiskOps allowed 3 FTEs to go back to their real jobs! Now we do a lot more risk assessments with only 2 FTEs required" [10].
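The idea behind delta-based reassessment can be illustrated generically. Censinet's implementation is not described in this post, so the following is only a sketch of the concept: it compares two hypothetical `{question_id: answer}` dictionaries from consecutive assessments and reports what was added, removed, or changed.

```python
def questionnaire_delta(previous, current):
    """Compare two {question_id: answer} dicts from consecutive assessments."""
    prev_keys, curr_keys = previous.keys(), current.keys()
    return {
        "added": {q: current[q] for q in sorted(curr_keys - prev_keys)},
        "removed": sorted(prev_keys - curr_keys),
        "changed": {q: (previous[q], current[q])
                    for q in sorted(curr_keys & prev_keys)
                    if previous[q] != current[q]},
    }

def needs_reassessment(delta):
    """Only send the vendor back to an analyst if something actually moved."""
    return any(delta.values())

# Hypothetical question IDs and answers.
last_year = {"mfa_enforced": "yes", "phi_encrypted_at_rest": "no", "pentest_date": "2023-05"}
this_year = {"mfa_enforced": "yes", "phi_encrypted_at_rest": "yes", "soc2_report": "type 2"}
delta = questionnaire_delta(last_year, this_year)
print(delta)
```

Reviewers then look only at the three items that differ instead of re-reading every answer, which is where the time savings come from.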

AI-Enhanced GRC Collaboration

Effectively managing AI risks requires seamless collaboration across diverse teams, including governance committees, compliance officers, security analysts, and clinical leaders. Censinet RiskOps simplifies this by centralizing AI risk management. Its AI risk dashboard consolidates real-time data into a single hub, making it easy for teams to monitor policies, risks, and remediation efforts - without the chaos of spreadsheets or email chains.

James Case, VP & CISO at Baptist Health, highlighted the platform’s collaborative edge: "Not only did we get rid of spreadsheets, but we have that larger community [of hospitals] to partner and work with" [10].

The platform also automates Corrective Action Plans (CAPs), identifying security gaps based on questionnaire responses and tracking remediations to closure. By eliminating fragmented communication, Censinet ensures risk management processes are efficient and focused [11]. While automation handles much of the workload, critical decisions are still guided by human expertise.
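Generating Corrective Action Plans from questionnaire responses amounts to comparing answers against required controls and tracking each gap to closure. The control catalog, remediation text, and field names below are hypothetical and only illustrate the pattern; they are not Censinet's data model.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class CorrectiveAction:
    control_id: str
    remediation: str
    due: date
    status: str = "open"

# Hypothetical control catalog: control id -> (required answer, remediation).
CONTROLS = {
    "mfa_remote_access": ("yes", "Enforce MFA on all remote-access paths"),
    "phi_encrypted_at_rest": ("yes", "Encrypt every datastore that holds PHI"),
    "incident_response_plan": ("yes", "Document and test an incident response plan"),
}

def generate_caps(responses, due):
    """Open one corrective action per control the vendor did not satisfy.

    A missing answer counts as a gap, so unanswered questions are not ignored.
    """
    return [CorrectiveAction(control_id, remediation, due)
            for control_id, (required, remediation) in CONTROLS.items()
            if responses.get(control_id) != required]

def open_caps(caps):
    """Actions still awaiting remediation evidence."""
    return [c for c in caps if c.status != "closed"]

caps = generate_caps({"mfa_remote_access": "yes", "phi_encrypted_at_rest": "no"},
                     due=date(2026, 3, 31))
caps[0].status = "closed"  # vendor submitted evidence for the first gap
print([c.control_id for c in open_caps(caps)])
```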

Balancing Automation with Human Oversight

AI excels at processing data quickly, but some healthcare decisions require human judgment and insight that machines simply can't provide. Censinet addresses this with a human-in-the-loop approach, blending automation with carefully designed review processes. Routine tasks, such as validating evidence or drafting policies, are automated, while high-stakes decisions are escalated to human experts through designated review queues. This approach allows organizations to scale their risk management efforts without sacrificing the oversight necessary for HIPAA compliance, patient safety, and clinical standards.

Matt Christensen, Sr. Director of GRC at Intermountain Health, underscored the importance of this tailored design: "Healthcare is the most complex industry... You can't just take a tool and apply it to healthcare if it wasn't built specifically for healthcare" [10].
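A human-in-the-loop design comes down to an explicit routing rule: automate only work that is routine, low-stakes, and high-confidence, and queue everything else for an expert. The task kinds, the `high_stakes` flag, and the 0.9 confidence cutoff in this sketch are assumptions for illustration, not a description of Censinet's review queues.

```python
from dataclasses import dataclass

@dataclass
class Task:
    task_id: str
    kind: str
    model_confidence: float  # 0.0-1.0, as reported by the automation
    high_stakes: bool        # e.g. accepting vendor risk, touching clinical systems

# Hypothetical list of task types considered routine enough to automate.
ROUTINE_KINDS = {"validate_evidence", "draft_policy"}

def route(task, min_confidence=0.9):
    """Return 'auto' only for routine, low-stakes, high-confidence work."""
    if (task.kind in ROUTINE_KINDS
            and not task.high_stakes
            and task.model_confidence >= min_confidence):
        return "auto"
    return "human_review"

def split_queues(tasks):
    """Partition tasks into an automated queue and a human review queue."""
    auto, review = [], []
    for t in tasks:
        (auto if route(t) == "auto" else review).append(t)
    return auto, review

tasks = [
    Task("t1", "validate_evidence", 0.97, False),
    Task("t2", "validate_evidence", 0.60, False),
    Task("t3", "accept_vendor_risk", 0.99, True),
]
auto, review = split_queues(tasks)
print([t.task_id for t in auto], [t.task_id for t in review])
```

Defaulting to `human_review` means any new or unrecognized task type is escalated rather than silently automated.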

Building AI-Enhanced Security Frameworks

Integrating AI into healthcare cybersecurity requires a significant shift in how risks are managed. The HIPAA Security Rule modernization expected in 2026 would move away from flexible "addressable" guidelines toward strict, prescriptive mandates that demand specific technical safeguards. Healthcare providers would need to keep detailed inventories of all AI tools and cloud services handling PHI (Protected Health Information), along with comprehensive logs for every AI interaction involving patient data [13].

Dr. Amanda Foster, Healthcare Compliance Advisor, explained: "The Security rule modernization expected in 2026 will fundamentally change how healthcare organizations approach AI security" [13].

This regulatory update provides a structured path for balancing innovation with compliance. For example, new security measures should include automatic detection and redaction of PHI in AI-related activities, ensuring compliance before sensitive data leaves the network. Additionally, Multi-Factor Authentication (MFA) must become a standard for any AI tool accessing patient systems or Single Sign-On (SSO) platforms [13]. Vigilant network monitoring is also critical to identifying "shadow AI" - unauthorized tools used by employees without IT oversight [13].
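Automatic PHI detection and redaction before a prompt leaves the network is often built on pattern matching as a first layer. The sketch below covers only a few structured identifiers (SSNs, a hypothetical `MRN:` format, phone numbers, emails, dates). HIPAA's de-identification standard lists 18 identifier types, and names, addresses, and free-text clues need additional techniques such as named-entity recognition, so treat this as illustrative rather than sufficient.

```python
import re

# Illustrative patterns only; they miss names, addresses and free-text identifiers.
# Order matters: SSNs and MRNs are replaced before the broader phone pattern runs.
PHI_PATTERNS = [
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("MRN", re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE)),  # hypothetical MRN format
    ("PHONE", re.compile(r"\(?\b\d{3}\)?[-.\s]\d{3}[-.\s]\d{4}\b")),
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("DATE", re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b")),
]

def redact_phi(text):
    """Replace matched identifiers with [LABEL] tags and report what was found."""
    findings = []
    for label, pattern in PHI_PATTERNS:
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            findings.append((label, count))
    return text, findings

prompt = ("Patient MRN: 00123456, SSN 123-45-6789, call (555) 123-4567 "
          "or jane.doe@example.org, seen 3/14/2025.")
redacted, findings = redact_phi(prompt)
print(redacted)
print(findings)
```

A gateway would typically block or redact the request whenever `findings` is non-empty and write the labels (not the values) to an audit log.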

The timeline for preparation is tight. Organizations should begin by auditing their AI tools and reviewing Business Associate Agreements (BAAs) by early 2026. From there, technical controls should be implemented, and risk assessments updated through mid-2026, with mock audits and control validations taking place from late 2026 to 2027 [13]. Early adoption of recognized security practices, as encouraged under the 2021 HIPAA Safe Harbor law, can reduce penalties for breaches and provide a strategic advantage [13]. These actions lay the groundwork for continuous monitoring and adaptive defenses.

Using AI for Continuous Vulnerability Assessment

Periodic security scans often leave gaps where threats can linger unnoticed. AI brings a transformative approach by enabling real-time monitoring, identifying vulnerabilities as they arise rather than waiting for scheduled scans. These tools track network activity, system configurations, and behavior patterns to flag weaknesses or exploitation attempts immediately.

Healthcare organizations should prioritize AI solutions that maintain up-to-date inventories of systems and dependencies. The Health Sector Coordinating Council (HSCC) Cybersecurity Working Group, which represents 115 healthcare entities, advises using AI-specific risk taxonomies to classify threats like data poisoning, model manipulation, and drift exploitation [14]. This proactive stance helps organizations counter attackers who increasingly use automated tools to exploit vulnerabilities within hours of discovery.

AI-driven vulnerability assessments also align with Industry 4.0 trends in healthcare, where interconnected environments involving IoT devices, cyber-physical systems, and cloud computing demand constant oversight [8]. By automating detection, security teams can focus their expertise on resolving issues rather than manual scanning, reducing the time between identifying a vulnerability and deploying a fix.
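Keeping an up-to-date inventory pays off when findings are prioritized by the business impact of the affected asset, not just by raw severity. The following sketch covers only that inventory-driven triage step, not the HSCC AI risk taxonomy. Criticality tiers, multipliers, asset names, and the placeholder vulnerability IDs are all hypothetical; the `known_exploited` flag would typically come from a feed such as CISA's Known Exploited Vulnerabilities catalog.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Finding:
    asset: str
    vuln_id: str           # placeholder IDs below, not real CVEs
    cvss: float            # CVSS base score, 0.0-10.0
    internet_facing: bool
    known_exploited: bool  # e.g. listed in a known-exploited-vulnerabilities feed

# Hypothetical criticality tiers from the asset inventory
# (3 = patient-safety or PHI-critical, 1 = low impact). Unknown assets default to 2.
ASSET_CRITICALITY = {"ehr-db-01": 3, "infusion-gateway": 3, "hr-portal": 1}

def priority(finding):
    """Combine severity, asset criticality, exposure and active exploitation."""
    score = finding.cvss * ASSET_CRITICALITY.get(finding.asset, 2)
    if finding.internet_facing:
        score *= 1.5
    if finding.known_exploited:
        score *= 2.0
    return score

def triage(findings):
    """Highest-priority findings first."""
    return sorted(findings, key=priority, reverse=True)

findings = [
    Finding("hr-portal", "VULN-0001", 9.8, True, False),
    Finding("ehr-db-01", "VULN-0002", 7.5, False, True),
    Finding("infusion-gateway", "VULN-0003", 6.0, True, False),
]
for f in triage(findings):
    print(f"{priority(f):5.1f}  {f.asset}  {f.vuln_id}")
```

Note the design choice: the 9.8-severity issue on a low-impact HR portal ranks last, because an actively exploited flaw on the EHR database is the more urgent patient-safety risk.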

Implementing Zero-Trust Security Models

Zero-trust security models build on real-time monitoring by requiring rigorous verification for every access request. The core principle - "never trust, always verify" - ensures that all users, devices, and applications must authenticate and be authorized before accessing resources, regardless of their location. AI enhances this model by analyzing access patterns, user behavior, and device health in real time to make dynamic authentication decisions that adapt to evolving risks.

In healthcare, zero-trust models are especially effective because they guard against both external breaches and insider threats. For instance, AI can establish baseline behaviors for users and devices, flagging anomalies like a nurse accessing records outside their department or a medical device connecting to an unexpected external server. These real-time analytics enable organizations to block suspicious activities before data is compromised.
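The two anomalies described above (a nurse opening records outside their department, a device talking to an unfamiliar server) can be sketched as baseline lookups. This is a deliberately simple set-membership version with hypothetical user, device, and address values; production analytics would score how far behavior deviates rather than flagging anything new.

```python
from collections import defaultdict

class BehaviorBaseline:
    """Remembers which departments each user touches and where each device connects."""

    def __init__(self):
        self.user_departments = defaultdict(set)
        self.device_destinations = defaultdict(set)

    def learn(self, user_access_log, device_flow_log):
        for user, department in user_access_log:
            self.user_departments[user].add(department)
        for device, destination in device_flow_log:
            self.device_destinations[device].add(destination)

    def unusual_access(self, user, department):
        # .get avoids creating empty entries for users we have never seen
        return department not in self.user_departments.get(user, set())

    def unusual_connection(self, device, destination):
        return destination not in self.device_destinations.get(device, set())

baseline = BehaviorBaseline()
baseline.learn(
    user_access_log=[("rn_lee", "cardiology"), ("rn_lee", "cardiology")],
    device_flow_log=[("infusion_pump_17", "10.20.5.15")],
)
print(baseline.unusual_access("rn_lee", "oncology"))
print(baseline.unusual_connection("infusion_pump_17", "203.0.113.9"))
```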

Implementing zero-trust involves segmenting networks into smaller zones, with AI managing the micro-perimeters between them. For example, clinical systems, administrative networks, and research databases should operate as separate trust zones. AI-driven access controls can then determine user permissions based on factors like role, location, device security, and current context. This segmentation minimizes the risk of lateral movement in case of a breach, confining attackers to a single zone.
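A zero-trust policy decision point evaluates every request against zone membership, device health, behavioral risk, and context. The zones, roles, 0.7 risk cutoff, and the assumption that a behavioral `risk_score` is supplied by an upstream analytics model are all hypothetical; the sketch only shows how those factors combine into allow, deny, or step-up decisions.

```python
from dataclasses import dataclass

# Hypothetical trust zones and the roles allowed into each.
ZONE_ROLES = {
    "clinical": {"physician", "nurse"},
    "administrative": {"billing", "hr"},
    "research": {"researcher"},
}

@dataclass
class AccessRequest:
    role: str
    zone: str
    device_compliant: bool  # e.g. disk encrypted, EDR running, OS patched
    on_site: bool
    mfa_verified: bool
    risk_score: float       # 0.0-1.0 from behavioral analytics (assumed input)

def decide(req, max_risk=0.7):
    """Evaluate every request on its own merits; nothing is trusted by default."""
    if req.role not in ZONE_ROLES.get(req.zone, set()):
        return "deny"         # role has no business in this zone
    if not req.device_compliant or req.risk_score >= max_risk:
        return "deny"         # unhealthy device or anomalous behavior
    if not req.on_site and not req.mfa_verified:
        return "require_mfa"  # step up before allowing remote access
    return "allow"

print(decide(AccessRequest("nurse", "clinical", True, True, False, 0.1)))
print(decide(AccessRequest("nurse", "research", True, True, True, 0.1)))
```

Because the zone check comes first, a compromised clinical account still cannot reach research or administrative systems, which is what limits lateral movement.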

Ensuring AI Tools Meet Regulatory Requirements

Healthcare organizations must address AI-specific vulnerabilities in their risk assessments, such as prompt injection attacks, data exposure during model training, and inaccuracies in AI outputs that could affect patient care [13]. BAAs should be updated to reflect AI-related concerns, including training data usage, retention policies, and the roles of subprocessors [13].

Dr. Amanda Foster highlighted: "AI security gateways that can detect and protect PHI in AI interactions will become table stakes for healthcare compliance" [13].

Establishing formal governance is essential. This includes continuous consent processes and scenario-based training to educate staff on evolving AI risks. For example, training should cover the dangers of pasting patient data into public AI tools like ChatGPT - a common compliance issue [13]. Encouraging a no-blame reporting culture can also help staff feel safe reporting algorithmic errors or "hallucinations", fostering systemic learning and ongoing improvement [8].

The concept of dynamic informed consent is gaining traction as a best practice for AI oversight. Since AI models evolve with new data, organizations should adopt continuous consent mechanisms rather than relying on one-time approvals. This ensures patients remain informed about how their data is being used by automated systems [8]. Such practices are critical for integrating AI into a comprehensive risk management strategy.

Preparing for the Future of Healthcare Cybersecurity

The rapid development of AI is pushing healthcare organizations to rethink their cybersecurity strategies. Experts predict a 40% rise in AI-driven cyber incidents by 2028, fueled by threats like deepfakes and adaptive malware [1][2]. To protect patient data and ensure compliance, organizations must prioritize regular audits, collaborative risk-sharing, benchmarking of their security measures, and management of third-party risk across the digital supply chain. These proactive steps are essential to keep pace with the evolving threat landscape.

Regular Audits: A Critical First Step

Quarterly AI audits are crucial for monitoring performance, detecting model drift, and ensuring compliance with frameworks like the NIST AI RMF. Automated auditing pipelines, such as those implemented by Mayo Clinic, have proven effective, identifying 95% of drift issues. This not only supports HIPAA compliance but also reduces false positives in threat detection [3]. Such audits help pinpoint when AI models deviate from expected behavior as they process new data, ensuring systems remain reliable and secure.
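One common way an audit pipeline detects model drift is the Population Stability Index (PSI), which compares the distribution of a model's inputs or scores at validation time with recent production data. The sketch below is a standard PSI calculation, not Mayo Clinic's pipeline; the bin count, epsilon, and sample data are assumptions. A widely used rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as worth monitoring, and above 0.25 as significant drift.

```python
import bisect
import math

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample (expected) and a recent sample (actual).

    Bin edges come from the baseline's range; eps keeps empty bins from
    producing log(0).
    """
    lo, hi = min(expected), max(expected)
    edges = [lo + (hi - lo) * i / bins for i in range(1, bins)]

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[bisect.bisect_left(edges, v)] += 1  # index 0..bins-1
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

# Hypothetical risk scores: the recent batch has shifted sharply upward.
baseline_scores = [i % 100 for i in range(1000)]
recent_scores = [min(99, s + 50) for s in baseline_scores]
print(population_stability_index(baseline_scores, baseline_scores))
print(population_stability_index(baseline_scores, recent_scores))
```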

The Power of Collaboration

Collaboration through risk-sharing networks enables organizations to strengthen their defenses collectively. Secure platforms allow for the exchange of anonymized AI threat data, amplifying the ability to predict and respond to attacks. For instance, a 2025 HHS-led consortium demonstrated a 30% reduction in response times through shared predictive models and joint simulations [4]. Similarly, Johns Hopkins Hospital detected a novel ransomware variant 48 hours earlier than it would have independently, preventing potential disruptions to patient care [2][6]. These examples highlight the value of shared intelligence in combating sophisticated threats.
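Before threat data is shared, internal identifiers are typically stripped or pseudonymized. This sketch assumes a hypothetical sharing schema: actionable indicators are passed through, internal hostnames and usernames are replaced with HMAC-SHA256 pseudonyms (stable under one organization's key, so repeat sightings can still be correlated), and every other field, including patient data and free-text notes, is dropped. Keyed pseudonyms are not full anonymization, so the key must stay private.

```python
import hashlib
import hmac

# Hypothetical sharing schema agreed with partner organizations.
SHARE_AS_IS = {"file_sha256", "malware_family", "first_seen", "c2_domain"}
PSEUDONYMIZE = {"internal_host", "username"}

def prepare_for_sharing(event, org_key):
    """Return a copy of a threat event that is safer to send to partners.

    Indicators partners can act on are kept, internal identifiers are replaced
    with keyed hashes, and every other field is dropped.
    """
    shared = {}
    for field, value in event.items():
        if field in SHARE_AS_IS:
            shared[field] = value
        elif field in PSEUDONYMIZE:
            digest = hmac.new(org_key, str(value).encode(), hashlib.sha256)
            shared[field] = digest.hexdigest()[:16]
    return shared

event = {
    "file_sha256": "e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855",
    "malware_family": "ExampleLocker",
    "internal_host": "ws-icu-07",
    "username": "jdoe",
    "patient_mrn": "00123456",
    "analyst_notes": "Found on ICU nurse station",
}
print(prepare_for_sharing(event, b"org-secret-key"))
```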

Benchmarking for Better Preparedness

Benchmarking cybersecurity practices against industry standards ensures organizations remain prepared for AI-driven risks. Metrics like mean time to detect (ideally under one hour) and false positive rates (below 5%) are key benchmarks. Using frameworks like the HIMSS Cybersecurity Maturity Model, Cleveland Clinic achieved 98% regulatory compliance by comparing its performance with industry peers. Meanwhile, Kaiser Permanente's involvement in AI risk guilds led to a 25% reduction in third-party vulnerabilities [5][2][6]. These efforts demonstrate how benchmarking can drive measurable improvements in security readiness.
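The two benchmarks named above are straightforward to compute from incident and alert records. This sketch assumes each incident has a forensically determined start time and a first-alert time, and it interprets "false positive rate" as the share of triaged alerts judged benign. That is an assumption: the statistical false positive rate, FP / (FP + TN), is a different quantity.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Incident:
    started: datetime   # when malicious activity began (from forensics)
    detected: datetime  # when the first alert fired

def mean_time_to_detect(incidents):
    """Average gap between compromise and first detection."""
    total = sum((i.detected - i.started for i in incidents), timedelta())
    return total / len(incidents)

def false_positive_share(alert_verdicts):
    """Fraction of triaged alerts judged benign (True = real threat)."""
    return sum(1 for v in alert_verdicts if not v) / len(alert_verdicts)

def meets_benchmarks(incidents, alert_verdicts):
    """MTTD under one hour and false positives below 5%."""
    return (mean_time_to_detect(incidents) < timedelta(hours=1)
            and false_positive_share(alert_verdicts) < 0.05)

incidents = [
    Incident(datetime(2025, 6, 1, 9, 0), datetime(2025, 6, 1, 9, 30)),
    Incident(datetime(2025, 6, 2, 14, 0), datetime(2025, 6, 2, 15, 0)),
]
verdicts = [True] * 97 + [False] * 3
print(mean_time_to_detect(incidents), false_positive_share(verdicts))
print(meets_benchmarks(incidents, verdicts))
```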

Looking Ahead

Gartner forecasts that by 2027, 75% of enterprises will require hybrid oversight models, combining automation with expert validation to safeguard patient outcomes [12]. With AI threats like polymorphic ransomware expected to rise by 50% during the same period, healthcare organizations must implement frameworks that adapt continuously - ideally on a monthly basis [3][4]. This approach ensures that defenses remain agile and capable of addressing emerging challenges.

FAQs

What should we secure first when rolling out AI tools that touch PHI?

When using AI tools to manage Protected Health Information (PHI), it's crucial to focus on strong encryption, robust access controls, and real-time monitoring. Additionally, performing AI-specific privacy impact assessments can help uncover potential risks and implement measures to safeguard sensitive data effectively.

How can we reduce AI-driven phishing risk without slowing down clinical work?

Healthcare organizations can tackle AI-driven phishing threats by leveraging AI-powered email detection systems. These systems analyze email content, sender behavior, and embedded links in real time to block malicious messages before they ever reach clinicians. The result? High accuracy in threat detection while keeping disruptions to a minimum.

On top of that, training staff to spot sophisticated phishing methods - such as deepfake-based messages - adds another layer of protection. By blending advanced AI tools with staff education, organizations can maintain strong security measures without interfering with clinical workflows.
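Commercial AI email filters use trained models, but a few of the signals they weigh can be shown with simple heuristics: a lookalike sender domain, a link whose visible text shows one domain while pointing to another, and urgency language. Everything here is a hypothetical sketch. The internal domain, score weights, 0.85 similarity cutoff, and quarantine threshold are assumptions, and the regex-based link parsing is only suitable for illustration.

```python
import re
from difflib import SequenceMatcher
from urllib.parse import urlparse

INTERNAL_DOMAIN = "examplehealth.org"  # hypothetical organization domain
URGENCY = re.compile(r"\b(urgent|immediately|verify your account|password expires?)\b",
                     re.IGNORECASE)
ANCHOR = re.compile(r'<a\s+href="([^"]+)"[^>]*>([^<]+)</a>', re.IGNORECASE)
URL_LIKE = re.compile(r"(https?://)?[\w-]+(\.[\w-]+)+(/\S*)?")
QUARANTINE_THRESHOLD = 3  # hypothetical; tune against labeled mail

def _host(url):
    """Lower-cased hostname of a URL or bare domain."""
    if not url.lower().startswith(("http://", "https://")):
        url = "https://" + url
    return (urlparse(url).hostname or "").lower()

def phishing_score(sender, subject, body_html):
    score = 0
    sender_domain = sender.rsplit("@", 1)[-1].lower()
    # Lookalike sender domain, e.g. examp1ehealth.org instead of examplehealth.org
    if (sender_domain != INTERNAL_DOMAIN
            and SequenceMatcher(None, sender_domain, INTERNAL_DOMAIN).ratio() >= 0.85):
        score += 3
    # Link whose visible text shows one domain but whose href points elsewhere
    for href, text in ANCHOR.findall(body_html):
        text = text.strip()
        if URL_LIKE.fullmatch(text) and _host(text) != _host(href):
            score += 2
    if URGENCY.search(subject) or URGENCY.search(body_html):
        score += 1
    return score

score = phishing_score(
    "it-support@examp1ehealth.org", "Urgent: password expires today",
    '<a href="http://login-verify.example.net/reset">portal.examplehealth.org</a>')
print(score, score >= QUARANTINE_THRESHOLD)
```

Filtering at the gateway keeps the decision out of clinicians' inboxes entirely, which is how this approach avoids slowing down clinical work.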

What do the 2026 HIPAA security updates mean for AI logging and audits?

The 2026 HIPAA security updates are expected to introduce tighter requirements for healthcare organizations, particularly for managing AI tools and processes. Here's a breakdown of the key changes:

- Detailed AI Inventories: Organizations will need to maintain comprehensive records of all AI tools in use, ensuring transparency and accountability.

- Multi-Factor Authentication (MFA): Enforcing MFA will be mandatory to strengthen access controls for systems handling Protected Health Information (PHI).

- AI-Specific Risk Assessments: Healthcare entities will be required to conduct targeted risk assessments focused on potential vulnerabilities and risks associated with AI technologies.

- Real-Time AI Logging: Continuous, real-time logging of all AI interactions involving PHI will be necessary to track activities and ensure compliance.

These updates are designed to bolster security protocols and ensure sensitive healthcare data is handled with the utmost care.
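Real-time logging of AI interactions is easier to defend in an audit if the log is tamper-evident. The sketch below chains entries with SHA-256 so that editing an earlier entry breaks verification. The field names and class are hypothetical, the final rule's exact logging requirements may differ, and a chain only proves integrity if the latest hash is also anchored somewhere an attacker cannot rewrite (for example, write-once storage). Note that it records PHI categories, never the PHI itself.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64

def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

class AIInteractionLog:
    """Append-only log of AI interactions involving PHI, chained by SHA-256."""

    def __init__(self):
        self.entries = []

    def record(self, user, ai_tool, action, phi_categories):
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "ai_tool": ai_tool,
            "action": action,
            "phi_categories": sorted(phi_categories),  # categories only, never the PHI
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and check each link to the previous entry."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

log = AIInteractionLog()
log.record("dr_patel", "discharge-summary-assistant", "summarize_note",
           {"diagnosis", "medications"})
log.record("rn_lee", "triage-chatbot", "answer_question", {"symptoms"})
print(log.verify())
```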