Securing the Learning System: Cybersecurity for Adaptive AI in Healthcare

Post Summary

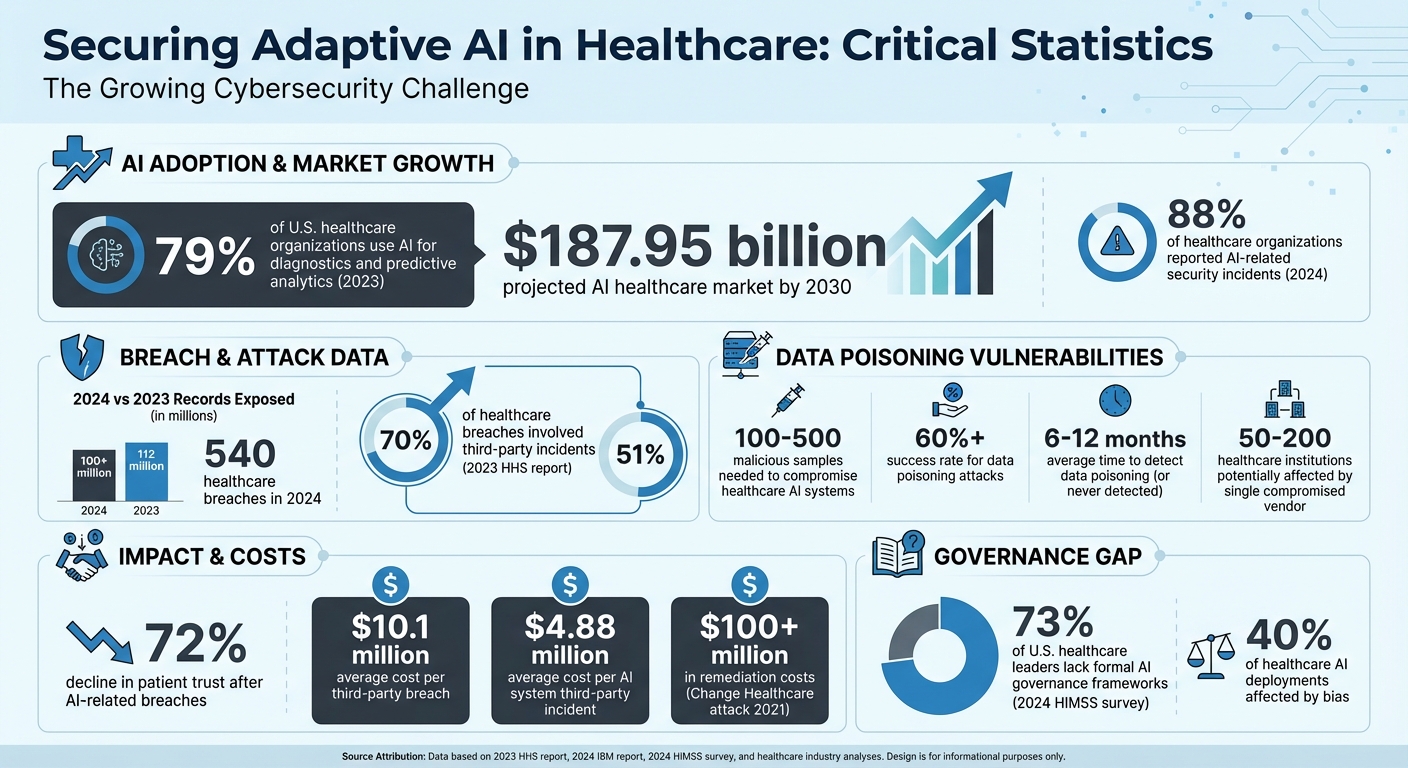

Healthcare is rapidly adopting AI systems, but cybersecurity risks are growing just as fast. By 2023, 79% of healthcare organizations in the U.S. used AI for tasks like diagnostics and predictive analytics. These systems, which continuously learn from patient data, improve clinical outcomes but also introduce vulnerabilities like data poisoning and adversarial attacks. Recent incidents highlight how compromised AI can lead to misdiagnoses, regulatory penalties, and loss of patient trust.

Key insights include:

- 79% of U.S. healthcare organizations use AI, and the healthcare AI market is expected to reach $187.95 billion by 2030.

- Data poisoning attacks can compromise AI with as few as 100 malicious samples.

- 540 healthcare breaches in 2024 exposed over 100 million records, many linked to AI vulnerabilities.

- Patient trust declines by 72% after breaches involving AI systems.

To address these risks, healthcare organizations must:

- Implement strong encryption (e.g., AES-256) and multi-factor authentication.

- Conduct adversarial robustness testing and monitor for model drift.

- Use zero-trust architecture and secure APIs to limit access points.

- Regularly audit third-party vendors and enforce compliance with frameworks like NIST AI RMF.

Cybersecurity for AI is not just about protecting systems - it's about safeguarding patient safety, maintaining trust, and ensuring compliance with strict regulations like HIPAA. Without these measures, healthcare AI systems remain vulnerable to attacks that can disrupt care and compromise sensitive data.

Cybersecurity Risks and Statistics for Adaptive AI in Healthcare 2023-2024



What Adaptive AI Systems Are and How They Work in Healthcare

Adaptive AI systems in healthcare stand out because they continuously learn and adjust as they process new patient data, unlike static systems that need manual updates. These systems often rely on architectures like reinforcement learning agents, large language models (LLMs), and federated learning systems. They integrate real-time data from sources such as Electronic Health Records (EHR), Laboratory Information Systems (LIS), and Internet of Medical Things (IoMT) devices, including insulin pumps and pacemakers. For example, an adaptive AI monitoring ICU patients could update its risk predictions based on the latest vital signs, lab results, and medication changes. This dynamic approach is reshaping how healthcare systems handle data and security.

Key Features of Adaptive AI in Healthcare

The hallmark of adaptive AI is its ability to evolve - its accuracy and reliability improve as it encounters new clinical scenarios [8]. For these systems to perform effectively, they require diverse, high-quality patient data. Specialties like radiology, oncology, and genomics benefit greatly from these systems, as they identify patterns that support predictive modeling. Many adaptive AI models function as "black boxes", meaning their outputs are based on statistical correlations rather than transparent, traceable rules.

However, this continuous learning creates unique challenges. Adaptive systems depend heavily on a steady flow of patient data across various demographics and disease types to maintain accuracy. Their integration with IoMT devices adds complexity; for instance, they can automatically adjust insulin dosages or pacemaker settings based on real-time data. This makes their reliability and security critical for patient safety [8]. Supporting these systems often requires specialized MLOps frameworks for tasks like automated retraining, version control, and deployment. Additionally, interoperability standards like HL7 FHIR ensure smooth data exchange with legacy IT systems. While these features improve clinical outcomes, they also introduce specific security risks.

Why Adaptive AI Systems Create New Security Risks

The same adaptive nature that makes these systems powerful also expands their vulnerability to attacks. Traditional cybersecurity focuses on preventing breaches, but an adaptive AI system can be compromised through data poisoning without any breach taking place. This occurs when attackers introduce malicious inputs - such as fake patient visits - into trusted clinical workflows. In November 2025, researchers Farhad Abtahi, Fernando Seoane, Iván Pau, and Mario Vega-Barbas highlighted a case where coordinated fake patient visits were used to compromise an AI's learning process without triggering conventional security alerts [7].

The statistics are concerning. Attackers need only 100–500 malicious samples to compromise healthcare AI systems, with data poisoning attacks achieving success rates exceeding 60% [7]. Even worse, detecting such attacks can take six to 12 months - or may never happen at all [7]. A single compromised vendor in the AI supply chain could potentially poison models used by 50 to 200 healthcare institutions simultaneously [7].

"Privacy laws such as HIPAA and GDPR can unintentionally shield attackers by restricting the analyses needed for detection" [7].

Additionally, the integration of adaptive AI with older hospital systems and outdated software creates further vulnerabilities, offering attackers more opportunities to exploit weaknesses across the digital ecosystem [8].

Finding Vulnerabilities in Adaptive AI Systems

This section dives into methods for detecting and analyzing vulnerabilities in adaptive AI systems, focusing on their evolving attack surfaces. Unlike traditional software, where security efforts target static code or network vulnerabilities, adaptive AI presents unique challenges. Its learning process continuously updates, creating new potential entry points for attacks. Healthcare organizations must scrutinize every stage of the AI lifecycle - from data collection to clinical workflows, model retraining, and deployment.

The first step is mapping all AI assets. This involves identifying every adaptive AI model, its data sources, and how it interacts with hospital systems. For example, tracing data from patient records through EHR systems, lab databases, and IoMT devices to the AI's training pipeline can reveal potential vulnerabilities. Each connection is a possible entry point for malicious data. Knowledge bases like MITRE ATLAS can help map out attack tactics and techniques specific to machine learning, such as evasion or discovery.

Adversarial robustness testing is another critical step. This involves simulating attacks to see how easily an AI system can be manipulated. For instance, subtle changes to medical images - undetectable to human radiologists - could cause the AI to misdiagnose a condition. Monitoring for model drift is equally important, as sudden performance changes may signal data poisoning.
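A robustness test of this kind can be sketched on a toy model. The example below is a minimal illustration, not a clinical tool: it assumes a hypothetical two-feature logistic-regression classifier with hand-picked weights and applies the fast gradient sign method (FGSM), one common adversarial perturbation, to measure how accuracy falls as the allowed perturbation `epsilon` grows. Every name and number in it is an assumption made for illustration.

```python
import math

# Hypothetical toy classifier: two features, hand-picked weights.
WEIGHTS = [2.0, -1.0]
BIAS = 0.0

# (features, true_label) pairs; values are illustrative only.
SAMPLES = [
    ([1.0, 0.0], 1),
    ([0.1, 0.0], 1),
    ([-1.0, 0.5], 0),
    ([-0.05, 0.0], 0),
]

def predict_proba(x):
    """Probability of the positive class under the toy logistic model."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))

def fgsm_perturb(x, label, epsilon):
    """Shift each feature by epsilon in the direction that increases the loss."""
    p = predict_proba(x)
    # For log-loss, the gradient with respect to feature i is (p - label) * w_i.
    grad = [(p - label) * w for w in WEIGHTS]
    return [xi + epsilon * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]

def robust_accuracy(samples, epsilon):
    """Accuracy when every sample is attacked with the given epsilon."""
    correct = 0
    for x, label in samples:
        x_adv = fgsm_perturb(x, label, epsilon)
        pred = 1 if predict_proba(x_adv) >= 0.5 else 0
        correct += int(pred == label)
    return correct / len(samples)

for eps in (0.0, 0.1, 1.0):
    print(f"epsilon={eps}: accuracy={robust_accuracy(SAMPLES, eps)}")
```

Tracking this curve across model versions gives a simple regression check: a retrained model whose accuracy collapses at a smaller `epsilon` than its predecessor deserves a closer look before deployment.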

The adaptive learning process itself requires close monitoring. Feedback loops, where the AI learns from new data, must be audited to ensure no unauthorized or unvalidated inputs influence the model. Research highlights significant vulnerabilities in healthcare AI systems related to data poisoning, which current defenses and regulations struggle to address [7]. Implementing MLSecOps practices can integrate security checks into the machine learning lifecycle, ensuring every model update is scanned for vulnerabilities before deployment.
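One way to audit a feedback loop is to accept only records that carry a valid signature from an approved source system. The sketch below assumes each upstream system signs its records with an HMAC-SHA256 key. The source names, keys, and record fields are hypothetical, and a real deployment would keep keys in a secrets manager rather than in code.

```python
import hashlib
import hmac
import json

# Hypothetical per-source signing keys (demo values only).
APPROVED_SOURCES = {
    "ehr-prod": b"demo-key-ehr",
    "lis-prod": b"demo-key-lis",
}

def _payload(record):
    # Canonical JSON so the same record always produces the same bytes.
    return json.dumps(record, sort_keys=True).encode("utf-8")

def sign_record(source, record):
    """Signature an approved source system would attach to a record."""
    key = APPROVED_SOURCES[source]
    return hmac.new(key, _payload(record), hashlib.sha256).hexdigest()

def accept_for_retraining(source, record, signature):
    """Admit a record to the retraining queue only if its signature verifies."""
    key = APPROVED_SOURCES.get(source)
    if key is None:
        return False  # unknown source: never feeds the model
    expected = hmac.new(key, _payload(record), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)

record = {"patient_id": "demo-001", "glucose_mg_dl": 104}
signature = sign_record("ehr-prod", record)
tampered = dict(record, glucose_mg_dl=400)
```

A signature check does not stop poisoned data that enters through a legitimate workflow, such as a fake patient visit, but it does ensure that every retraining input is attributable to a known system.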

Common Vulnerability Types in Adaptive AI Systems

Several vulnerability types pose significant risks to adaptive AI systems in healthcare:

- Data poisoning: This remains one of the biggest threats. Attackers inject malicious samples into training data or clinical workflows, compromising the model's integrity. Even a small number of poisoned samples can have a massive impact.

- Medical Scribe Sybil attacks: These attacks involve coordinated fake patient visits, introducing poisoned data through legitimate clinical documentation systems. No advanced technical skills are needed - attackers exploit routine workflows, bypassing traditional security measures.

- Architectural vulnerabilities: The type of AI system determines the specific risks. For example:

  - Convolutional Neural Networks (CNNs) used in imaging can be tricked with subtle pixel changes, leading to misdiagnoses.

  - Large Language Models (LLMs) in decision support tools may produce biased or harmful recommendations if their training data is compromised.

  - Reinforcement Learning agents managing resources like organ transplants or triage can be manipulated, resulting in biased or dangerous decisions.

- Infrastructure entry points: Distributed healthcare environments, such as those using federated learning, can obscure the source of malicious updates. Insiders with access to clinical systems can exploit these vulnerabilities without advanced skills.

- Supply chain weaknesses: A compromised vendor providing foundation models can introduce vulnerabilities across multiple healthcare institutions, potentially affecting hundreds of hospitals [7].

- Regulatory gaps: Privacy laws like HIPAA and GDPR, while protecting patient data, can limit the deep analyses needed to detect data poisoning. This creates challenges for security teams trying to investigate malicious patterns without violating patient privacy [7].

How Vulnerabilities Affect Healthcare Operations

The impact of AI vulnerabilities goes beyond data breaches, directly threatening patient safety and clinical workflows. A compromised diagnostic AI could recommend incorrect treatments, miss critical diagnoses, or misprioritize patients, leading to serious legal and ethical consequences.

Detection delays make these issues even worse. Data poisoning attacks in healthcare AI can go undetected for six to 12 months, during which the compromised system continues to make clinical decisions, potentially affecting countless patients. The distributed nature of healthcare data often complicates attribution, especially when attackers exploit legitimate access points.
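Routine distribution checks are one way to shorten that detection window. The sketch below computes the Population Stability Index (PSI) between a baseline set of model scores and a recent set. The bin count, the smoothing constant, and the 0.25 alert threshold are conventional rules of thumb assumed here, not values taken from the research cited above.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two samples of model scores."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Illustrative data: last quarter's scores versus a shifted recent window.
baseline = [i / 100 for i in range(100)]
recent = [min(0.99, v + 0.3) for v in baseline]

ALERT_THRESHOLD = 0.25  # assumed rule of thumb for a significant shift
drift_detected = psi(baseline, recent) > ALERT_THRESHOLD
```

A PSI alert does not prove poisoning, since case mix and seasonality also shift distributions, but it gives security and clinical teams a concrete trigger for investigation.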

Resource allocation systems are particularly vulnerable. Manipulation of AI systems managing organ transplants or triage decisions can result in biased outcomes, denying critical care to certain patient groups. Such errors could have life-or-death consequences.

System-wide corruption is another major risk. In federated learning environments, a single attack can spread poisoned models across multiple institutions, influencing decisions at several hospitals. The decentralized nature of these systems makes it almost impossible to trace the source of the attack.
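When attribution is hard, an aggregator can at least limit how much any single participant moves the shared model. The sketch below contrasts plain averaging with a coordinate-wise median, one robust aggregation rule from the federated learning literature. It is a swapped-in illustration rather than a technique named in this article, and the update vectors are made-up numbers.

```python
from statistics import mean, median

def aggregate(updates, reducer):
    """Combine per-site update vectors coordinate by coordinate."""
    return [reducer(coords) for coords in zip(*updates)]

# Three honest sites and one poisoned site (illustrative values).
site_updates = [
    [0.10, -0.20],
    [0.12, -0.18],
    [0.11, -0.22],
    [5.00, 4.00],   # malicious update
]

mean_update = aggregate(site_updates, mean)
median_update = aggregate(site_updates, median)
```

With these numbers the averaged first coordinate is pulled above 1.0 by the single outlier, while the median stays near the honest sites' values. Median aggregation tolerates only a minority of bad participants, so it complements vendor and site vetting rather than replacing it.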

Matching Vulnerabilities to Mitigation Strategies

To address these vulnerabilities, healthcare organizations need targeted strategies. Below is a table matching common vulnerabilities to recommended mitigation approaches:

| Vulnerability Type | How it Appears in Adaptive AI | Recommended Mitigation Strategy |
|---|---|---|
| Adversarial Attacks | Subtle changes to medical images causing misdiagnoses. | Adversarial training and input denoising. |
| Data Poisoning | Fake patient visits poisoning clinical workflows. | Ensemble-based detection and data sanitization. |
| Supply Chain Weakness | Malicious code in vendor foundation models. | Vendor audits and mandatory adversarial robustness testing. |
| Infrastructure Weakness | Federated learning obscuring malicious updates. | Privacy-preserving security mechanisms and attribution tracking. |
| Architectural Flaws | Manipulation of RL agents in resource allocation. | Interpretable models with safety guarantees. |
| Weak Governance | Lack of oversight on model updates and data use. | Role-based access control (RBAC) and centralized risk management. |
| Legacy System Gaps | Modern AI interacting with outdated, insecure databases. | Secure API gateways and end-to-end encryption. |

To strengthen defenses, healthcare organizations should combine multiple measures like adversarial testing, ensemble detection techniques, and privacy-preserving security. Requiring a "human-in-the-loop" for high-stakes decisions ensures clinicians validate AI outputs that deviate from established norms. Automated risk assessment tools can evaluate third-party vendors for compliance with cybersecurity standards. Additionally, differential privacy techniques can help prevent exposing sensitive patient data through model inversion attacks.
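A human-in-the-loop rule can be expressed as a small routing check. The sketch below assumes each prediction carries a score from the live adaptive model and a score from a frozen reference (for example, a validated earlier model version or a guideline-based calculator). The field names and the 0.2 deviation limit are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Prediction:
    risk_score: float       # output of the live adaptive model, in [0, 1]
    reference_score: float  # output of a frozen reference, in [0, 1]
    high_stakes: bool       # e.g. triage or treatment-changing decisions

def needs_clinician_review(pred, max_deviation=0.2):
    """Route high-stakes outputs that stray from the reference to a clinician."""
    deviation = abs(pred.risk_score - pred.reference_score)
    return pred.high_stakes and deviation > max_deviation

routine = Prediction(risk_score=0.31, reference_score=0.28, high_stakes=True)
divergent = Prediction(risk_score=0.85, reference_score=0.30, high_stakes=True)
low_stakes = Prediction(risk_score=0.85, reference_score=0.30, high_stakes=False)
```

Logging every routed case also creates a labeled record of where the adaptive model and the reference disagree, which is useful evidence when investigating suspected poisoning.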

Security Measures for Adaptive AI Systems

Securing adaptive AI in healthcare requires a mix of technical and procedural safeguards that operate in real time. These measures are essential to protect both the AI systems and the sensitive patient data they handle.

The foundation of these safeguards lies in strong authentication and encryption protocols. Multi-factor authentication (MFA) is a must, leveraging methods like biometrics, hardware tokens, or authenticator apps to secure access points. Following NIST guidelines, implementing role-based access control (RBAC) ensures that only authorized clinicians can access patient-specific AI outputs, reducing breach risks by as much as 99% [1]. Encryption should be applied at every stage using techniques like AES-256, TLS 1.3, and homomorphic encryption. A prime example is Mayo Clinic's encrypted federated learning approach, which allows AI training without exposing raw patient data, all while staying HIPAA-compliant [2]. For cloud-stored model weights, such as those on AWS S3, envelope encryption with customer-managed keys adds an extra layer of security.

Below are specific strategies for authentication, incident response, and risk management tailored to adaptive AI systems.

Authentication, Encryption, and Access Control

Granular access controls are critical for safeguarding AI systems, especially given their continuous learning capabilities and evolving vulnerabilities. A zero-trust architecture is essential, requiring ongoing verification for every access request. This approach treats all requests as potentially malicious. For added security, just-in-time privileges provide temporary access for specific tasks, with permissions automatically revoked afterward. For instance, using dynamic secrets in AI pipelines can limit lateral movement during a breach [3].
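Just-in-time privileges amount to grants that expire on their own. The following is a minimal in-memory sketch with hypothetical user and resource names. A production system would rely on an identity provider or secrets manager instead of a Python dictionary.

```python
import time

class JitGrants:
    """Temporary access grants that lapse after a time-to-live."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock   # injectable so expiry can be tested
        self._grants = {}     # (user, resource) -> expiry timestamp

    def grant(self, user, resource, ttl_seconds):
        self._grants[(user, resource)] = self._clock() + ttl_seconds

    def is_allowed(self, user, resource):
        expiry = self._grants.get((user, resource))
        if expiry is None:
            return False
        if self._clock() >= expiry:
            del self._grants[(user, resource)]  # revoke automatically
            return False
        return True

# Usage with a fake clock so the example is deterministic.
now = [0.0]
grants = JitGrants(clock=lambda: now[0])
grants.grant("ml-engineer-7", "training-data-bucket", ttl_seconds=900)
allowed_during_task = grants.is_allowed("ml-engineer-7", "training-data-bucket")
now[0] = 901.0
allowed_after_expiry = grants.is_allowed("ml-engineer-7", "training-data-bucket")
```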

Attribute-based access control (ABAC) takes this a step further by factoring in elements like device trust, location, and context. For example, if a radiologist tries to access an AI imaging system from an unknown device, additional verification steps might be triggered. Micro-segmentation further minimizes risk by isolating breaches to specific components. In 2024, healthcare organizations that implemented encrypted AI pipelines reported a 67% drop in unauthorized access incidents, with encryption protocol adoption reaching 94% [10].
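The radiologist scenario can be written as a small ABAC decision function. This is a sketch under assumed attribute names and an assumed policy table, not a reference to any particular product's policy language.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AccessRequest:
    role: str
    resource: str
    device_trusted: bool
    on_hospital_network: bool

# Hypothetical policy: which roles may use which AI resource.
ALLOWED_ROLES = {"imaging-ai": {"radiologist"}}

def decide(request):
    """Return 'deny', 'step_up' (extra verification), or 'allow'."""
    if request.role not in ALLOWED_ROLES.get(request.resource, set()):
        return "deny"
    if not (request.device_trusted and request.on_hospital_network):
        return "step_up"
    return "allow"

known_device = AccessRequest("radiologist", "imaging-ai", True, True)
unknown_device = AccessRequest("radiologist", "imaging-ai", False, True)
wrong_role = AccessRequest("billing-clerk", "imaging-ai", True, True)
```

Returning a distinct `step_up` outcome, rather than a plain deny, keeps clinicians working while still forcing additional verification when context looks unusual.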

AI-Specific Incident Response and Monitoring

Traditional incident response plans often fall short when addressing AI-specific risks, such as model poisoning or data drift. To fill this gap, healthcare organizations should align their efforts with the NIST AI Risk Management Framework and apply the standard incident response phases to their AI assets: preparation, identification, containment, eradication, recovery, and post-incident analysis.

- Preparation involves crafting threat models tailored to different AI applications, like diagnostic imaging or resource allocation.

- Identification relies on anomaly detection tools to flag unusual model behavior, such as sudden performance drops or unexpected predictions. Real-time monitoring is key to spotting anomalies in data inputs, model drift, or adversarial attacks. For example, Microsoft Sentinel (formerly Azure Sentinel) integrates behavioral analytics to catch irregularities early [9].

- Supporting tools: Log analytics platforms like Splunk or the ELK Stack can continuously collect and search system and model telemetry, while libraries like CleverHans help test models for adversarial robustness. Cleveland Clinic demonstrated the effectiveness of these measures by isolating an evasion attack on its imaging AI system within 15 minutes, avoiding data loss and restoring operations quickly [4].

Containment strategies include quarantining affected models and switching to backup versions while investigations are underway. Automated model versioning allows quick rollbacks to clean states, ensuring minimal disruption to clinical workflows. Eradication involves retraining models with sanitized data and validating their integrity through adversarial testing. Gartner suggests weekly vulnerability scans, a practice that helped a U.S. health network in 2025 identify 40% more vulnerabilities before deployment [5]. Regular red-teaming exercises also prepare security teams for real-world incidents and reveal potential weaknesses.
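Quarantine and rollback are easier when every model version is registered with a content hash. The sketch below is a minimal in-memory registry with hypothetical version names. Real MLOps platforms offer equivalent features, and nothing here reflects a specific product's API.

```python
import hashlib

class ModelRegistry:
    """Versioned model records with quarantine and integrity checks."""

    def __init__(self):
        self._digests = {}         # version -> SHA-256 of the weights
        self._order = []           # versions in registration order
        self._quarantined = set()

    def register(self, version, weights: bytes):
        self._digests[version] = hashlib.sha256(weights).hexdigest()
        self._order.append(version)

    def verify(self, version, weights: bytes):
        """True only if the weights match what was registered."""
        return self._digests.get(version) == hashlib.sha256(weights).hexdigest()

    def quarantine(self, version):
        self._quarantined.add(version)

    def active_version(self):
        """Newest version that has not been quarantined, or None."""
        for version in reversed(self._order):
            if version not in self._quarantined:
                return version
        return None

registry = ModelRegistry()
registry.register("v1", b"clean-weights")
registry.register("v2", b"suspect-weights")
registry.quarantine("v2")  # containment: serving falls back to v1
```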

Using Censinet RiskOps™ for Cybersecurity Management

Centralized risk management platforms like Censinet RiskOps™ simplify AI security by streamlining processes across healthcare organizations. This platform automates third-party risk assessments using standardized questionnaires and AI-driven analytics, cutting assessment times from weeks to days. For instance, a California health system using Censinet RiskOps™ avoided a potential breach that could have affected 500,000 patient records while keeping its adaptive AI models secure [6].

Censinet RiskOps™ offers real-time risk scoring aligned with HITRUST standards, helping organizations prioritize vulnerabilities. Its collaborative workflows let healthcare teams work directly with vendors to address issues, with progress tracked through automated dashboards. The platform's AI capabilities also speed up risk assessments by summarizing evidence and identifying fourth-party risks in seconds. With human oversight guiding automation, critical decisions remain in expert hands. Major U.S. hospital networks have reported a 60% boost in efficiency using Censinet RiskOps™ for vendor management. Its AI risk dashboard serves as a centralized hub for managing policies, risks, and tasks - acting as a control center for AI governance.

Governance and Ethical Oversight for AI Security

In healthcare, adaptive AI systems require structured governance and ethical oversight to address the ever-changing landscape of cybersecurity threats. Without formal frameworks, organizations face risks like model drift, unauthorized updates, and data breaches, all of which can jeopardize patient data. A 2024 HIMSS survey revealed that 73% of U.S. healthcare leaders lack formal AI governance frameworks, leaving them vulnerable to both ethical and security issues. Governance ensures consistent security policies, accountability, and compliance with regulations such as HIPAA.

The nature of adaptive AI, which evolves through continuous learning, makes governance indispensable. Traditional security measures alone can't keep up with these dynamic systems. A governance framework provides structure by defining roles, documenting processes, and tracking performance, which helps mitigate random vulnerabilities. It also ties cybersecurity to ethical considerations, addressing issues like bias and transparency that could otherwise weaken AI systems. Here’s how healthcare organizations can create a strong governance framework and tackle ethical challenges to secure adaptive AI systems.

Building a Governance Framework

Developing a robust governance framework requires collaboration across IT, clinical, legal, and data science teams. This can include conducting risk assessments, using standardized templates, and holding regular coordination meetings - such as monthly guilds - to align security efforts, share knowledge, and streamline workflows from AI model development to deployment. Breaking down silos ensures that security practices remain consistent across the organization.

Key elements of a governance framework include:

- Documented processes: Clear risk assessment and mitigation protocols, along with stakeholder responsibilities, ensure accountability.

- Centralized tools: Version control tools and templates help maintain standards during AI model reviews. For instance, project managers oversee timelines, executives approve decisions, and clinicians validate security measures to avoid disrupting care delivery.

- Collaborative platforms: Tools like Asana or Slack facilitate feedback loops, enabling teams to quickly adapt to emerging threats.

Regulatory compliance is a cornerstone of governance. Organizations must integrate checklists for HIPAA, FDA guidelines, and the NIST AI Risk Management Framework (introduced in January 2023) into their workflows. The NIST AI RMF provides a voluntary framework tailored to high-stakes sectors like healthcare, addressing trust, ethics, and security [11]. Regular audits with measurable metrics - such as encryption rates or response times - ensure compliance. Living documentation that tracks changes creates an audit trail, demonstrating adherence to regulations.
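An audit trail is more convincing when it is tamper-evident. One simple approach, sketched here with hypothetical event strings, chains each log entry to the hash of the previous one so that any later edit breaks verification.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry

def _entry_hash(event, prev_hash):
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()

def append_entry(log, event):
    """Append an event linked to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _entry_hash(event, prev_hash)})

def verify_chain(log):
    """Recompute every hash; any edited or reordered entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True

audit_log = []
append_entry(audit_log, "model v2 approved by governance committee")
append_entry(audit_log, "retraining dataset 2025-03 validated")
append_entry(audit_log, "model v2 deployed to imaging service")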

Ethical Considerations in AI Deployment

Ethical oversight plays a critical role in cybersecurity by exposing hidden biases and vulnerabilities in adaptive AI systems. For example, bias in AI models affects 40% of healthcare deployments, creating opportunities for attackers to exploit weaknesses through adversarial inputs. Manipulated medical images, for instance, can deceive diagnostic AI systems at inference time, and if such images are fed back into retraining they can also poison the model and compromise its integrity. Regular bias audits using tools like IBM AI Fairness 360, combined with penetration testing, can link ethical issues to potential cyber risks.

Patient consent is another area where ethics and cybersecurity intersect. Adaptive AI systems that retrain on unauthorized data risk violating patient privacy and creating exploitable vulnerabilities. To address this, organizations must implement detailed consent protocols that specify how AI systems use data. These protocols should ensure HIPAA compliance and document consent throughout workflows. A privacy-by-design approach, featuring strong access controls and encryption, helps safeguard patient data.

Transparency in AI decision-making is crucial for building trust and enhancing security. Explainable AI techniques - such as model cards that document training data and decision-making processes - make it easier to monitor for issues like tampering or hidden backdoors. As states like California move toward requiring transparency disclosures for healthcare AI by 2026, this is becoming not only a regulatory requirement but also a best practice for security.

Practical steps for implementation include setting up ethics review boards for pre-deployment evaluations, using modular templates for bias and consent documentation, and forming cross-functional committees. For example, AI governance committees with clearly defined roles can meet quarterly to review adaptive AI models and address emerging security and ethical concerns.

Third-Party Risk Management for AI in Healthcare

Healthcare organizations often depend on third-party vendors to develop and maintain AI systems rather than building them entirely in-house. These vendors include AI algorithm developers, cloud service providers, medical device manufacturers, and data processing platforms. However, each vendor introduces potential risks that could compromise patient data or the integrity of AI models. According to a 2023 HHS report, 70% of healthcare breaches involved third parties, and the 2024 IBM Cost of a Data Breach Report found that third-party incidents accounted for 51% of healthcare breaches, with costs averaging $10.1 million per breach [1].

The risks are even greater for adaptive AI systems, which continuously learn from vendor data feeds. If a vendor is compromised, malicious data can be introduced without detection. For instance, the 2024 Change Healthcare ransomware attack, which originated through a third-party vendor, disrupted adaptive billing AI systems and exposed 1 in 3 U.S. patient records. The attack resulted in over $100 million in remediation costs and led to inaccurate treatment recommendations when AI models lost access to clean training data [3].

The problem doesn’t stop with direct vendors. It extends to "Nth parties" - the cloud providers, API services, and data processors that support those vendors. Traditional manual risk assessments, like using spreadsheets, are too slow to handle this complex web of dependencies. As Matt Christensen, Sr. Director GRC at Intermountain Health, explains:

"Healthcare is the most complex industry... You can't just take a tool and apply it to healthcare if it wasn't built specifically for healthcare" [13].

Let’s delve into how to rigorously evaluate vendor security practices.

Assessing Vendor Security Practices

A thorough evaluation of vendor security requires more than a simple compliance checklist. Start by confirming certifications like SOC 2 Type II and ISO 27001, then dig deeper into AI-specific safeguards. Ask for evidence of adversarial robustness testing, model integrity checks, and data provenance tracking. For adaptive AI vendors, inquire about model retraining frequency, access controls for training data, and measures to prevent model poisoning.

Leverage standardized frameworks for a comprehensive evaluation. NIST SP 800-53 offers criteria for control assessments, while HITRUST provides benchmarks tailored to healthcare. The NIST AI Risk Management Framework, introduced in January 2023, addresses key considerations like trust, ethics, and security for AI in high-stakes environments [11]. Vendor questionnaires should cover encryption standards (minimum AES-256), role-based access control, penetration testing schedules, and incident response agreements.

Practical steps include reviewing third-party audit reports, conducting on-site evaluations, and running breach simulation exercises. Contracts should include clauses that allow system audits and require vendors to notify you within 24 hours of any security incidents. Vendors should also be categorized by their access to Protected Health Information (PHI) and their impact on clinical workflows. Those with "Critical" or "High" risk ratings should undergo annual reassessments at minimum [12].
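Vendor categorization can be captured in a few lines of policy code. The sketch below assumes a three-level clinical impact scale and a 24-month interval for lower tiers. Both are illustrative choices, and only the annual minimum for "Critical" and "High" vendors comes from the guidance above.

```python
def vendor_tier(handles_phi, clinical_impact):
    """Tier a vendor by PHI access and clinical impact.

    clinical_impact is 'none', 'indirect', or 'direct' (hypothetical scale).
    """
    if handles_phi and clinical_impact == "direct":
        return "Critical"
    if handles_phi or clinical_impact == "direct":
        return "High"
    if clinical_impact == "indirect":
        return "Medium"
    return "Low"

def reassessment_interval_months(tier):
    """Annual reassessment for Critical/High; 24 months is an assumed default."""
    return 12 if tier in ("Critical", "High") else 24

# Illustrative vendors.
imaging_ai = vendor_tier(handles_phi=True, clinical_impact="direct")
scheduling_tool = vendor_tier(handles_phi=False, clinical_impact="indirect")
```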

Automated platforms can simplify this process. For example, the Censinet Risk Network includes over 50,000 pre-assessed vendors and products, offering immediate insights into vendor risk profiles [12]. Delta-based reassessments, which focus only on changes in vendor responses, can cut reassessment time to under a day [12].
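The idea behind a delta-based reassessment can be shown with a plain dictionary comparison. This sketch is not Censinet's implementation. The question keys and answers are hypothetical, and the function simply reports answers that were changed, added, or removed since the last assessment.

```python
def questionnaire_delta(previous, current):
    """Map each changed question to its (old_answer, new_answer) pair."""
    delta = {}
    for question in sorted(set(previous) | set(current)):
        before, after = previous.get(question), current.get(question)
        if before != after:
            delta[question] = (before, after)
    return delta

previous = {
    "encryption_at_rest": "AES-256",
    "mfa_enforced": "yes",
    "pen_test_frequency": "annual",
}
current = {
    "encryption_at_rest": "AES-256",
    "mfa_enforced": "no",
    "pen_test_frequency": "annual",
    "model_retraining_frequency": "weekly",
}

changes = questionnaire_delta(previous, current)
```

Reviewers then look only at the entries in `changes`, here the MFA regression and the newly disclosed retraining schedule, instead of rereading the whole questionnaire.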

Collaborative Risk Management Strategies

Managing third-party risks effectively requires more than periodic audits - it demands ongoing collaboration. Establish joint security operations centers (SOCs) with key vendors to share threat intelligence in real time. Service level agreements (SLAs) should mandate quarterly risk assessments and define protocols for data handling, model updates, and incident responses.

Cloud-based risk exchange platforms streamline this process by enabling vendors to complete assessments once and share results with multiple healthcare organizations. This creates a "Cybersecurity Data Room™", as Censinet calls it, which tracks a vendor’s security posture over time [12]. Terry Grogan, CISO at Tower Health, noted:

"Censinet RiskOps allowed 3 FTEs to go back to their real jobs! Now we do a lot more risk assessments with only 2 FTEs required" [13].

Integrating vendor APIs with zero-trust architecture ensures continuous monitoring of data flows. Automated alerts for vendor breaches eliminate the need for manual monitoring, enabling faster responses. According to the 2024 Ponemon Institute report, 62% of healthcare organizations experienced a third-party breach involving AI systems, with average costs of $4.88 million per incident [3]. Automated notifications can reduce response times from days to hours.

Regular workshops with vendors can align security protocols and address new threats. Shared platforms can be used to co-develop corrective action plans (CAPs) and transparently track progress. This collaborative approach turns vendor relationships into strategic partnerships, fostering shared accountability for safeguarding adaptive AI systems.

Conclusion

Adaptive AI is reshaping healthcare, but it also introduces cybersecurity risks that go beyond what traditional defenses can handle. In 2024, 88% of healthcare organizations reported AI-related security incidents, with adaptive models particularly vulnerable to poisoning attacks and data breaches. The numbers are staggering - 112 million healthcare records were exposed in 2023 alone. These challenges demand more than conventional security measures [14][15].

Protecting adaptive AI systems requires a multi-layered approach. This includes identifying vulnerabilities (like adversarial and supply chain risks), enforcing robust authentication and encryption protocols, establishing clear governance structures, and rigorously evaluating vendor risks. Proactive monitoring and incident response are also critical pieces of the puzzle. Together, these measures create a solid foundation for securing AI in healthcare.

But technical defenses alone aren’t enough. To turn these strategies into actionable results, healthcare organizations must embrace specialized solutions like Censinet RiskOps™. Traditional manual processes and generic tools simply can’t handle the intricacies of AI risk management. Censinet RiskOps™ addresses these challenges with features like AI-powered risk intelligence, continuous visibility through its Cybersecurity Data Room™, and automated Corrective Action Plans. Its expansive Risk Network - featuring over 50,000 pre-assessed vendors - helps organizations stay ahead of evolving threats [13].

The gap between AI adoption and effective governance continues to grow. However, healthcare leaders who implement proactive frameworks, invest in dedicated platforms, and build strong vendor partnerships will be better equipped to safeguard patient data while leveraging the transformative power of adaptive AI. The future of healthcare AI security depends on shifting from reactive measures to operational excellence - turning risk management into a strategic advantage.

FAQs

How can hospitals spot AI data poisoning early?

Hospitals can stay ahead of AI data poisoning by keeping a close eye on their training data. This means looking out for unusual patterns, such as sudden shifts, unexpected outliers, or labels that don't seem to make sense. Setting up validation pipelines and incorporating multiple layers of detection - like ensemble methods or adversarial testing - adds another line of defense. On top of that, regular audits and real-time monitoring of data sources play a crucial role in spotting and addressing potential risks before they can harm the performance of AI models in healthcare.
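As a small example of screening for unexpected outliers, the sketch below flags values whose modified z-score, based on the median absolute deviation, exceeds a cutoff. The 3.5 cutoff and the 0.6745 scaling constant are common conventions assumed here, and the glucose readings are invented.

```python
from statistics import median

def mad_outliers(values, threshold=3.5):
    """Indices of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]

# Illustrative fasting glucose readings (mg/dL) queued for retraining.
readings = [98, 102, 100, 101, 99, 100, 400]
suspicious = mad_outliers(readings)
```

Flagged records should go to review rather than being dropped automatically, since a genuine but extreme clinical value looks the same as a poisoned one to this check.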

What AI systems in healthcare are highest-risk?

The riskiest AI systems in healthcare are those that directly affect patient safety, data integrity, and operational reliability. Machine learning models used for diagnostics, clinical decision-making, and managing resources are particularly susceptible to threats like data poisoning or model manipulation. These vulnerabilities can result in serious consequences, such as misdiagnoses or harmful treatment recommendations.

AI systems operating in interconnected settings - like Internet of Medical Things (IoMT) devices and federated learning models - face extra challenges. For example, supply chain breaches can compromise both patient safety and the security of healthcare organizations.

How do you secure AI vendors and their supply chain?

Protecting patient data and ensuring the integrity of AI systems in healthcare requires a solid risk management approach. Here are some essential steps to consider:

- Evaluate vendor security practices: Take a close look at how vendors handle data security. This includes their protocols for data encryption, access controls, and incident response plans.

- Ensure regulatory compliance: Verify that vendors comply with healthcare regulations like HIPAA, which sets strict standards for protecting patient information.

- Validate AI models: Confirm that the AI models being used are accurate, reliable, and free from bias.

- Establish detailed agreements: Create clear contracts, such as Business Associate Agreements (BAAs) and Service Level Agreements (SLAs), to define responsibilities and expectations.

- Conduct regular audits: Periodically review vendor practices to ensure they continue to meet security and compliance requirements.

- Assess certifications: Look for certifications like SOC 2 and HITRUST, which demonstrate a vendor’s commitment to maintaining high security standards.

To streamline these efforts, automated tools can be incredibly useful. They can help identify vulnerabilities, monitor risks, and ensure vendors remain accountable. By following these steps, healthcare organizations can better safeguard patient data and maintain trust in their AI systems.