Trust But Verify: Building Accountability Into Healthcare AI Systems

Post Summary

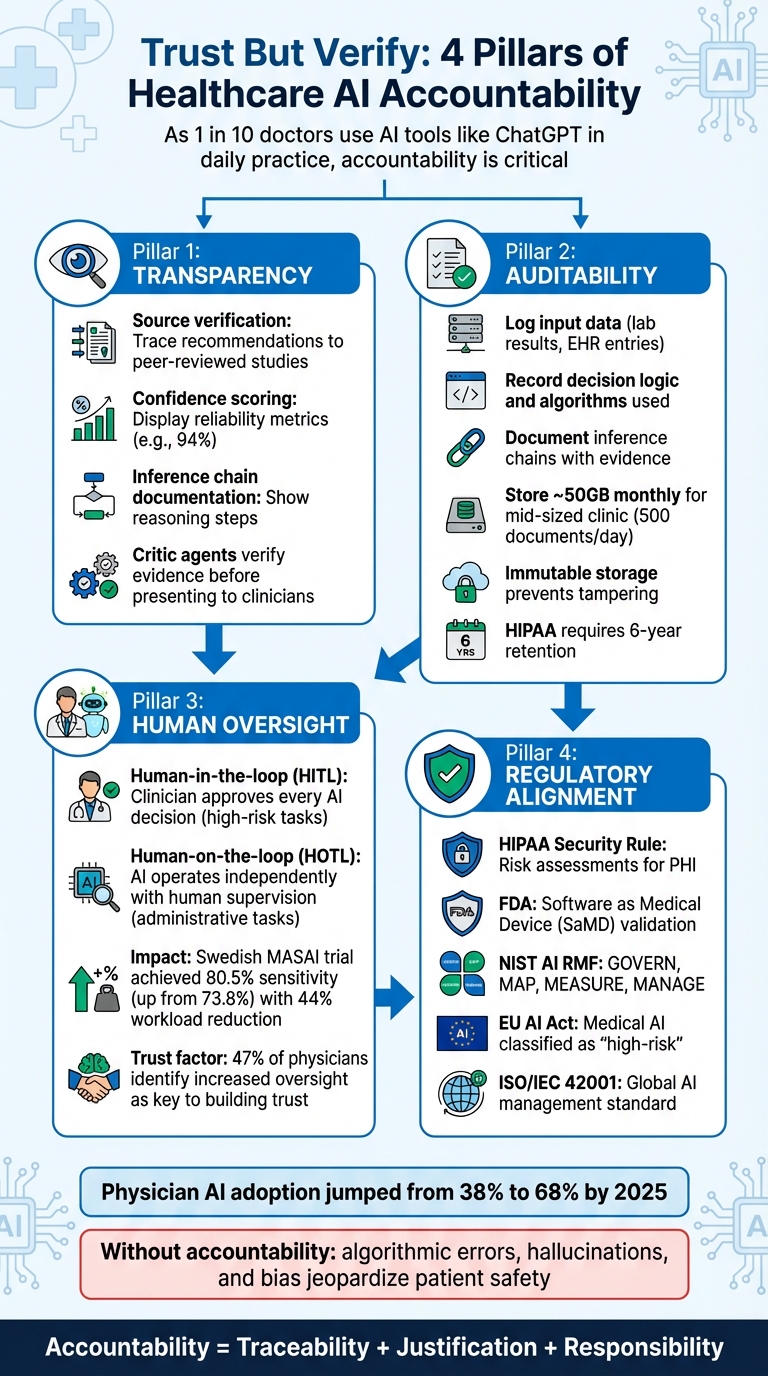

Healthcare AI is transforming clinical workflows but raises critical questions about safety and accountability. As of 2024, 1 in 10 doctors were using AI tools like ChatGPT in daily practice. The challenge? Ensuring these systems make reliable, explainable decisions. This article explores strategies to build accountability into healthcare AI, focusing on:

- Transparency: AI recommendations must be traceable to verified medical sources.

- Auditability: Detailed logs should document every decision step, from input data to outcomes.

- Human Oversight: Clinicians must remain involved, especially for high-risk decisions.

- Regulatory Alignment: Compliance with frameworks like the EU AI Act and FDA guidelines is non-negotiable.

Without these safeguards, risks like algorithmic errors, hallucinations, and bias can jeopardize patient safety and trust. Learn how to integrate accountability measures into healthcare AI to meet regulatory standards and empower clinicians.

4 Key Strategies for Building Accountability into Healthcare AI Systems

Risks and Accountability Gaps in Healthcare AI

This section examines how accountability gaps in healthcare AI can jeopardize patient safety and trust.

What Accountability Means for AI Systems

In the context of healthcare AI, accountability ensures a clear and traceable chain of responsibility for every action - whether it's the data the system processes or the recommendations it generates. As Roberts explains, "Accountability is the obligation to answer for one's actions or inaction and to be responsible for their consequences" [4]. This concept is especially critical when AI systems influence clinical decisions that directly impact patient outcomes.

For accountability to work in practice, three key elements are required: traceability (following each output back to its inputs and sources), justification (providing evidence-based explanations for decisions), and responsibility (clearly identifying who is accountable when issues arise). The complexity here lies in the joint accountability model, where developers, healthcare institutions, and clinicians share responsibility for the outcomes of AI use [4]. When an AI system makes a mistake, these stakeholders must collaborate to investigate and resolve the issue. Without these accountability mechanisms in place, patient safety and trust are at risk.

How Accountability Gaps Affect Patient Safety and Trust

When accountability is unclear, the consequences can be severe. One major issue is algorithmic opacity, which makes it difficult - or even impossible - for clinicians to understand how an AI system arrived at its recommendation [4][2]. This lack of transparency forces healthcare providers into a difficult position: either trust the system without question or dismiss its guidance entirely.

Another risk comes from hallucinations in generative AI and large language models (LLMs). These systems can produce false but authoritative-sounding information, leading to inaccurate clinical recommendations [2]. Without proper verification, clinicians might unknowingly act on flawed output, putting patients at risk. Equally concerning is the amplification of bias. AI systems trained on incomplete or skewed datasets can worsen existing disparities in healthcare, such as underdiagnosing conditions in underserved communities or misdiagnosing individuals with certain skin tones [5].

Accountability gaps also create confusion over who takes ownership when AI-related errors occur. This ambiguity often leads to "scapegoating", where stakeholders shift blame instead of addressing the root cause [4]. Such dynamics erode trust at every level: clinicians may grow skeptical of AI tools they can't verify, patients may lose faith in AI-assisted care, and healthcare organizations may struggle to comply with regulations. Ultimately, these gaps hinder the adoption of AI technologies that could otherwise benefit patients, underscoring the need for strategies to manage third-party AI risk.

Strategies for Building Accountability Into Healthcare AI

Healthcare organizations need to prioritize accountability when designing and operating AI systems. The strategies below provide practical ways to weave accountability into AI workflows, ensuring these systems operate transparently and integrate smoothly into clinical practices.

Using Transparent AI Design Principles

Start with source verification. Every recommendation made by an AI system should be traceable to reliable sources, like peer-reviewed studies or established clinical guidelines. This transforms AI from being a "black box" into a tool that healthcare professionals can trust [2].
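As a minimal sketch of source verification, the Python below rejects any recommendation that cites nothing or cites a source outside an approved registry. The registry contents, the identifier format, and the `Recommendation` and `is_traceable` names are hypothetical; a real system would check against a curated, versioned index of guidelines and literature.

```python
from dataclasses import dataclass, field

# Hypothetical registry of vetted sources (guidelines, peer-reviewed studies).
APPROVED_SOURCES = {"guideline:example-001", "pubmed:example-002"}


@dataclass
class Recommendation:
    text: str
    cited_sources: list[str] = field(default_factory=list)


def is_traceable(rec: Recommendation, approved: set[str] = APPROVED_SOURCES) -> bool:
    """True only if the recommendation cites at least one source and
    every cited source appears in the approved registry."""
    if not rec.cited_sources:
        return False  # an uncited recommendation is a "black box" output
    return all(src in approved for src in rec.cited_sources)


print(is_traceable(Recommendation("Start empiric antibiotics", ["guideline:example-001"])))  # True
print(is_traceable(Recommendation("Start empiric antibiotics", ["blog:unvetted"])))           # False
```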

Another key feature is confidence scoring. By assigning a confidence score (e.g., 94%) to each decision, AI systems allow clinicians to gauge how much they should rely on the recommendation. For decisions with low confidence, real-time alerts can prompt immediate human review before any action is taken [1].
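A confidence gate can be sketched as a simple threshold check. The 0.80 cutoff and the `route` function are assumptions for illustration; the article does not specify a threshold, and in practice it would be set per use case and risk level.

```python
from dataclasses import dataclass

# Assumed cutoff for illustration; not specified in the article.
REVIEW_THRESHOLD = 0.80


@dataclass
class ScoredDecision:
    label: str
    confidence: float  # model confidence in [0, 1], e.g. 0.94 for "94%"


def route(decision: ScoredDecision, threshold: float = REVIEW_THRESHOLD) -> str:
    """Send low-confidence decisions to a clinician before any action is taken."""
    if not 0.0 <= decision.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if decision.confidence < threshold:
        return "human_review"        # raise a real-time alert for review
    return "present_with_score"      # show the recommendation with its score


print(route(ScoredDecision("pneumonia", 0.94)))  # present_with_score
print(route(ScoredDecision("pneumonia", 0.55)))  # human_review
```

A score like 0.94 is only useful for this kind of routing if the model's probabilities are calibrated, that is, if decisions scored around 94% are in fact correct about 94% of the time.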

Inference chain documentation is another critical element. This involves recording the exact data points, reasoning steps, and evidence the AI used to arrive at its conclusion. For example, instead of simply stating, "Patient likely has pneumonia", the system would detail which symptoms, lab results, and imaging findings led to that assessment. As Amann et al. note, "Explainability is but one measure of achieving transparency; other measures include detailed documentation of datasets and algorithms, as well as open communication of the system's capabilities and limitations" [2].
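An inference chain can be stored as structured data rather than free text, so every conclusion carries the findings behind it. This sketch records the pneumonia example; the class names, the `source_field` paths, and the clinical values are hypothetical illustrations, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class EvidenceStep:
    finding: str       # the data point the model used
    source_field: str  # where it came from in the record (hypothetical paths)
    rationale: str     # why it supports the conclusion


@dataclass
class InferenceChain:
    conclusion: str
    steps: list[EvidenceStep] = field(default_factory=list)

    def add(self, finding: str, source_field: str, rationale: str) -> None:
        self.steps.append(EvidenceStep(finding, source_field, rationale))

    def to_json(self) -> str:
        # asdict() recurses into the nested EvidenceStep objects
        return json.dumps(asdict(self), indent=2)


chain = InferenceChain("Patient likely has pneumonia")
chain.add("Fever 38.9 C with productive cough", "encounter.symptoms",
          "Symptoms consistent with lower respiratory infection")
chain.add("Elevated white blood cell count", "labs.wbc",
          "Consistent with infection")
chain.add("Right lower lobe consolidation", "imaging.chest_xray",
          "Consolidation on imaging supports pneumonia")
print(chain.to_json())
```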

Practical implementations include using "critic agents", secondary AI systems that verify whether the primary system's recommendations are backed by evidence before presenting them to clinicians [2]. Additionally, semantic mapping with standardized ontologies such as SNOMED CT and UMLS ensures the AI interprets clinical terms consistently (e.g., recognizing "SOB" and "Shortness of Breath" as the same symptom) [2].
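Term normalization can be illustrated with a lookup that maps surface forms to a single concept identifier. The synonym table and the `CONCEPT:` identifiers are placeholders, not real SNOMED CT or UMLS codes; a production system would derive the mappings from licensed terminology releases.

```python
from typing import Optional

# Placeholder synonym table; the CONCEPT: identifiers are NOT real SNOMED CT or
# UMLS codes. A real system would load mappings from a terminology release.
SYNONYMS = {
    "sob": "CONCEPT:DYSPNEA",
    "shortness of breath": "CONCEPT:DYSPNEA",
    "dyspnea": "CONCEPT:DYSPNEA",
    "htn": "CONCEPT:HYPERTENSION",
    "hypertension": "CONCEPT:HYPERTENSION",
}


def normalize(term: str) -> Optional[str]:
    """Map a clinical term to a concept ID, ignoring case and extra whitespace.
    Returns None for unmapped terms so they can be flagged rather than guessed."""
    key = " ".join(term.lower().split())
    return SYNONYMS.get(key)


print(normalize("SOB") == normalize("Shortness of Breath"))  # True
```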

While transparency enhances clarity, maintaining detailed audit trails ensures every decision is thoroughly documented.

Creating Detailed Audit Trails

Audit trails provide a comprehensive record of what an AI system did, why it did it, and the information it relied on. These trails should include:

- Input data: The original information (e.g., lab results, EHR entries) fed into the system.

- Decision logic: Details about the algorithms or models used.

- Inference chain: Evidence supporting each decision.

- Confidence scores: Indicators of uncertainty [1][2].

To protect these records, combine tamper-evident and write-once techniques: cryptographic hashing and digital signatures reveal any alteration, while write-once storage (e.g., Amazon S3 Object Lock) prevents records from being overwritten or deleted during the retention period. Together, these measures preserve the integrity of logs, which is essential for regulatory reviews or legal cases [1][6].
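The tamper-evidence idea can be sketched as a hash chain: each log entry stores the SHA-256 hash of the previous entry, so altering a past record causes verification to fail. Field names such as `model_version` and `inference_chain` are assumptions mirroring the audit-trail list above, and `HashChainedAuditLog` is a hypothetical class. A hash chain only detects tampering; preventing it still requires write-once storage such as S3 Object Lock, and digital signatures (not shown) would additionally tie entries to the system that wrote them.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # stands in for the "previous hash" of the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical content hashes identically.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class HashChainedAuditLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, input_data: dict, model_version: str,
               inference_chain: list[str], confidence: float) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "input_data": input_data,
            "model_version": model_version,
            "inference_chain": inference_chain,
            "confidence": confidence,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; edited or reordered entries make this fail.
        Note: dropping the newest entries is not detected by the chain alone."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```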

Given the sheer volume of audit data (about 50 GB per month for a mid-sized clinic processing 500 documents daily), efficient storage is crucial. Implement tiered storage: keep recent logs in high-performance storage for quick access, and archive older logs in cost-effective "cold" storage to meet HIPAA's six-year retention requirement [1][6].
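The storage figures are easier to plan around once worked through. At 500 documents a day, a 30-day month holds about 15,000 documents, so 50 GB per month is roughly 3.3 MB of audit data per document; keeping six years of logs at that rate means about 72 × 50 GB = 3,600 GB (3.6 TB) at steady state. The sketch below encodes that arithmetic and a simple age-based tiering rule; the 90-day hot window, the tier names, and the function names are assumptions, not figures from the article.

```python
from datetime import date

GB_PER_MONTH = 50      # figure cited above for a mid-sized clinic
DOCS_PER_DAY = 500     # figure cited above
RETENTION_YEARS = 6    # HIPAA retention period cited above
HOT_DAYS = 90          # assumption: how long logs stay in fast storage


def audit_mb_per_document(gb_per_month: float = GB_PER_MONTH,
                          docs_per_day: int = DOCS_PER_DAY) -> float:
    # Assumes a 30-day month and decimal units (1 GB = 1,000 MB).
    return gb_per_month * 1000 / (docs_per_day * 30)


def retained_volume_gb(gb_per_month: float = GB_PER_MONTH,
                       years: int = RETENTION_YEARS) -> float:
    return gb_per_month * 12 * years


def storage_tier(log_date: date, today: date) -> str:
    age_days = (today - log_date).days
    if age_days <= HOT_DAYS:
        return "hot"
    # 366 days per year so the cutoff never falls short of six calendar years.
    if age_days <= RETENTION_YEARS * 366:
        return "cold"
    return "retention_review"  # eligible for review, not automatic deletion


print(round(audit_mb_per_document(), 2))  # 3.33
print(retained_volume_gb())               # 3600
```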

To provide a complete picture, link AI audit trails with EHR logs using shared correlation IDs. This integration allows for seamless tracing of AI recommendations, clinician decisions, and patient outcomes. To prevent delays in clinical workflows, use asynchronous "write-behind" caching to record detailed logs without slowing down operations [1].
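A write-behind logger can be sketched with Python's standard `queue` and `threading` modules: the request path only enqueues the record, and a background thread persists it. The correlation ID is a UUID that would also be written to the EHR audit entry for the same event. The `sink` callable, the record fields, and the class and function names are hypothetical.

```python
import queue
import threading
import uuid
from typing import Callable


class WriteBehindAuditLogger:
    """Queues audit records and persists them on a background thread, so the
    clinical request path only pays for an in-memory enqueue."""

    _STOP = object()  # sentinel that tells the worker to exit

    def __init__(self, sink: Callable[[dict], None]) -> None:
        self._queue: queue.Queue = queue.Queue()
        self._sink = sink  # hypothetical: any callable that durably stores one record
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def log(self, record: dict) -> None:
        self._queue.put(record)  # returns immediately on an unbounded queue

    def _drain(self) -> None:
        while True:
            record = self._queue.get()
            if record is self._STOP:
                break
            self._sink(record)

    def close(self) -> None:
        """Flush everything already queued (FIFO order), then stop the worker."""
        self._queue.put(self._STOP)
        self._worker.join()


def log_recommendation(logger: WriteBehindAuditLogger, patient_ref: str, text: str) -> str:
    # The same correlation ID would be written to the EHR audit entry,
    # linking the AI output, the clinician's decision, and the outcome.
    correlation_id = str(uuid.uuid4())
    logger.log({"correlation_id": correlation_id, "patient_ref": patient_ref,
                "recommendation": text})
    return correlation_id
```

Because records wait in memory before they are written, a crash can lose the tail of the log; a production design would typically use a durable message queue instead of an in-process one.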

However, documentation alone isn't enough - accountability also requires active human oversight.

Setting Up Feedback Loops for Human Oversight

Human oversight is essential for ensuring AI systems remain reliable and responsive. It turns AI into a collaborative tool rather than an autonomous decision-maker. The Swedish MASAI mammography trial highlights this approach: radiologists reviewing AI recommendations achieved 80.5% sensitivity (up from 73.8% with standard double reading) while reducing workload by 44% [7].

Choose the right oversight model based on the task's risk level:

- Human-in-the-loop (HITL): Requires clinicians to approve every AI decision, making it ideal for high-stakes scenarios like diagnostics and treatment planning.

- Human-on-the-loop (HOTL): Allows AI to operate independently, with human supervisors stepping in only when anomalies are flagged. This works well for administrative tasks like scheduling or lab sorting [7].
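Routing by oversight model can be expressed as a lookup from task type to the required model. The task categories come from the examples above, but the mapping, the `requires_human_approval` name, and the rule that unknown tasks default to human-in-the-loop are assumptions an organization would set through its own governance process.

```python
from enum import Enum


class Oversight(Enum):
    HITL = "human-in-the-loop"  # clinician approves every decision
    HOTL = "human-on-the-loop"  # AI acts; humans step in when anomalies are flagged


# Illustrative mapping based on the examples above; each organization sets its own.
OVERSIGHT_BY_TASK = {
    "diagnosis": Oversight.HITL,
    "treatment_planning": Oversight.HITL,
    "scheduling": Oversight.HOTL,
    "lab_sorting": Oversight.HOTL,
}


def requires_human_approval(task: str, anomaly_flagged: bool = False) -> bool:
    # Unclassified tasks default to the stricter human-in-the-loop model.
    mode = OVERSIGHT_BY_TASK.get(task, Oversight.HITL)
    if mode is Oversight.HITL:
        return True
    return anomaly_flagged
```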

Track human override rates to identify AI system limitations or areas needing retraining. Log each override with the clinician's reasoning; these records become feedback, and potentially training data, for the next iteration of the model [1].
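Override tracking needs little more than per-model counters plus the clinician's stated reasons. In this sketch the model name is hypothetical, and rejecting overrides that lack a reason is a design choice that enforces the logging requirement described above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ReviewEvent:
    model: str
    overridden: bool
    reason: str = ""  # the clinician's reasoning; required for overrides


class OverrideTracker:
    def __init__(self) -> None:
        self._reviewed = defaultdict(int)    # decisions reviewed, per model
        self._overridden = defaultdict(int)  # decisions overridden, per model
        self.reasons = defaultdict(list)     # override reasoning, per model

    def record(self, event: ReviewEvent) -> None:
        if event.overridden and not event.reason.strip():
            raise ValueError("an override must include the clinician's reasoning")
        self._reviewed[event.model] += 1
        if event.overridden:
            self._overridden[event.model] += 1
            self.reasons[event.model].append(event.reason)

    def override_rate(self, model: str) -> float:
        reviewed = self._reviewed.get(model, 0)
        return self._overridden.get(model, 0) / reviewed if reviewed else 0.0
```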

A 2024 AMA survey found that while 66% of physicians use some form of AI, 47% identified increased oversight as the key to building trust [7]. To address this, use predetermined change control plans (PCCPs) to outline when performance issues or accumulated feedback should trigger updates to the AI model [7].

Emerging approaches like AI-instigated human oversight (AIHO) take this further. Here, AI systems self-assess their uncertainty and escalate high-risk decisions to human experts [8]. This built-in uncertainty assessment aligns AI systems with international standards for trustworthy use in medicine [8].
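One common way for a model to quantify its own uncertainty is the Shannon entropy of its predicted class probabilities. The sketch below escalates when entropy exceeds half the maximum possible value; this measure and threshold are illustrative assumptions, not the specific method used in the AIHO work cited above.

```python
import math


def predictive_entropy(probs: list[float]) -> float:
    """Shannon entropy, in bits, of a predicted class-probability distribution."""
    if any(p < 0 for p in probs) or abs(sum(probs) - 1.0) > 1e-6:
        raise ValueError("probs must be a probability distribution")
    return -sum(p * math.log2(p) for p in probs if p > 0)


def should_escalate(probs: list[float], max_fraction: float = 0.5) -> bool:
    """Escalate to a human expert when uncertainty exceeds `max_fraction`
    of the maximum possible entropy, log2(number of classes)."""
    if len(probs) < 2:
        raise ValueError("need at least two classes")
    return predictive_entropy(probs) > max_fraction * math.log2(len(probs))


print(should_escalate([0.97, 0.03]))  # False: model is fairly certain
print(should_escalate([0.55, 0.45]))  # True: close to a coin flip
```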

Finally, tackle automation bias, where clinicians may over-rely on AI recommendations, even when they're wrong. Structured training programs can help healthcare providers develop a healthy skepticism toward AI. Publishing internal dashboards that display real-time AI accuracy and intervention rates promotes transparency and helps calibrate trust across departments [7].

Aligning AI Accountability With Regulatory Requirements

To ensure compliance and build trust in healthcare AI, organizations must align their accountability mechanisms with the existing regulatory landscape. This is especially important since healthcare AI operates at the intersection of numerous regulatory frameworks, each with its own demands and timelines.

Key Regulatory Frameworks to Know

Healthcare organizations are navigating a maze of regulations governing AI systems. For instance, under HIPAA's Security Rule, organizations deploying any AI system that handles electronic Protected Health Information (ePHI) must conduct rigorous risk assessments and implement robust technical safeguards [10]. The FDA, on the other hand, oversees AI systems with medical purposes, regulating them as Software as a Medical Device (SaMD). This requires clinical validation, software verification, and strict cybersecurity measures [3]. On January 7, 2025, the FDA introduced draft guidance outlining a 7-step risk-based framework for assessing the credibility of AI models used in drug and biological product development [9].

The NIST AI RMF provides a structure for AI governance, organized into four key functions: GOVERN, MAP, MEASURE, and MANAGE [3]. Similarly, ISO/IEC 42001, the first global standard for AI management systems, offers a shared framework for managing risks and ensuring end-to-end governance [3]. Meanwhile, the EU AI Act classifies medical AI as "high-risk", requiring conformity assessments and extensive technical documentation. However, some of these requirements won’t take effect until late 2027 [3][10].

At the state level, laws often mandate impact assessments, algorithmic reviews, and bias audits [3][10]. Adding to this complexity, the December 2025 Executive Order on National AI Policy Framework established a federal policy aimed at maintaining U.S. leadership in AI. This framework also introduces mechanisms to challenge state AI laws based on interstate commerce considerations [10].

Rather than creating isolated processes for each regulation, healthcare organizations are encouraged to adopt the NIST AI RMF and ISO/IEC 42001 as governance frameworks, mapping specific regulatory requirements onto these systems [3]. As Trustible aptly puts it:

"The organizations that win with AI in healthcare won't be the ones that treat each new emerging regulation in a reactionary manner. They'll be the ones that treat regulation as the floor from day one" [3].
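Mapping obligations onto a single governance framework can start as a simple crosswalk that is checked for gaps. The assignments below are illustrative judgments made for this example, not an official crosswalk published by NIST or any regulator; the helper names are hypothetical.

```python
NIST_AI_RMF_FUNCTIONS = {"GOVERN", "MAP", "MEASURE", "MANAGE"}

# Illustrative assignments for this example only, not an official crosswalk.
CROSSWALK = {
    "HIPAA Security Rule: risk assessment": {"MAP", "MEASURE"},
    "HIPAA Security Rule: technical safeguards": {"MANAGE"},
    "FDA SaMD: clinical validation": {"MEASURE"},
    "EU AI Act: technical documentation": {"GOVERN"},
    "State law: bias audit": {"MEASURE"},
}


def unmapped(requirements: list[str], crosswalk: dict[str, set[str]] = CROSSWALK) -> list[str]:
    """Requirements with no governance function assigned yet."""
    return sorted(r for r in requirements if not crosswalk.get(r))


def invalid_entries(crosswalk: dict[str, set[str]] = CROSSWALK) -> dict[str, set[str]]:
    """Entries that reference something other than the four NIST AI RMF functions."""
    return {req: funcs - NIST_AI_RMF_FUNCTIONS
            for req, funcs in crosswalk.items()
            if funcs - NIST_AI_RMF_FUNCTIONS}


def requirements_by_function(crosswalk: dict[str, set[str]] = CROSSWALK) -> dict[str, list[str]]:
    """Invert the crosswalk so each function owner sees their obligations."""
    result: dict[str, list[str]] = {f: [] for f in sorted(NIST_AI_RMF_FUNCTIONS)}
    for req, funcs in crosswalk.items():
        for f in funcs & NIST_AI_RMF_FUNCTIONS:
            result[f].append(req)
    return result
```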

These frameworks act as a solid foundation for developing operational policies that promote ethical AI practices.

Creating Policies for Ethical AI Use

Turning regulatory requirements into actionable policies is critical for ensuring accountability in healthcare AI. Assuming that all healthcare AI systems are high-risk is a good starting point. This approach helps organizations proactively address documentation, quality management, and monitoring obligations from the outset, rather than scrambling to fix issues later [3].

One way to standardize this process is to adopt the FDA's 7-step credibility framework: (1) define the question of interest, (2) define the context of use (COU), (3) assess the AI model risk, (4) develop a credibility assessment plan, (5) execute the plan, (6) document the results and any deviations from the plan, and (7) determine whether the model is adequate for the COU [9]. The FDA defines "credibility" as:

"trust, established through the collection of credibility evidence, in the performance of an AI model for a particular COU" [9].
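The seven steps can be tracked as an ordered checklist that ties each step to its evidence. The step wording paraphrases the FDA draft guidance; enforcing strict sequential order and the `CredibilityAssessment` class are assumptions for illustration, since real assessments may revisit earlier steps.

```python
from typing import Optional

# Step wording paraphrases the FDA's January 2025 draft guidance.
FDA_CREDIBILITY_STEPS = (
    "Define the question of interest",
    "Define the context of use (COU)",
    "Assess the AI model risk",
    "Develop a credibility assessment plan",
    "Execute the plan",
    "Document results and deviations from the plan",
    "Determine model adequacy for the COU",
)


class CredibilityAssessment:
    """Hypothetical tracker that records evidence for each step, in order."""

    def __init__(self, model_name: str) -> None:
        self.model_name = model_name
        self.evidence: list[tuple[str, str]] = []  # (step, evidence reference)

    @property
    def next_step(self) -> Optional[str]:
        done = len(self.evidence)
        return FDA_CREDIBILITY_STEPS[done] if done < len(FDA_CREDIBILITY_STEPS) else None

    def complete(self, step: str, evidence_ref: str) -> None:
        # Assumption: steps are completed strictly in sequence.
        if step != self.next_step:
            raise ValueError(f"expected {self.next_step!r}, got {step!r}")
        self.evidence.append((step, evidence_ref))

    @property
    def is_complete(self) -> bool:
        return self.next_step is None
```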

Policies should also emphasize continuous lifecycle governance rather than a one-time review process. For instance, automated alerts can be set to notify teams if model performance drops by more than 5% or if there are significant shifts in data distributions [10]. To manage clinical alerts effectively, organizations should aim for a false positive rate below 20% and immediately address performance differences exceeding 10% across demographic groups [10].
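The alert thresholds above translate directly into a monitoring check. Reading "drops by more than 5%" as a relative drop from a baseline metric and the 10% demographic threshold as an absolute gap in that metric are assumptions; the `monitoring_alerts` function and its inputs are hypothetical.

```python
# Thresholds from the text above. Treating the 5% as a relative drop from
# baseline and the 10% as an absolute gap between groups are assumptions.
MAX_RELATIVE_DROP = 0.05
MAX_FALSE_POSITIVE_RATE = 0.20
MAX_GROUP_GAP = 0.10


def monitoring_alerts(baseline: float, current: float, false_positive_rate: float,
                      metric_by_group: dict[str, float]) -> list[str]:
    """Return the names of any thresholds the latest monitoring window violates."""
    alerts = []
    if baseline > 0 and (baseline - current) / baseline > MAX_RELATIVE_DROP:
        alerts.append("performance_drop")
    if false_positive_rate >= MAX_FALSE_POSITIVE_RATE:
        alerts.append("false_positive_rate")
    if len(metric_by_group) >= 2:
        gap = max(metric_by_group.values()) - min(metric_by_group.values())
        if gap > MAX_GROUP_GAP:
            alerts.append("demographic_gap")
    return alerts


print(monitoring_alerts(0.90, 0.84, 0.25, {"group_a": 0.88, "group_b": 0.75}))
# ['performance_drop', 'false_positive_rate', 'demographic_gap']
```

Detecting shifts in input data distributions would need a separate statistical test (for example, a population stability index or a Kolmogorov-Smirnov test), which this sketch does not cover.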

Before deploying AI systems fully, a "silent mode" testing phase lasting 1–2 weeks can help verify technical stability and data quality without disrupting clinical workflows [10]. Establishing an AI Governance Committee that includes diverse experts - such as a Chief Medical Information Officer (CMIO), Chief Information Security Officer (CISO), bioethicist, and legal counsel - can further enhance oversight of AI acquisitions and implementations [10].
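Silent mode can be sketched as a wrapper that runs the model on live cases and records its output, including failures, without returning anything to the clinical workflow. The `silent_mode` wrapper, the `risk_score` input, and the log format are hypothetical; comparing the logged predictions against clinicians' actual decisions would be a separate analysis step.

```python
from typing import Any, Callable


def silent_mode(model: Callable[[dict], Any], shadow_log: list) -> Callable[[dict], None]:
    """Wrap a model so it runs on live cases, but its output never reaches clinicians."""
    def run(case: dict) -> None:
        try:
            shadow_log.append({"case_id": case.get("case_id"),
                               "prediction": model(case), "error": None})
        except Exception as exc:
            # Failures are recorded, not raised: checking technical stability
            # is the point of the silent phase, and the workflow must not break.
            shadow_log.append({"case_id": case.get("case_id"),
                               "prediction": None, "error": repr(exc)})
        return None  # nothing is surfaced to the clinical workflow
    return run


shadow_log = []
run = silent_mode(lambda case: "flag" if case["risk_score"] > 5 else "no_flag", shadow_log)
run({"case_id": 1, "risk_score": 7})
run({"case_id": 2})  # missing input: logged as an error, not raised
```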

Lastly, organizations should negotiate validation rights into vendor contracts. This allows for independent validation of AI systems and ensures that results can be published, regardless of the outcome [10]. Such contractual safeguards promote transparency, even when working with external AI providers.

Scaling Accountability Mechanisms Across the Enterprise

Once healthcare AI accountability frameworks are established, the next hurdle is applying them consistently across departments, facilities, and clinical settings. By 2025, physician adoption of AI tools had jumped from 38% to 68% [12], so AI governance can no longer remain confined to individual departments. Enterprise-wide governance must balance standardized oversight with the flexibility each clinical setting needs.

At the same time, the healthcare sector is bracing for a potential shortage of up to 124,000 physicians by 2033 [11]. As AI tools become integral to specialties like radiology, oncology, emergency medicine, and even administrative workflows, ensuring consistent accountability across these expanding applications becomes a complex task. Without a unified governance approach, departments may create their own standards, leading to uneven patient outcomes and compliance risks.

Maintaining Consistency Across Departments

A structured, three-tiered governance model can help maintain consistency:

- Executive Leadership: Key figures like the Chief Medical Officer (CMO), Chief Nursing Officer (CNO), and General Counsel set the vision and allocate necessary resources.

- Advisory Councils: Teams comprising IT, informatics, and compliance specialists handle technical reviews.

- Specialty Teams: These teams implement AI tools in daily workflows and ensure they align with clinical needs [12].

This layered structure ensures that strategic priorities from leadership are effectively translated into everyday operations.

"Clinical decision-making must still lie with clinicians. AI simply enhances their ability to make those decisions."

– Dr. Margaret Lozovatsky, Chief Medical Information Officer at the AMA [12].

To streamline oversight, organizations can consolidate AI monitoring into a single enterprise framework rather than running separate audits at each facility. A centralized system tracks Key Performance Indicators (KPIs) and flags significant drops in AI model performance in real time, enabling proactive intervention.

However, standardization doesn’t mean one-size-fits-all. While core technical elements like data formats and algorithms should be standardized at the enterprise level, individual departments can customize workflows to meet their unique needs [13].

Another critical step is internal validation. Relying solely on vendor-provided scorecards isn’t enough. Organizations need to independently validate AI models using their own patient data to avoid accountability blind spots that could jeopardize patient safety [11].
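Local validation can start with the same headline metrics vendors report. This sketch computes sensitivity and specificity from an organization's own labeled cases and checks them against a vendor's claim; the 0.05 tolerance, the function names, and the example values are assumptions, not recommended thresholds.

```python
def sensitivity_specificity(y_true: list[int], y_pred: list[int]) -> tuple[float, float]:
    """Sensitivity and specificity for binary labels (1 = condition present)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity


def meets_vendor_claim(local: float, vendor_claim: float, tolerance: float = 0.05) -> bool:
    """True if local performance is no more than `tolerance` below the vendor's figure."""
    return local >= vendor_claim - tolerance


# Hypothetical local results versus a hypothetical vendor-reported sensitivity of 0.92.
sens, spec = sensitivity_specificity([1, 1, 1, 1, 0, 0, 0, 0], [1, 1, 1, 0, 0, 0, 0, 1])
print(sens, spec)                      # 0.75 0.75
print(meets_vendor_claim(sens, 0.92))  # False
```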

Building Collaboration Among Stakeholders

Scaling accountability across an enterprise is as much about people as it is about technology. Effective AI governance thrives on collaboration among healthcare leaders, clinicians, and AI developers. When an AI-assisted decision results in an adverse outcome, a shared-responsibility approach looks for system-level failures to fix rather than asking, “Who’s at fault?” [4].

Securing buy-in from the CEO and board is a vital first step to making AI governance a top organizational priority [14]. From there, forming a multidisciplinary working group can bring together data scientists, clinicians, compliance officers, and ethics experts. This group can define priorities, create AI-specific policies, and establish standardized procedures for project intake and vendor evaluations [11][14].

"Responsible AI use is not optional, it's essential... This guidance provides practical steps for health systems to evaluate and monitor AI tools, ensuring they improve patient outcomes and support equitable, high-quality care."

– Dr. Lee H. Schwamm, Chief Digital Health Officer at Yale New Haven Health System [15].

Rather than creating isolated oversight systems, integrating AI governance into existing quality assurance programs leverages institutional knowledge and streamlines processes [15]. Engaging stakeholders early on - such as department heads, nurses, and front-line clinicians - ensures that AI tools are tailored to fit real-world workflows. A comprehensive audit of current AI use cases can help establish priorities, while updating procedures to address AI-specific considerations like data privacy and physician liability strengthens accountability [14].

Centralized platforms like Censinet RiskOps™ can further simplify governance. These systems allow organizations to route assessments and tasks to the right stakeholders, such as members of an AI governance committee, for timely review and approval. With real-time data displayed on intuitive dashboards, teams can maintain continuous oversight and ensure that issues are addressed promptly.

Fostering collaboration not only aligns AI operations with regulatory requirements but also builds the trust needed to prioritize patient safety effectively.

Conclusion

As the use of AI in healthcare grows, organizations must put strong accountability measures in place to safeguard patient safety and maintain clinical trust. The risks are simply too great to depend on algorithms that operate without clear reasoning or evidence. To avoid harmful outcomes, healthcare leaders need to focus on transparency and verification processes, ensuring every AI recommendation is backed by reliable medical references.

For AI to be trustworthy in healthcare, its decisions must be traceable to credible guidelines and securely logged for review. The EU AI Act identifies most clinical AI systems as "high risk" [2], which means organizations must prepare for strict documentation and risk management protocols. Standardized frameworks like the NIST AI Risk Management Framework or IEEE/UL 2933, whose TIPPSS framework covers Trust, Identity, Privacy, Protection, Safety, and Security, provide a structured way to evaluate AI vendors and a solid foundation for oversight.

Enterprise-wide governance is critical. Healthcare organizations should leverage automated workflows to ensure AI risks are routed to the right experts across clinical, IT, and security teams. Tools like Censinet RiskOps™ can help manage this process by providing real-time dashboards that enhance visibility and ensure accountability measures are consistently applied across all departments. This approach supports compliance with strict regulatory standards and aligns with best practices in healthcare cybersecurity.

Healthcare leaders must take immediate action. Introduce bias audits, anchor probabilistic models in reliable evidence, and integrate AI governance into current quality assurance systems. These steps not only make AI safer but also build trust and regulatory confidence across the organization. By acting now, healthcare organizations can ensure that AI innovations remain safe and reliable for the patients and communities they serve.

FAQs

Who is responsible when healthcare AI gets it wrong?

When AI systems in healthcare make errors, the burden of responsibility typically lands on healthcare providers and organizations. Developers of these AI tools often sidestep liability, which means hospitals and medical professionals are left to answer for mistakes such as diagnostic errors or other related problems. This underscores the need for strong accountability frameworks when implementing AI in healthcare settings.

What should an AI audit trail include in a hospital?

An AI audit trail in a hospital needs to maintain detailed, tamper-proof logs of every automated decision. These logs should clearly outline the how and why behind each decision, identify who accessed or modified the data, and record the exact timing and location of all actions. This approach helps ensure compliance with healthcare regulations, while promoting transparency and accountability in the decision-making process.

How can clinicians spot and prevent AI hallucinations and bias?

Clinicians can tackle the issue of AI hallucinations by cross-referencing AI-generated outputs with reliable sources or clinical data, particularly in critical scenarios. For instance, reviewing AI-created clinical notes for any false information can help identify and correct mistakes. To address bias, clinicians should employ tools designed to test for bias, assess the quality of data, and keep a close eye on AI systems to detect any disparities. Implementing transparency measures, such as explainability frameworks, can also help maintain fairness and build trust in patient care.