Boardroom to Bedside: Making AI Governance Everyone's Responsibility

Post Summary

AI is transforming healthcare, but its rapid adoption has left governance trailing behind. Effective AI management isn’t just an IT issue - it requires input from executives, clinicians, compliance teams, and frontline staff. Poor oversight can lead to patient harm, regulatory penalties, and loss of trust. To close this gap, healthcare organizations need structured frameworks that connect strategic decisions with daily operations. Here’s how to make it work:

- Establish an AI Governance Committee: Include diverse experts like clinicians, ethicists, legal advisors, and data scientists.

- Define Clear Roles and Accountability: Assign responsibilities and provide tailored training based on risk levels.

- Monitor AI Systems Continuously: Track performance, detect bias, and manage lifecycle risks.

- Ensure Data Security: Use robust privacy measures like encryption, federated learning, and differential privacy.

- Align Policies with Clinical Workflows: Create feedback loops and standardize processes for seamless integration.

AI governance in healthcare must extend beyond technology teams to protect patient outcomes while driving responsible innovation.

Building an AI Governance Framework

Creating a solid framework for AI governance starts with establishing clear roles and communication channels. Without these, oversight can become disorganized, putting both patient safety and regulatory compliance at risk. A well-structured framework links high-level strategic oversight to hands-on implementation, ensuring governance is consistent and effective across all levels.

Forming an AI Governance Committee

The backbone of any AI governance strategy is a committee made up of diverse experts. This team should bring together healthcare professionals, AI specialists, ethicists, legal advisors, patient advocates, and data scientists. Forming this group is one of the most important steps in managing AI effectively [1]. The committee’s responsibilities include drafting and approving AI policies, performing ethical reviews, and greenlighting projects before they go live.

To get started, conduct an AI audit. This audit identifies who is using AI, what platforms are involved, and the purposes behind them. Essentially, it maps out the organization’s AI landscape, laying the groundwork for assigning roles and prioritizing governance efforts.
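The audit results can be captured as a simple inventory record. The sketch below is only illustrative: the field names (`department`, `vendor`, `purpose`, `touches_phi`) and the sample entries are assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from collections import defaultdict


@dataclass(frozen=True)
class AIInventoryEntry:
    """One AI tool found during the audit (all fields are hypothetical)."""
    name: str
    department: str      # who is using it
    vendor: str          # what platform is involved
    purpose: str         # why it is used
    touches_phi: bool    # whether it handles protected health information


def group_by_department(entries):
    """Return {department: [tool names]} so each unit can be assigned an owner."""
    grouped = defaultdict(list)
    for entry in entries:
        grouped[entry.department].append(entry.name)
    return dict(grouped)


# Made-up audit findings for illustration.
inventory = [
    AIInventoryEntry("sepsis-alert", "Emergency", "VendorA", "early warning", True),
    AIInventoryEntry("note-summarizer", "Emergency", "VendorB", "documentation", True),
    AIInventoryEntry("shift-scheduler", "Operations", "VendorC", "staff rostering", False),
]

by_department = group_by_department(inventory)
phi_tools = [e.name for e in inventory if e.touches_phi]
```

Even a flat list like this gives the committee a starting point for deciding which tools need review first, for example those that touch patient data.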

Assigning Clear Roles and Accountability

Once the committee is in place, the next step is to clearly define roles and responsibilities to ensure thorough oversight. These roles should be formalized in policies, with training tailored to the level of risk involved. For example, physicians working with high-risk AI systems might require advanced training, while administrative staff handling basic AI tools would need simpler instruction.

For systems classified as high-risk - such as those defined under frameworks like the EU AI Act - it's critical to provide comprehensive training and require documented approvals for deployment [1]. This ensures that the individuals using these systems are well-prepared and that the organization maintains a strong accountability structure.
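One way to make risk-based training and approval rules enforceable is to encode them as a small policy table. The sketch below assumes just two tiers and invented course names (`ai-basics`, `clinical-ai-advanced`); a real policy would follow whatever classification the organization adopts.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    HIGH = 2


# Hypothetical policy table: training and sign-off required per tier.
REQUIREMENTS = {
    RiskTier.LOW: {"training": {"ai-basics"}, "needs_approval": False},
    RiskTier.HIGH: {"training": {"ai-basics", "clinical-ai-advanced"}, "needs_approval": True},
}


def may_use(tier, completed_training, approval_documented):
    """Return True only if the user meets the tier's training and approval rules."""
    rule = REQUIREMENTS[tier]
    # Every required course must appear in the user's completed training.
    trained = rule["training"] <= set(completed_training)
    approved = approval_documented or not rule["needs_approval"]
    return trained and approved
```

For example, `may_use(RiskTier.HIGH, ["ai-basics"], True)` is rejected because the advanced course is missing, even though an approval is on file.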

Setting Up Communication and Escalation Processes

Effective communication is key to identifying and addressing AI-related risks promptly. Establish clear reporting channels that combine clinical and IT insights, and use standardized templates to turn technical vulnerabilities into actionable steps. Incident protocols are also essential - they should outline how to report issues, document them, and determine when to suspend AI systems if necessary.

To further strengthen governance, integrate AI risk assessments into the procurement process. This involves a cross-functional review by IT, legal, and clinical leaders to ensure that governance measures are in place before new technology is introduced. Finally, regular monitoring through structured processes helps track AI risks and usage across the organization, keeping oversight efforts consistent and up to date.

5 Core Components of Healthcare AI Governance

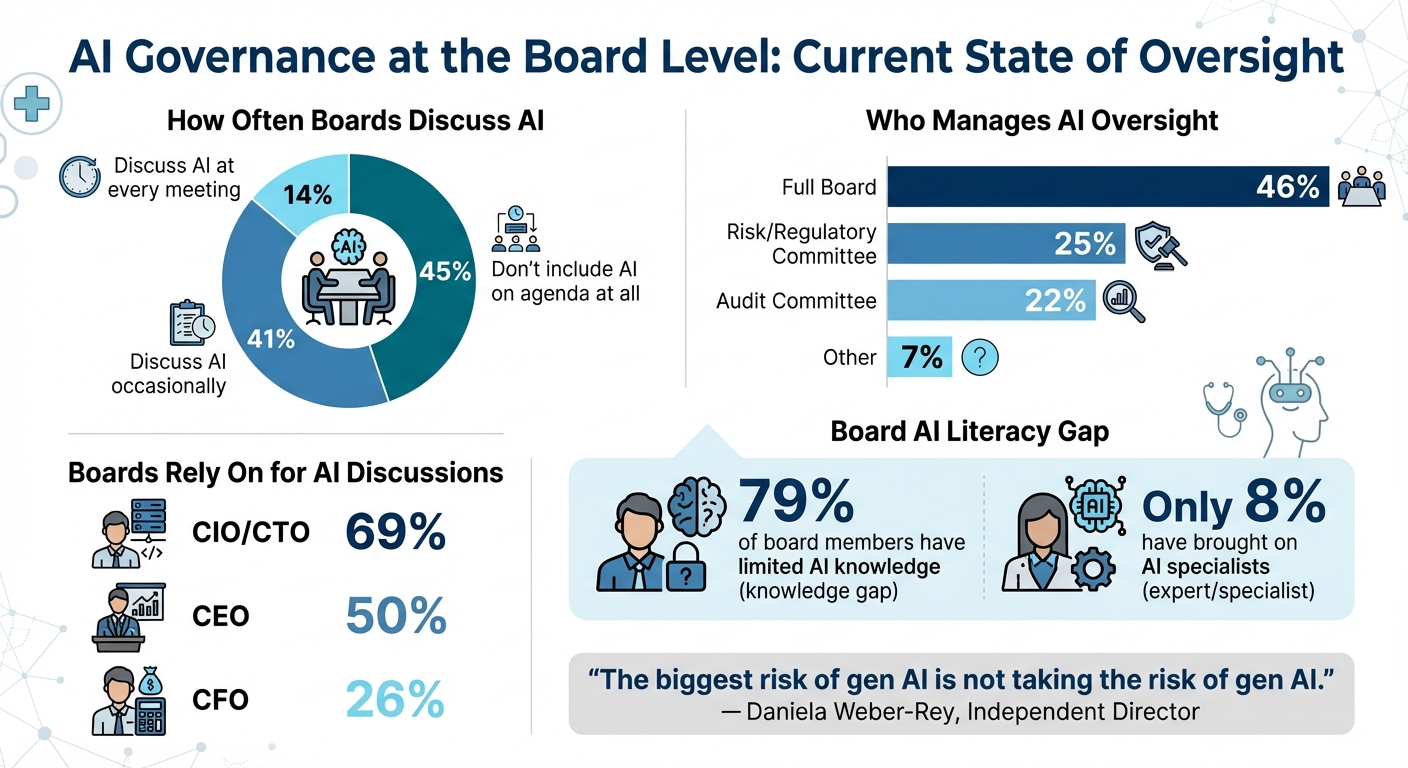

Healthcare AI Governance: Board Engagement and Leadership Statistics

Once your governance framework is in place, it’s important to understand how it covers the necessary areas. Effective AI governance in healthcare requires attention to five key components. Each one addresses specific risks and responsibilities, ensuring that oversight connects smoothly from strategy to execution.

Leadership and Organizational Structure

Strong leadership and a clear organizational structure are critical for managing AI in healthcare. Recent data [2] highlights some gaps: only 14% of boards discuss AI at every meeting, while 45% don’t include it on their agenda at all. Oversight is often handled by the full board (46%) or delegated to risk/regulatory (25%) or audit committees (22%). At the same time, 79% of board members admit to having limited knowledge of AI, and only 8% have brought on AI specialists. For AI-related discussions, boards rely primarily on CIOs/CTOs (69%), CEOs (50%), and CFOs (26%).

As Independent Director Daniela Weber-Rey puts it:

"The biggest risk of gen AI is not taking the risk of gen AI." [2]

To address these gaps, organizations should assign clear ownership of AI responsibilities, update succession plans to include tech-savvy leaders, and create immersive digital literacy programs for board members.

Regulatory Compliance and Clinical Risk Management

Healthcare AI systems must navigate a maze of regulations, including HIPAA for protecting patient data, FDA guidelines for AI-enabled devices, and state-specific laws. Maintaining compliance requires ongoing attention to both technical vulnerabilities and patient safety.

Many organizations now use AI-powered Governance, Risk, and Compliance (GRC) platforms to centralize risk management. Tools like the Health Sector Coordinating Council (HSCC) SMART framework help align vendors and products with critical healthcare services.

One overlooked area is "AI Telemetry" - the ability to detect when vendors introduce new AI features into existing products. To manage this, organizations can benchmark against frameworks like the NIST AI Risk Management Framework and NIST Cybersecurity Framework 2.0. Adding privacy assessments into procurement and using rapid risk scoring ensures data protection measures are in place before deployment, safeguarding patient outcomes.

AI System Monitoring and Lifecycle Management

AI systems don’t stay static - they evolve as models are retrained, data sources shift, and usage patterns change. That’s why continuous monitoring and lifecycle management are essential. Start by establishing performance baselines, tracking metrics like accuracy, processing speed, error rates, and clinical outcomes. Set clear alert thresholds to catch performance dips early.
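A baseline-and-threshold check can be very small. In the sketch below, the metric names, baseline values, and tolerances are all made-up assumptions; real thresholds should come from clinical and technical owners.

```python
# Hypothetical baseline captured at go-live, and the largest tolerated change.
BASELINE = {"accuracy": 0.92, "error_rate": 0.03, "latency_ms": 180.0}
TOLERANCE = {"accuracy": -0.03, "error_rate": 0.02, "latency_ms": 60.0}


def find_alerts(current):
    """Return the metrics that have moved past their alert threshold."""
    alerts = []
    for metric, baseline in BASELINE.items():
        change = current[metric] - baseline
        limit = TOLERANCE[metric]
        # A negative limit means "alert on drops"; a positive one, "alert on rises".
        breached = change < limit if limit < 0 else change > limit
        if breached:
            alerts.append(metric)
    return alerts
```

Calling `find_alerts` with the latest measurements returns only the metrics that need attention, which keeps alerting tied to the documented baseline rather than to ad hoc judgment.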

Bias detection should also be part of regular monitoring, ensuring outputs are fair across all patient groups. When systems are retired or replaced, having a solid transition plan helps maintain care continuity and keeps historical data intact. Keeping a real-time inventory of AI capabilities not only supports regulatory compliance but also enhances clinical safety.
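A basic bias check compares one performance metric across patient groups. The sketch below uses sensitivity (the share of true cases the model catches) and an assumed review threshold of 0.10; the group labels and records are invented, and sensitivity is only one of several fairness measures an organization might choose.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, actual_positive, predicted_positive)."""
    hits = defaultdict(int)
    positives = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            positives[group] += 1
            hits[group] += int(predicted)
    return {g: hits[g] / positives[g] for g in positives}


def max_gap(rates):
    """Largest difference in sensitivity between any two groups."""
    return max(rates.values()) - min(rates.values())


# Made-up example: the model misses more true cases in group "B".
sample = (
    [("A", True, True)] * 9 + [("A", True, False)] * 1
    + [("B", True, True)] * 7 + [("B", True, False)] * 3
)
rates = sensitivity_by_group(sample)
needs_review = max_gap(rates) > 0.10  # 0.10 is an assumed review threshold
```

Running the same check on every monitoring cycle turns "fair across all patient groups" into a number that can be tracked and escalated.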

Vendor and Supply Chain Risk Management

Third-party AI vendors come with their own set of risks, requiring thorough evaluation. Go beyond standard security checks - ask about their training data sources, model validation methods, bias testing procedures, and update protocols. It’s also important to assess concentration risk (relying too much on one vendor) and identify any supply chain chokepoints that could disrupt services.

Contracts should include terms for ongoing monitoring, performance guarantees, and swift incident reporting. Some organizations are exploring agentic governance models, where AI helps automate parts of vendor risk assessments. However, human oversight remains essential for high-stakes decisions. Comprehensive vendor management is key to ensuring data protection and operational reliability.

Data Security and Access Controls

Safeguarding patient data in AI systems requires more than basic cybersecurity. Encryption should be enforced for data both in transit and at rest, and strict access controls should follow the principle of least privilege - users and systems should only access what’s absolutely necessary.

Audit logs play a crucial role in tracking who accesses AI systems, what data they view, and what actions they take. These logs are vital for compliance audits, investigations, and demonstrating governance. Retaining logs for at least six years, consistent with HIPAA's six-year retention requirement for compliance documentation, is a common best practice.
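As a rough illustration, an audit entry can be a structured record that captures who, what, and when, paired with a retention check. The field names and the `may_purge` helper below are hypothetical, and the six-year window is approximated in days.

```python
import json
from datetime import datetime, timedelta, timezone

# Assumed six-year retention window (approximate: leap days are ignored).
RETENTION = timedelta(days=6 * 365)


def audit_entry(user, system, record_id, action, when=None):
    """Build one structured log line as JSON."""
    when = when or datetime.now(timezone.utc)
    return json.dumps({
        "timestamp": when.isoformat(),
        "user": user,
        "system": system,
        "record_id": record_id,
        "action": action,
    }, sort_keys=True)


def may_purge(entry_json, now):
    """True only once the entry is older than the retention window."""
    logged_at = datetime.fromisoformat(json.loads(entry_json)["timestamp"])
    return now - logged_at > RETENTION
```

In practice the entries would be written to tamper-evident storage; the point here is only that each entry answers who accessed which system, which record, and what they did.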

Technologies like differential privacy, federated learning, and synthetic data generation allow AI systems to learn from patient data without exposing individual details. Clear retention and deletion policies for training data, outputs, and logs further strengthen security and privacy measures.

AI Security and Risk Mitigation Practices

Protecting AI systems in healthcare demands tailored strategies and controls to manage the specific risks these technologies bring to patient care and data security.

Applying NIST AI RMF and HIPAA Requirements

The NIST AI Risk Management Framework (AI RMF 1.0), released in January 2023, gives healthcare organizations a structured approach to handling AI-related risks. It centers on four core functions: Govern, Map, Measure, and Manage. These functions can be mapped onto HIPAA’s safeguards, which gives compliance work a clear structure.

Here’s how the NIST AI RMF functions connect to HIPAA:

- Govern corresponds to administrative policies.

- Map aligns with security risk analysis.

- Measure ties to audit controls.

- Manage supports contingency planning.

For instance, when deploying an AI diagnostic tool, the risk mapping phase should identify potential HIPAA violations, such as unauthorized access to protected health information during model training or use. Assign accountability - usually to roles like the Chief Privacy Officer - and establish clear escalation procedures for addressing risks. While the framework is voluntary, it’s built to integrate seamlessly with regulations like HIPAA, the EU AI Act, and FDA guidelines, making it a cornerstone for managing AI risks in healthcare.

Conducting AI Threat Assessments

AI systems in healthcare face threats that go beyond traditional cybersecurity concerns. These include:

- Adversarial attacks, which manipulate inputs to produce incorrect diagnoses.

- Data poisoning, where corrupted training data undermines model performance.

- Model extraction, which involves stealing proprietary algorithms.

- Concept drift, where model accuracy declines as patient populations, clinical practice, or data sources shift over time.

To stay ahead of these risks, conduct quarterly AI threat assessments. Here’s how:

- Inventory the AI systems critical to patient care.

- Map out data flows and dependencies across all stages - data collection, model training, deployment, and inference.

- Analyze potential attack vectors.

- Evaluate the impact on both patient safety and data confidentiality.

Take a radiology AI system as an example. Your assessment should explore scenarios such as attackers manipulating training images to cause missed diagnoses, or using adversarial patches to deceive the model in clinical settings. Document each threat with a severity rating, such as "High: Adversarial attack causes misdiagnosis leading to patient harm." Assign mitigation responsibilities to specific teams and ensure findings are reviewed by your governance committee for resource allocation and policy updates. To test your defenses, use tools like CleverHans or the Adversarial Robustness Toolbox (ART), focusing on high-risk models that directly influence clinical decisions.
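A threat register for this kind of assessment can be sketched in a few lines. The rule used here, taking the worse of the patient-safety and confidentiality ratings as the overall severity, is an assumption for illustration, as are the team names.

```python
from dataclasses import dataclass

LEVELS = {"Low": 1, "Medium": 2, "High": 3}


@dataclass
class Threat:
    system: str
    description: str
    patient_safety: str      # impact level: "Low", "Medium" or "High"
    confidentiality: str     # impact level: "Low", "Medium" or "High"
    owner: str               # team responsible for mitigation (hypothetical names)

    @property
    def severity(self):
        """Overall severity is the worse of the two impact ratings (assumed rule)."""
        return max(self.patient_safety, self.confidentiality, key=LEVELS.get)

    def summary(self):
        return f"{self.severity}: {self.description}"


register = [
    Threat("radiology-ai", "Model extraction exposes proprietary algorithm",
           "Low", "Medium", "Security"),
    Threat("radiology-ai", "Adversarial attack causes misdiagnosis leading to patient harm",
           "High", "Low", "Clinical Safety"),
]
# Highest severity first, ready for committee review.
register.sort(key=lambda t: LEVELS[t.severity], reverse=True)
```

Sorting by severity gives the governance committee a ranked list, with each item already tied to an owning team.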

Using Privacy-Preserving Technologies

Protecting patient data goes beyond basic encryption and access controls. Advanced privacy-preserving technologies allow AI systems to learn from sensitive data without exposing individual details.

- Differential privacy adds calibrated statistical noise to datasets, query results, or model outputs. This limits how much any single patient’s record can influence what is released, reducing the risk of re-identification while maintaining statistical utility for AI training. For example, you can add calibrated noise to patient records, or to the training process itself, so that the resulting diagnostic model reveals very little about any single patient.

- Federated learning enables model training across multiple hospitals without centralizing sensitive data. Each hospital trains a local model on its own data and shares only model updates - not raw data - with a central server for aggregation. Imagine a network of hospitals developing an AI tool to predict sepsis: Hospitals A, B, and C each train models locally on their own patient data, and only the resulting model updates are combined, so individual records never leave the hospital.
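To make the differential privacy idea concrete, here is a minimal sketch of the Laplace mechanism applied to a count query. Note that it adds noise to an aggregate result rather than to individual records, which is the simplest textbook case; the cohort and the `epsilon` value are made up.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(flags, epsilon, rng):
    """Epsilon-differentially-private count of True values.

    Adding or removing one patient changes a count by at most 1,
    so the sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    return sum(flags) + laplace_noise(1.0 / epsilon, rng)


# Made-up cohort: 40 of 100 patients have the condition.
has_condition = [True] * 40 + [False] * 60
noisy = private_count(has_condition, epsilon=1.0, rng=random.Random())
```

A smaller `epsilon` means more noise and stronger privacy, at the cost of less accurate results.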

Implementing these technologies involves setting privacy budgets (which determine how much noise is added), securing communication protocols between federated nodes, and ensuring model performance remains clinically reliable after applying privacy measures. Frameworks like TensorFlow Federated can help you get started, but don’t forget to audit for data leakage using techniques like membership inference attacks.
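The aggregation step in federated learning can be sketched as a weighted average of local model weights, often called federated averaging. This is plain Python rather than TensorFlow Federated, and the weights and sample counts for the three hospitals are invented.

```python
def federated_average(updates):
    """Combine local model weights, weighting each site by its sample count.

    updates: list of (weights, n_samples) pairs, where weights is a list of floats.
    """
    total = sum(n for _, n in updates)
    size = len(updates[0][0])
    return [
        sum(weights[i] * n for weights, n in updates) / total
        for i in range(size)
    ]


# Hypothetical updates from three hospitals; only weights and counts are shared.
hospital_updates = [
    ([0.2, 1.0], 100),   # Hospital A
    ([0.4, 2.0], 300),   # Hospital B
    ([0.6, 3.0], 100),   # Hospital C
]
global_weights = federated_average(hospital_updates)
```

Weighting by sample count lets larger sites contribute more to the shared model. A production setup would add secure aggregation and encrypted transport on top of this.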

For even greater security, homomorphic encryption allows computations to be performed on encrypted data without ever decrypting it, so sensitive health data stays encrypted throughout processing. The trade-off is substantial computational overhead compared with working on plaintext.

Creating Organization-Wide Accountability for AI Governance

For AI governance to work effectively, it needs to be embraced at every level of an organization. From top-level executives to frontline staff, everyone must share responsibility for ensuring AI is used safely, ethically, and in compliance with established guidelines. By embedding accountability throughout - from strategic decisions in the boardroom to practical applications in clinical settings - organizations can create a unified approach to managing AI technologies.

Developing Training Programs for All Staff Levels

AI training isn't one-size-fits-all. The content should match the responsibilities of each role. For instance, board members don't need to understand the technical details of neural networks but must know how to allocate resources for AI oversight. On the other hand, clinicians, like radiologists, don't need coding skills but should be equipped to critically assess the accuracy of AI tools they use.

Several training programs are already making strides in this area:

- HFMA's AI Governance Micro-Credential: This program is designed for finance and administrative staff, costs $149.25, and awards digital badges every two years. It focuses on AI compliance, risk management, and ethics [3].

- Mayo Clinic's AI Foundations Course: Priced at $250.00, this course prepares clinicians to evaluate AI outputs critically. According to Mayo Clinic Executive Education, this knowledge helps professionals become "critical consumers and responsible users" of AI technologies [4].

- Harvard Medical School's Program: A more intensive option, this eight-week course costs $3,100.00 and is aimed at executives. Participants, like Hugo Lama, have highlighted its practical insights into integrating AI into clinical workflows [5].

Ethics training is essential for promoting transparency and responsibility. For example, the World Health Organization (WHO) offers a free course on the Ethics and Governance of AI for Health. This program, the result of 18 months of collaboration among global experts, provides guidance aligned with WHO’s six principles for keeping human rights central in AI applications [6].

To create a well-rounded skill set, organizations should integrate AI governance training with existing certifications, such as those in health information management or cybersecurity (e.g., CompTIA Security+). Additionally, educating staff on whistleblower protections and conflict-of-interest standards can encourage a culture where reporting AI-related issues is both safe and supported [3][7].

Linking Board Policies to Clinical Workflows

Training equips individuals, but aligning boardroom policies with clinical workflows ensures governance translates into everyday practices. A great example of this is Trillium Health Partners' collaboration with the Duke Institute for Health Innovation in 2025. They implemented the People, Process, Technology, and Operations (PPTO) framework through co-design workshops involving senior leaders and frontline stakeholders. This effort led to a structured AI governance committee under the Digital Health Committee, addressing what had previously been described as "completely ad hoc" AI adoption [9].

A three-tiered governance structure can help bridge the gap between strategy and practice:

- Top Level: Clinical Executive Leadership (e.g., CMO, CIO, COO) sets the strategic vision and allocates resources.

- Middle Level: Advisory Councils, made up of IT, legal, ethics, and informatics experts, review technical aspects and ensure systems are interoperable.

- Bottom Level: Specialty Areas, like department heads and clinical champions, focus on integrating AI into workflows and providing feedback.

Dr. Margaret Lozovatsky, Chief Medical Information Officer of the American Medical Association, emphasizes that clinicians must always retain decision-making authority, with AI serving as a tool to enhance their capabilities [8].

To operationalize policies, organizations can establish feedback loops and support channels for end-users. "Shadow deployments", where AI systems run silently alongside existing workflows, allow staff to assess risks and refine operations before full implementation [10].
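A shadow deployment can be sketched as a loop that scores each case silently and logs how often the model agrees with the clinician. Everything here is hypothetical: `toy_model` is a stand-in for the real system, and the cases are invented.

```python
def shadow_run(cases, model, log):
    """Score each case silently; the clinician's decision is never overridden."""
    for case in cases:
        prediction = model(case["features"])
        log.append({
            "case_id": case["id"],
            "model": prediction,
            "clinician": case["clinician_decision"],
            "agree": prediction == case["clinician_decision"],
        })
    return log


def agreement_rate(log):
    """Share of cases where the silent model matched the clinician."""
    return sum(entry["agree"] for entry in log) / len(log)


# Hypothetical stand-in model: flags a case when the risk score is 0.5 or higher.
def toy_model(features):
    return "flag" if features["risk_score"] >= 0.5 else "no_flag"


cases = [
    {"id": 1, "features": {"risk_score": 0.9}, "clinician_decision": "flag"},
    {"id": 2, "features": {"risk_score": 0.2}, "clinician_decision": "no_flag"},
    {"id": 3, "features": {"risk_score": 0.6}, "clinician_decision": "no_flag"},
    {"id": 4, "features": {"risk_score": 0.1}, "clinician_decision": "no_flag"},
]
shadow_log = shadow_run(cases, toy_model, [])
disagreements = [entry["case_id"] for entry in shadow_log if not entry["agree"]]
```

The disagreement list is usually the most useful output, since those are the cases clinical champions would review before any go-live decision.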

Ensuring Transparency in AI Decision-Making

Transparency is the backbone of trust in AI systems. Without it, staff and patients alike may hesitate to rely on these tools.

One way to enhance transparency is by maintaining a centralized inventory of all AI models used within the organization. This eliminates silos and ensures visibility across departments. By August 2024, the FDA had authorized 950 AI-enabled medical devices, highlighting the growing need for such oversight [9].

Documenting the AI lifecycle is equally important. This includes details on data extraction, model adaptation, evaluation processes, and de-identification methods, all captured through standardized operating procedures. High-risk AI tools that directly impact patient care should face stricter oversight compared to lower-risk administrative tools [9].

Ethics consultations should also be a standard part of clinical AI deployment, not just during research. At Trillium Health Partners, one stakeholder expressed surprise at the lack of ethics consultations for AI deployment, highlighting a critical gap in current practices [9].

Clear disclosure policies are another key element. These policies should outline what information about AI systems is shared with clinicians, patients, and other stakeholders. For instance, in April 2025, JP Morgan’s Chief Information Security Officer issued a public letter to suppliers about Responsible AI (RAI) requirements. The company created a dedicated RAI governance function within its model risk department, staffed by over 20 professionals who report directly to the CEO [11].

Real-time feedback loops are essential for addressing issues promptly. With the percentage of physicians using AI tools nearly doubling - from 38% to 68% in 2024 - the need for continuous monitoring and open communication has become more urgent [8].

Transparency isn’t just about documentation; it’s about communication. Ensuring that all stakeholders, including non-technical leaders, understand how AI integrates into workflows and its limitations fosters trust and accountability across the board.

Conclusion

AI governance in healthcare requires a unified effort across all levels of an organization. This isn't something that can be left solely to IT departments - there's simply too much at stake. Effective governance must extend from the decision-makers in the boardroom to the clinicians at the bedside, ensuring patient care is protected while also encouraging advancements in medical technology.

At the heart of this effort is executive accountability. Leaders must take charge by involving clinical experts and multidisciplinary review boards to thoroughly evaluate AI tools for potential biases, safety concerns, and regulatory compliance before they are implemented. Dr. Margaret Lozovatsky, Chief Medical Information Officer at the AMA, emphasizes this point:

"AI governance isn't just oversight. It's about creating a culture of innovation in a structured framework that positions AI as a tool to improve care" [8].

But governance doesn't stop at oversight. Testing AI tools in real clinical settings is equally important. Relying solely on vendor claims isn't enough - organizations need to conduct their own assessments to ensure these tools work effectively for their specific patient populations. For example, researchers from the Australian Institute of Health Innovation and Alfred Health began validating an AI governance framework in April 2024. By March 2025, they had conducted 43 stakeholder interviews and were on track to finalize the framework by June 2025, aiming to apply it across diverse healthcare environments [12].

To make this process seamless, AI evaluations must be integrated into existing workflows. This means updating project intake forms, vendor checklists, and quality improvement protocols to include AI-specific criteria. The goal is to ensure that AI tools enhance, not replace, clinical judgment. With the use of AI tools by physicians nearly doubling from 38% to 68% in 2024 [8], the need for structured, organization-wide governance has never been more pressing.

FAQs

Who should own AI governance in a hospital?

AI governance in a hospital involves collaboration across the entire organization. The board plays a key role in ensuring that AI initiatives align with the hospital's goals, comply with regulations, and manage risks effectively. Meanwhile, senior leaders such as the Chief AI Officer (CAIO) or Chief Information Security Officer (CISO) take charge of the day-to-day management of AI systems.

To tackle ethical and operational challenges, multidisciplinary committees bring together a diverse group of stakeholders - clinicians, IT professionals, legal experts, and patient advocates. These teams work together to maintain accountability, promote transparency, and encourage the responsible use of AI within the hospital setting.

How do we decide which AI tools are “high-risk”?

Determining if an AI tool is considered "high-risk" in healthcare means evaluating its influence on patient safety, data security, and ethical considerations. Tools that play a role in clinical decision-making, affect patient outcomes, or manage sensitive information often fall into this category.

Key elements to review include the tool's intended purpose, scope of application, and the context in which it operates. To ensure these risks are properly managed, structured frameworks - like the NIST AI Risk Management Framework - provide valuable guidance. These frameworks support comprehensive governance, continuous monitoring, and ethical oversight to mitigate potential risks effectively.

What should we monitor after an AI tool goes live?

After introducing an AI tool in healthcare, keeping a close eye on its performance is crucial to ensure it operates safely, accurately, and ethically. Key areas to focus on include its effectiveness, reliability, and its ability to adapt to changes, such as updates in workflows or shifts in patient demographics.

It's also important to monitor for potential issues like biases, poor data quality, or unexpected adverse events. Establishing systems for regular feedback, incident reporting, and ensuring compliance with governance policies can help maintain trust and accountability. These proactive steps are essential for managing risks in an ever-changing environment.
