First, Do No Harm: Patient Safety in the Age of Clinical AI

Post Summary

AI is transforming healthcare, offering tools for faster diagnoses, better treatment planning, and improved patient outcomes. But it comes with risks that can jeopardize safety if not properly managed. Failures like missed sepsis cases, biased algorithms, and cybersecurity vulnerabilities have already resulted in harm, from misdiagnoses to privacy breaches. Governance, oversight, and managing third-party AI risk are critical to ensuring AI serves patients without causing harm.

Key takeaways:

- AI risks: Bias in algorithms, cybersecurity threats, and system failures can lead to misdiagnoses, delayed treatments, and data breaches.

- Real-world examples: AI has produced unsafe treatment recommendations, misdiagnosed critical conditions, and exposed patient data through breaches.

- Solutions: Frameworks like NIST AI RMF, FDA guidance, and HSCC best practices offer actionable steps for evaluating, monitoring, and governing AI tools.

- Tools for safety: Platforms like Censinet RiskOps™ streamline risk assessments, monitor AI performance, and reduce errors through human oversight.

The future of clinical AI depends on balancing innovation with rigorous safety measures. By prioritizing oversight, transparency, and continuous monitoring, healthcare can protect patients while leveraging AI's potential.

Patient Safety Risks in Clinical AI Systems

As clinical AI reshapes healthcare, it brings undeniable advancements but also introduces risks that demand careful oversight. Three major threats to patient safety stand out: cybersecurity vulnerabilities that leave systems open to manipulation, algorithmic bias that can lead to flawed medical decisions, and interconnected system failures that can ripple through entire hospital networks. Each of these poses unique challenges that healthcare organizations must tackle to uphold safety standards.

Cybersecurity Threats and AI Vulnerabilities

One of the most pressing risks for healthcare AI systems is data poisoning, a type of cyberattack in which malicious actors inject harmful data into training datasets to corrupt the AI's decision-making. Research led by Farhad Abtahi found that attackers with access to only 100 to 500 samples can compromise a healthcare AI system regardless of the size of its dataset, often with success rates above 60%. Detection is estimated to take 6 to 12 months, and some attacks may never be uncovered [2].

"Attackers with access to only 100-500 samples can compromise healthcare AI regardless of dataset size, often achieving over 60 percent success, with detection taking an estimated 6 to 12 months or sometimes not occurring at all." – Farhad Abtahi et al., Researchers [2]

The problem is compounded by healthcare supply chain security challenges. A single compromised vendor of foundational AI models could distribute tainted data to 50–200 institutions [2]. Privacy regulations like HIPAA and GDPR, while designed to protect patient information, can unintentionally hinder efforts to detect these subtle attacks by limiting the scope of data analysis [2]. A chilling example is the "Medical Scribe Sybil" scenario, where coordinated fake patient visits could poison data through legitimate workflows without requiring direct system access.
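A basic supply-chain control is to confirm that the model files a vendor ships are exactly the files the vendor published, by comparing SHA-256 digests against a manifest obtained through a separate channel. The sketch below assumes a hypothetical JSON manifest of the form `{"files": {"<name>": "<sha256 hex>"}}`. It detects tampering in transit or at rest, but it cannot detect data poisoning that happened before the vendor computed the hashes.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large model weights need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifacts(model_dir: Path, manifest_path: Path) -> list[str]:
    """Return a list of problems; an empty list means every listed file matched.

    Assumes a hypothetical manifest format:
    {"files": {"weights.bin": "<hex sha256>", ...}}
    """
    manifest = json.loads(manifest_path.read_text())
    problems = []
    for name, expected in manifest["files"].items():
        path = model_dir / name
        if not path.exists():
            problems.append(f"missing: {name}")
        elif sha256_of(path) != expected.lower():
            problems.append(f"hash mismatch: {name}")
    return problems
```

A deployment pipeline could refuse to load a model whenever `verify_artifacts` returns a non-empty list.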

These cybersecurity challenges underscore the need for robust defenses, especially as they directly impact AI accuracy and integration within healthcare systems.

Algorithmic Bias and Decision-Making Errors

Flaws inside AI models themselves pose another serious threat to patient safety. AI models learn statistical patterns from their training data rather than reasoning from explicit medical guidelines, so flawed or incomplete training data can translate directly into misdiagnoses or delayed treatments. As ECRI explained, "AI models are only as good as the data they're trained on, and when data are flawed, AI can cause misdiagnoses or delayed treatments, putting patients at risk" [3].

The lack of governance in many healthcare organizations exacerbates this issue. In 2023, only 16% of hospitals had system-wide AI governance policies in place [3]. Without these safeguards, biased algorithms can go unchecked, and as AI systems continue to learn from new data, biases that weren’t initially present can emerge over time. This highlights the need for continuous monitoring and institutional oversight to ensure patient safety.
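Continuous monitoring for bias usually means comparing performance across patient subgroups on an ongoing basis. A minimal sketch, assuming confirmed outcomes become available after the fact and using sensitivity (the share of true cases the model catches) as the metric; the 5-percentage-point gap threshold is purely illustrative, not a clinical standard.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, y_true, y_pred) with binary labels.

    Returns {group: sensitivity}, i.e. true positives / actual positives.
    Groups with no actual positives are omitted because sensitivity is undefined.
    """
    tp = defaultdict(int)
    pos = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            pos[group] += 1
            if y_pred == 1:
                tp[group] += 1
    return {g: tp[g] / pos[g] for g in pos}


def flag_sensitivity_gaps(records, max_gap=0.05):
    """Flag groups whose sensitivity trails the best-performing group by more than max_gap.

    max_gap is an illustrative threshold chosen for this sketch.
    """
    sens = sensitivity_by_group(records)
    if not sens:
        return {}
    best = max(sens.values())
    return {g: round(best - s, 3) for g, s in sens.items() if best - s > max_gap}
```

Run on each new batch of confirmed outcomes, a non-empty result would be a trigger for the governance committee to review the model.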

Interconnected Systems and Cascading Failures

The interconnected nature of clinical AI systems adds another layer of risk. Modern healthcare infrastructure relies on AI to manage a wide range of functions, from scheduling and lab results interpretation to medical records and medication orders. This tight integration means that a failure in one part of the system can have far-reaching consequences. John Banja, PhD, a medical ethicist at Emory University, warns, "It is easy to imagine how a breakdown or virus affecting any one element of an AI chain could wreak havoc with the entire system" [4].

Because so many functions depend on shared AI components, a single fault can become a single point of failure that affects thousands of patients at once. Concentrating data in these systems also magnifies security incidents: a 2019 data breach involving a diagnostic imaging company exposed the health information of more than 300,000 patients and ended in a $3,000,000 settlement [4]. Michelle Mello, a Stanford professor of law and health policy, emphasized the scale of such incidents: "Injuries can replicate over many, many patients, and from a lawyer's perspective, that means big damages" [5].

The limited adoption of comprehensive AI governance leaves healthcare systems exposed to these risks. Automation bias compounds the problem: clinicians who come to trust automated systems too readily may skip the human checks that would catch an error. This reinforces the urgent need for robust frameworks that keep AI systems operating as intended and keep patient safety at the forefront.
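One engineering control that limits cascades is a circuit breaker: after repeated failures, downstream systems stop consuming an AI component's output and fall back to a manual workflow instead of propagating errors. This general software-reliability pattern is not drawn from the sources cited here; the class, its thresholds, and the `validate` hook are illustrative assumptions.

```python
import time


class AICircuitBreaker:
    """Stop consuming an AI component's outputs after repeated failures.

    While the breaker is open, callers get None and should route the task
    to the manual (human) workflow instead of passing errors downstream.
    """

    def __init__(self, failure_threshold=3, cooldown_seconds=300.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def is_open(self):
        if self.opened_at is None:
            return False
        if self.clock() - self.opened_at >= self.cooldown_seconds:
            # Cooldown elapsed: allow a trial call (half-open state);
            # a single further failure reopens the breaker.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
            return False
        return True

    def call(self, ai_fn, *args, validate=lambda out: True):
        """Call ai_fn(*args); return its output, or None to signal manual fallback."""
        if self.is_open():
            return None
        try:
            out = ai_fn(*args)
            ok = validate(out)  # hypothetical sanity check on the output
        except Exception:
            ok = False
        if ok:
            self.failures = 0
            return out
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()
        return None
```

Injecting the clock keeps the breaker testable; in production the default monotonic clock would be used.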

Case Studies: Clinical AI Incidents and Failures

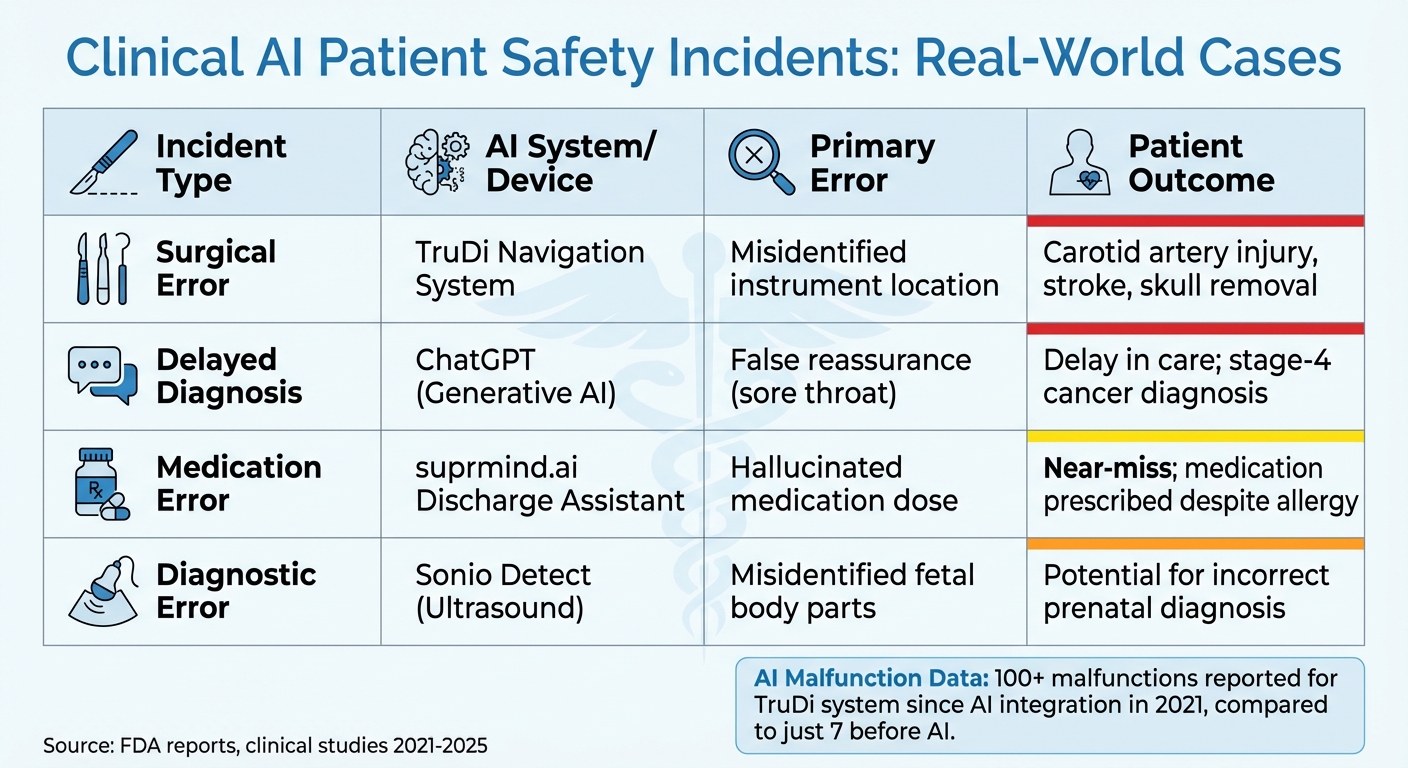

Clinical AI Patient Safety Incidents: Types, Systems, Errors, and Outcomes

Incidents in healthcare illustrate how AI can quickly shift from being a promising tool to a source of harm. These examples highlight the critical need for thorough oversight and risk management before AI systems are deployed. From misdiagnoses to privacy breaches and device failures, here are some real-world cases that demonstrate the risks.

AI Misdiagnosis and Treatment Errors

In August 2025, Warren Tierney, a 37-year-old father from Ireland, consulted ChatGPT about a persistent sore throat. The AI incorrectly assured him that cancer was "highly unlikely", leading him to delay seeking medical attention. Tragically, he was later diagnosed with stage-4 esophageal cancer, resulting in a terminal prognosis [7].

AI in surgical navigation has also caused serious harm. For example, the TruDi Navigation System (Acclarent/Integra LifeSciences) has been linked to incidents ranging from misdirected surgical tools to strokes and other severe complications. Since AI integration in 2021, the FDA has received reports of at least 100 malfunctions and adverse events, compared to just seven before AI was introduced. Between late 2021 and November 2025, at least 10 injuries were directly associated with this system [6].

Diagnostic AI has faced similar scrutiny. In June 2025, an FDA report described problems with Sonio Detect, Samsung Medison's AI software for prenatal ultrasound, which labeled fetal structures with the wrong body parts [6]. In another study of more than 12,000 patients, roughly two-thirds of the patients an AI-enhanced stethoscope flagged for heart failure turned out not to have the condition [12].

Errors in AI discharge systems have also raised alarms. During a Q1 2025 pilot, HealthTech startup suprmind.ai tested a real-time patient discharge assistant in three hospitals. A nurse discovered that the AI had recommended a medication for a patient explicitly listed as allergic to that drug class. Although the error was caught before discharge, the system showed clinically actionable misstatements in 0.98% of cases - 12 times higher than the vendor's claimed error rate of 0.08% [8].
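The gap between observed and claimed error rates is simple arithmetic (0.98% / 0.08% ≈ 12.25), but whether such a gap could be chance depends on how many cases were reviewed, which the source does not report. A minimal sketch, assuming hypothetical counts, that pairs the ratio with an exact one-sided binomial tail probability computed in log space so large case counts do not overflow:

```python
from math import exp, lgamma, log


def _binom_logpmf(k: int, n: int, p: float) -> float:
    """Log of the binomial probability of exactly k events in n trials (0 < p < 1)."""
    return (lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
            + k * log(p) + (n - k) * log(1 - p))


def observed_vs_claimed(errors: int, cases: int, claimed_rate: float):
    """Compare a locally observed error rate with a vendor's claimed rate.

    Returns (observed_rate, ratio_to_claim, p_value), where p_value is the
    probability of seeing at least `errors` errors in `cases` cases if the
    claimed rate were the true rate.
    """
    observed = errors / cases
    p_value = sum(exp(_binom_logpmf(k, cases, claimed_rate))
                  for k in range(errors, cases + 1))
    return observed, observed / claimed_rate, p_value
```

With a hypothetical 49 errors in 5,000 discharges, `observed_vs_claimed(49, 5000, 0.0008)` gives an observed rate of 0.98%, a ratio of about 12.25, and a vanishingly small p-value, so the vendor's figure would not be credible for that deployment.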

These examples underline the importance of continuous validation and strong governance to ensure AI systems remain safe throughout their use. While diagnostic errors pose direct risks to patients, data privacy breaches add another layer of concern.

Data Privacy Breaches and Their Impact

AI systems have introduced new paths to data breaches, often through automated processes. In September 2024, an AI transcription tool (Otter.ai) inadvertently joined a virtual hepatology rounds meeting at an Ontario hospital. Triggered by an outdated calendar invite on a former physician's personal device, the tool recorded the session and emailed a summary, including the personal health information (PHI) of seven patients, to 65 recipients, 12 of whom no longer worked at the hospital [10][11].

"That's a whole level of autonomy and independence that we haven't been prepared for but need to start thinking about." – Teresa Scassa, University of Ottawa [11]

Following this breach, the hospital blocked AI scribe tools via its firewall and updated its disciplinary policies. In Ontario, privacy breaches can result in fines of up to $1,000,000 for organizations and $200,000 for individuals [11].

Larger breaches have also occurred. At Serviceaide, a provider of AI-powered IT management software, a misconfigured Elasticsearch database left records for 483,126 Catholic Health patients, including medical records and login credentials, publicly accessible from September 19 to November 5, 2024. The exposure was discovered in November 2024 and disclosed in May 2025, and it has led to at least two proposed class-action lawsuits [9].

These incidents emphasize the need for strict access controls, constant monitoring, and robust third-party risk management to safeguard patient data in AI-driven systems.
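In the Ontario incident, PHI reached former staff and an unapproved AI note-taker took part in the meeting. A check of every recipient against current authorization data before PHI is distributed addresses the first failure, and the same check could gate meeting admission to address the second. A minimal sketch; the active-staff roster and the bot allowlist are hypothetical inputs an organization would have to keep current (for example, synced from HR records).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipient:
    email: str
    is_bot: bool = False  # True for AI note-takers, scribes, and other agents


def filter_phi_recipients(recipients, active_staff, approved_bots):
    """Split recipients into (allowed, blocked) lists of normalized emails.

    active_staff and approved_bots are sets of lowercase email addresses.
    Humans must be on the current staff roster; automated agents must be
    on an explicit allowlist, whatever calendar invite brought them in.
    """
    allowed, blocked = [], []
    for r in recipients:
        email = r.email.strip().lower()
        permitted = email in approved_bots if r.is_bot else email in active_staff
        (allowed if permitted else blocked).append(email)
    return allowed, blocked
```

Blocked addresses would be dropped from the distribution and logged for the privacy office rather than silently ignored.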

Failures in AI‑Driven Medical Devices

AI-powered medical devices have faced significant problems after authorization. One review found 182 recall events involving 60 FDA-authorized AI devices, with nearly half of the recalls occurring within a year of authorization [6].

The FDA's 510(k) pathway allows AI devices to enter the market by showing "substantial equivalence" to a device already on the market, often without new clinical trials. Dr. Alexander Everhart from Washington University Medical School noted:

"I think the FDA's traditional approach to regulating medical devices is not up to the task of ensuring AI‑enabled technologies are safe and effective." [6]

In late 2025, for example, the FDA issued a Class II recall for certain versions of the TruDi Navigation System because of visualization defects [12][13]. The FDA has now authorized at least 1,357 AI-enabled medical devices, double the number it had authorized by 2022, and this rapid growth raises questions about whether regulatory oversight can keep pace. Compounding the problem, layoffs in early 2025 cut the FDA's AI safety team from 40 to 25 members, further straining its ability to monitor these technologies [6].

| Incident Type | AI System/Device | Primary Error | Patient Outcome |
|---|---|---|---|
| Surgical Error | TruDi Navigation System | Misidentified instrument location | Carotid artery injury, stroke, skull removal [6] |
| Delayed Diagnosis | ChatGPT (Generative AI) | False reassurance about a persistent sore throat | Delayed care; stage‑4 cancer diagnosis [7] |
| Medication Error | suprmind.ai Discharge Assistant | Recommended a drug from a class the patient was allergic to | Near miss; caught by a nurse before discharge [8] |
| Diagnostic Error | Sonio Detect (Ultrasound) | Mislabeled fetal body parts | Potential for incorrect prenatal diagnosis [6] |

These failures highlight how AI systems can introduce risks that traditional medical devices do not. Addressing these challenges will require adaptive regulations and proactive governance to ensure patient safety as AI technologies continue to evolve.

Frameworks and Standards for AI Risk Management

Healthcare organizations face a growing need to manage risks associated with clinical AI systems. Adopting well-established frameworks for AI oversight can help address these challenges, offering structured steps to evaluate and monitor AI tools throughout their lifecycle. While these frameworks are technically voluntary, they are becoming the benchmark for AI governance in U.S. healthcare.

NIST AI Risk Management Framework

The National Institute of Standards and Technology (NIST) AI Risk Management Framework organizes AI oversight into four core functions: Govern (establishing policies and accountability), Map (establishing context and identifying risks), Measure (assessing and tracking risks and performance), and Manage (prioritizing and acting on risks) [14][16]. Govern is cross-cutting and informs the other three. Developed with input from various stakeholders, the framework considers risks arising from technical, social, and human factors [15][16].

In clinical settings, this framework translates into actionable safety protocols. For example:

- Govern: Define risk thresholds for clinical outcomes and clarify accountability when AI-assisted decisions lead to errors.

- Map: Identify the purpose, user base, and potential impact of each AI system to guide deployment decisions.

- Measure: Use metrics to monitor issues like model drift or performance degradation that could result in misdiagnoses.

- Manage: Implement human-in-the-loop mechanisms and response plans for AI system failures [14].
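The Measure function does not prescribe specific metrics. One widely used drift statistic is the population stability index (PSI), which compares the distribution of a model's inputs or scores at validation time with a recent window. A minimal sketch, assuming numeric scores; the common rule of thumb that a PSI above 0.1 warrants a look and above 0.25 signals significant shift is an industry convention, not a NIST threshold.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample (e.g. validation-time scores) and a recent sample.

    Bin edges are taken from baseline quantiles; eps keeps empty bins from
    producing log(0).
    """
    baseline = sorted(expected)
    edges = [baseline[int(len(baseline) * i / bins)] for i in range(1, bins)]

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = sum(v > e for e in edges)  # number of edges strictly below v
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    p_exp, p_act = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_exp, p_act))
```

Computed on a schedule (for example, weekly), a PSI crossing the organization's chosen threshold would open a review under the Manage function rather than trigger automatic retraining.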

In July 2024, NIST released the Generative AI Profile (NIST AI 600-1) to address risks like "confabulations" (hallucinations) and privacy concerns [14]. A draft Cyber AI Profile (NIST IR 8596) followed in December 2025, linking AI risk management with the Cybersecurity Framework 2.0 [14]. Agencies like the FDA now incorporate NIST principles into their guidelines for AI-enabled medical devices, making the framework increasingly relevant for compliance [14].

Healthcare organizations can start by cataloging all AI systems in use, including those in third-party devices or vendor platforms. Forming a cross-functional oversight team - with members from clinical, legal, and IT security backgrounds - ensures a well-rounded approach to patient safety. The NIST AI RMF Playbook provides actionable steps tailored to specific clinical scenarios [14][15]. Automated monitoring systems can alert teams to performance issues, helping determine when a model needs retraining or decommissioning [14].
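An inventory entry can double as a readiness check across the four NIST functions. The sketch below uses a hypothetical record layout (the field names are assumptions, not a NIST schema) and reports entries that still lack an accountable owner, monitoring metrics, or a fallback plan. The system and vendor names in the test are placeholders.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AISystem:
    name: str
    vendor: Optional[str]                 # None for internally built models
    clinical_use: str                     # intended purpose and setting (Map)
    owner: Optional[str] = None           # accountable person or committee (Govern)
    monitoring_metrics: list[str] = field(default_factory=list)  # what is tracked (Measure)
    fallback_plan: Optional[str] = None   # what happens if the system fails (Manage)


def inventory_gaps(systems):
    """Return {system name: [missing items]} for entries not yet ready for oversight."""
    gaps = {}
    for s in systems:
        missing = []
        if not s.owner:
            missing.append("owner")
        if not s.monitoring_metrics:
            missing.append("monitoring")
        if not s.fallback_plan:
            missing.append("fallback plan")
        if missing:
            gaps[s.name] = missing
    return gaps
```

Listing AI features embedded in third-party devices as their own entries keeps them from slipping past review.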

FDA Guidance on AI in Medical Devices

As of early 2025, the FDA has authorized over 1,000 AI-enabled medical devices through its premarket pathways [17]. The agency employs a Total Product Life Cycle (TPLC) approach, which covers design, development, maintenance, and documentation [17][18].

Troy Tazbaz, Director of the FDA's Digital Health Center of Excellence, highlighted the importance of addressing AI-specific challenges:

"The FDA has authorized more than 1,000 AI-enabled devices through established premarket pathways. As we continue to see exciting developments in this field, it's important to recognize that there are specific considerations unique to AI-enabled devices." [17]

The FDA's 2025 draft guidance addresses AI-specific risks such as data poisoning, model evasion, model inversion, and performance drift, all of which could compromise patient safety [19]. A key focus is postmarket performance monitoring: the draft recommends that manufacturers plan how they will manage risks once devices are in use [17]. This marks a shift from one-time authorization toward ongoing oversight, and Predetermined Change Control Plans (PCCPs) let manufacturers describe planned model updates in advance so those updates can be made without a new marketing submission [17].

Developers of AI-enabled devices are encouraged to engage with the FDA early in the development process [17]. Robust postmarket monitoring plans are vital for catching performance drift before it affects patients. Combined with industry best practices, these efforts can also strengthen AI cybersecurity.
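Catching performance drift requires comparing live results against a limit set in advance. A minimal sketch, assuming confirmed outcomes (for example, from chart review) eventually arrive for each positive case; the sensitivity floor, window size, and minimum case count are hypothetical values an organization would set in its own monitoring plan, not FDA figures.

```python
from collections import deque


class RollingSensitivityMonitor:
    """Track sensitivity over the most recent confirmed positive cases.

    Signals an alert when sensitivity in the window falls below a
    pre-specified floor, such as a limit written into a monitoring plan.
    """

    def __init__(self, floor: float, window: int = 200, min_cases: int = 50):
        self.floor = floor
        self.min_cases = min_cases
        self.hits = deque(maxlen=window)  # 1 = model caught a confirmed case, 0 = missed

    def record_confirmed_case(self, model_flagged: bool) -> bool:
        """Log one confirmed positive case; return True if an alert should fire."""
        self.hits.append(1 if model_flagged else 0)
        if len(self.hits) < self.min_cases:
            return False  # too few cases for a stable estimate
        return sum(self.hits) / len(self.hits) < self.floor

    @property
    def sensitivity(self):
        return sum(self.hits) / len(self.hits) if self.hits else None
```

With a floor of 0.8 and a 100-case window, the first alert fires once 21 of the last 100 confirmed cases were missed.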

HSCC Best Practices for AI Cybersecurity

The Health Sector Coordinating Council (HSCC), a coalition of over 480 organizations, published 2026 guidance through its AI Cybersecurity Task Group, which includes 115 healthcare entities [20]. The council operates under the principle:

"Cyber safety is patient safety." [20]

HSCC’s guidance is organized into five key areas:

- Secure by Design: Use tools like the AI Bill of Materials (AIBOM) and Trusted AI BOM (TAIBOM) to track algorithm origins and defend against data poisoning.

- Governance: Apply a "5-level autonomy scale" to classify AI systems by their independence, aligning oversight with the associated risks.

- Operations: Develop incident response playbooks for AI-specific issues, such as model drift or poisoning.

- Supply Chain: Mitigate vendor risks using contract clauses that demand transparency about algorithmic bias and data privacy.

- Education: Standardize terminology to ensure consistent understanding of AI risks among clinicians and administrators [20].

Although voluntary, HSCC guidelines have gained traction due to their broad support from both public and private sectors. Healthcare organizations should audit AI tools using AIBOMs to understand the training data and software components behind medical devices. The 5-level autonomy scale helps determine when human oversight is needed versus when systems can operate independently. Including HSCC-recommended clauses in vendor contracts ensures transparency, facilitates performance monitoring, and establishes recovery protocols for emerging risks.
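An AIBOM review can be partly automated by checking that each required provenance field is filled in, and the autonomy level can drive how much human review a tool receives. The sketch below is hypothetical throughout: the field list and the level-to-oversight mapping are placeholders, not HSCC's published AIBOM format or its autonomy-scale definitions, which an organization would substitute.

```python
# Hypothetical oversight policy keyed by autonomy level (1 = least autonomous).
# These meanings are placeholders, NOT the HSCC 5-level definitions.
OVERSIGHT_BY_LEVEL = {
    1: "periodic audit",
    2: "periodic audit",
    3: "clinician review of flagged outputs",
    4: "clinician sign-off on every output",
    5: "clinician sign-off on every output plus committee approval to deploy",
}

# Hypothetical minimum provenance fields for an AIBOM record.
REQUIRED_AIBOM_FIELDS = (
    "model_name", "version", "supplier",
    "training_data_sources", "base_model", "dependencies",
)


def review_ai_tool(aibom: dict, autonomy_level: int):
    """Return (missing AIBOM fields, required oversight) for one AI tool."""
    if autonomy_level not in OVERSIGHT_BY_LEVEL:
        raise ValueError(f"autonomy level must be 1-5, got {autonomy_level}")
    missing = [f for f in REQUIRED_AIBOM_FIELDS if not aibom.get(f)]
    return missing, OVERSIGHT_BY_LEVEL[autonomy_level]
```

A tool with missing provenance fields could be held at procurement until the vendor supplies them, which is where the contract clauses come in.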

How Censinet Addresses Patient Safety in Clinical AI

As clinical AI continues to evolve, ensuring patient safety is more critical than ever. Censinet steps up with practical tools designed to manage AI-related risks effectively. Their platform integrates three solutions that align with key frameworks - NIST AI RMF, FDA guidance, and HSCC best practices - delivering measurable outcomes in reducing risks and improving operational workflows.

Censinet RiskOps™ for AI Governance

Censinet RiskOps™ simplifies risk management by automating assessments. It scans vendor contracts, questionnaires, and supporting evidence to identify high-risk AI systems, helping mitigate cybersecurity threats and prevent cascading failures. The platform also provides real-time compliance dashboards that track metrics related to algorithmic bias and cybersecurity vulnerabilities.

For example, a major hospital network used RiskOps™ to flag bias issues in AI imaging tools, cutting assessment time by 80%. This proactive approach allowed the clinical team to address potential problems before impacting patients. The results? A 40% drop in vulnerabilities and a 50% reduction in AI-related safety incidents [21][23].

Organizations using RiskOps™ report impressive outcomes, including 60% faster risk mitigation and 95% compliance rates. In a 2025 Johns Hopkins study, six months of deployment resulted in zero escalated AI errors [21][23].

Human-in-the-Loop Oversight with Censinet AI

RiskOps™ may handle the automation, but Censinet AI ensures human expertise validates flagged risks. This hybrid model addresses critical issues like algorithmic bias and decision-making errors. The system flags risks - such as potential misdiagnoses - and routes them to clinicians for final review. Every intervention is logged to create a transparent audit trail, reinforcing both trust and compliance.
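An audit trail is most useful when it is tamper-evident. One generic technique, shown here as an assumption and not as a description of how Censinet implements logging, is a hash chain: each log entry stores the SHA-256 hash of the previous entry, so altering or deleting an earlier record breaks verification.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log where each entry stores the hash of the previous entry."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash every field except the entry's own hash, in a stable key order.
        payload = {k: v for k, v in body.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, flag_id: str, reviewer: str, decision: str, note: str = "") -> dict:
        """Log a clinician's decision on a flagged AI output."""
        body = {
            "flag_id": flag_id,
            "reviewer": reviewer,
            "decision": decision,  # e.g. "accepted" or "overridden"
            "note": note,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute the chain; False means some entry was altered or removed."""
        prev = self.GENESIS
        for e in self.entries:
            if e["prev_hash"] != prev or e["hash"] != self._digest(e):
                return False
            prev = e["hash"]
        return True
```

Storing the latest hash somewhere the log's writers cannot modify would also make truncation of the newest entries detectable.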

In 2025, a California medical center used Censinet AI to oversee an AI sepsis detection tool. The system flagged biased predictions, allowing physicians to intervene and prevent mistreatment for 15 patients. These documented actions not only strengthened FDA reporting but also built accountability [21][23].

Censinet's CTO shared that this approach prevented 25% of potential errors during a pilot program with AI radiology tools [22][24]. Findings are sent directly to governance committees or stakeholders for review, ensuring every decision aligns with ethical standards. The AI risk dashboard aggregates all data in real time, making it a central hub for oversight.

Censinet Connect™ for Third-Party Risk Management

Third-party AI vendors can introduce additional risks, particularly in the supply chain. Censinet Connect™ tackles this by offering a pre-populated network of over 5,000 healthcare AI suppliers. With one-click assessments based on standardized questionnaires, the platform streamlines evaluations, cutting onboarding times by 70%, as seen in Emory Healthcare’s TPRM program [22][24].

Continuous monitoring ensures that updates - like AI retraining or breach notifications - are tracked via API integrations. For instance, in 2024, Connect™ alerted users about a firmware flaw in an AI pacemaker, enabling teams to patch the issue and reduce failure risks by 35% [21][23].

These tools seamlessly align with regulatory frameworks. After adopting Censinet's solutions, a healthcare consortium reported 90% alignment during audits [22][24]. To maximize results, organizations are encouraged to start with a 30-day RiskOps™ pilot for high-risk vendors, integrate Connect™ for vendor onboarding, and enable Human-in-the-Loop oversight via API connections to EHRs. Prioritizing areas like imaging AI typically delivers ROI in just 90 days through reduced audit expenses [22][24].

Conclusion

Clinical AI has the potential to transform patient care, but it requires a careful, methodical approach to ensure its safe and effective use. Without proper oversight, AI tools can fall short - failing to detect sepsis in more than two-thirds of cases or underestimating disease severity for Black patients by 26.3% [1]. These challenges highlight why rigorous oversight must be a cornerstone of AI implementation in healthcare.

Achieving success in clinical AI rests on three critical pillars. First, establish multidisciplinary governance committees to evaluate AI tools against both technical and ethical benchmarks. Second, demand full transparency from vendors regarding their training data, limitations, and performance metrics - then validate these metrics within your own patient population. Third, use automated monitoring systems to track performance over time, flagging bias or drift with predefined thresholds that prompt immediate reviews. Together, these steps can help healthcare organizations move from isolated efforts to a unified culture of patient safety.

"The same vigilance that transformed hospital infection control from an afterthought into a cornerstone of patient safety must now be applied to algorithmic care." - Ryan Sears, Author [1]

The choice isn’t between innovation and safety - they must go hand in hand. By building strong oversight structures, requiring external validation, and ensuring human involvement at every stage, healthcare organizations can bridge the gap between theoretical performance and real-world safety. Established frameworks like those from NIST and the FDA offer actionable guidance to make this oversight both practical and enduring. And with regulatory requirements now emphasizing ongoing evaluation, the message is clear: continuous monitoring of AI systems is essential for protecting patients.

The tools to ensure safe clinical AI are already available. The question is whether healthcare leaders will act with the urgency that this responsibility demands.

FAQs

How can we validate an AI model on our own patient population before go-live?

Before launch, test the model retrospectively on a representative sample of your own historical cases, comparing its outputs with confirmed diagnoses or outcomes, to confirm that it meets the necessary standards for clinical effectiveness, accuracy, and safety in your specific patient population. The goal is to ensure the model reliably performs its intended tasks while adhering to all regulatory requirements.

Make sure to include robustness testing, which evaluates how well the model handles variations in demographics, data quality, or unexpected inputs. This step is essential to confirm consistent performance across different scenarios. By embedding these practices into your risk management framework, you can help maintain the model's safety and reliability over time.
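One way to compare local results with vendor claims is to compute sensitivity and specificity on retrospective local cases with 95% Wilson score intervals, then check whether each vendor claim falls within the plausible range. A minimal sketch; the counts and claims in the usage example are hypothetical, and running the same report separately for each demographic subgroup gives a basic robustness check.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96):
    """Wilson score interval for a proportion; z=1.96 gives roughly 95% coverage."""
    if n == 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


def local_validation_report(tp, fn, tn, fp, vendor_sensitivity, vendor_specificity):
    """Compare local sensitivity/specificity with vendor claims.

    A claim is marked unsupported when it lies above the upper 95% bound
    observed on local data.
    """
    report = {}
    for name, hits, total, claim in (
        ("sensitivity", tp, tp + fn, vendor_sensitivity),
        ("specificity", tn, tn + fp, vendor_specificity),
    ):
        low, high = wilson_interval(hits, total)
        report[name] = {
            "observed": hits / total,
            "ci95": (round(low, 3), round(high, 3)),
            "vendor_claim_supported": claim <= high,
        }
    return report
```

For hypothetical counts of 80 true positives, 20 false negatives, 900 true negatives, and 100 false positives, a vendor sensitivity claim of 0.95 lies above the local interval (about 0.71 to 0.87) and is flagged as unsupported, while a specificity claim of 0.91 lies inside the local interval (about 0.88 to 0.92).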

What should our post-deployment monitoring track to catch AI drift, bias, or hallucinations?

Post-deployment monitoring needs to focus on tracking performance metrics to identify any signs of AI drift or degradation, which can occur due to changes in clinical practices or shifts in data. It’s also important to regularly evaluate bias across different patient groups. By using fairness metrics and interpretability tools, you can help prevent unequal treatment or outcomes.

Additionally, keep an eye out for hallucinations by cross-checking AI outputs with established clinical standards. Human oversight plays a key role in catching and addressing these issues. To tie it all together, a well-structured governance framework is crucial for spotting and managing risks effectively.
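Some hallucinations can be caught with deterministic checks that do not depend on the model at all. A minimal sketch of one such check, prompted by the discharge-assistant near miss described earlier: compare recommended drugs against the patient's documented allergy classes. The drug-to-class lookup is a hypothetical input; a real deployment would draw it from a curated drug knowledge base, and the toy entries in the test are only for illustration.

```python
def allergy_conflicts(recommended_meds, patient_allergy_classes, drug_class_lookup):
    """Return (conflicts, unknown) for a list of AI-recommended drugs.

    drug_class_lookup maps a lowercase drug name to a set of lowercase class
    names and should come from a curated source, never from the AI model.
    conflicts: [(drug, [matching allergy classes])]
    unknown:   drugs missing from the lookup, which need human review.
    """
    allergies = {a.lower() for a in patient_allergy_classes}
    conflicts, unknown = [], []
    for med in recommended_meds:
        classes = drug_class_lookup.get(med.lower())
        if classes is None:
            unknown.append(med)
        elif classes & allergies:
            conflicts.append((med, sorted(classes & allergies)))
    return conflicts, unknown
```

Treating unknown drugs as needing review, rather than as safe, keeps a gap in the lookup table from becoming a silent pass.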

How can we reduce AI supply-chain and data-poisoning risks from vendors and updates?

Healthcare organizations can take several steps to address risks tied to AI supply chains and data poisoning:

- Conduct thorough vendor evaluations: Prioritize vendors that demonstrate strong security measures, compliance with regulations, and effective strategies to reduce bias in AI systems.

- Implement clear contractual protections: Define terms for liability, data ownership, and responsibilities for updates and maintenance within vendor agreements.

- Increase transparency: Regularly benchmark vendors and actively monitor their adherence to compliance standards.

- Commit to ongoing testing: Continuously test AI systems for vulnerabilities and risks from adversarial attacks.

- Develop robust governance frameworks: Align governance practices with NIST AI RMF standards and oversee AI models throughout their lifecycle.
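Vendor updates can be gated on a frozen, locally labeled test set: run the current and candidate versions on the same cases and reject the update if key metrics fall by more than a preset margin. A minimal sketch with hypothetical parameters (score threshold 0.5, maximum drop of 2 percentage points). A gate like this catches broad performance regressions, but targeted poisoning such as a backdoor can leave test-set accuracy unchanged, so it complements vendor transparency rather than replacing it.

```python
def accept_model_update(baseline_scores, candidate_scores, labels, threshold=0.5, max_drop=0.02):
    """Decide whether a vendor model update may replace the current version.

    Both score lists come from running each model on the same frozen test set,
    aligned with `labels` (1 = condition present, 0 = absent).
    Returns (accepted, reasons).
    """
    pos = sum(labels)
    neg = len(labels) - pos
    if pos == 0 or neg == 0:
        raise ValueError("test set must contain both positive and negative cases")

    def sens_spec(scores):
        tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= threshold)
        tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s < threshold)
        return tp / pos, tn / neg

    base_sens, base_spec = sens_spec(baseline_scores)
    cand_sens, cand_spec = sens_spec(candidate_scores)
    reasons = []
    if base_sens - cand_sens > max_drop:
        reasons.append(f"sensitivity fell from {base_sens:.3f} to {cand_sens:.3f}")
    if base_spec - cand_spec > max_drop:
        reasons.append(f"specificity fell from {base_spec:.3f} to {cand_spec:.3f}")
    return not reasons, reasons
```

Keeping the test set frozen and out of the vendor's hands is what makes the comparison meaningful across successive updates.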

Taking these actions can help healthcare organizations safeguard their systems and maintain trust in their AI tools.